Responsible AI governance: Can you trust your machines?

Summary

Responsible AI governance is the framework that ensures AI systems are reliable, fair, and aligned with business and regulatory expectations. Moving beyond basic compliance, it involves clear ownership, documented decision-making, explainability, and continuous monitoring across the AI lifecycle. The article highlights common gaps organizations face—such as lack of fairness testing and oversight—and emphasizes that strong governance is not just about risk mitigation, but a strategic advantage that enables faster, safer, and more confident AI adoption.

In 2018, a major European bank deployed a credit-scoring model that approved loans at a speed no human underwriter could match. By 2020, regulators found it had systematically disadvantaged applicants from certain postcodes. The problem? Those postcodes were strongly correlated with ethnicity. The model was catastrophically ungoverned.

This pattern has been repeated hundreds of times since, across different domains. For instance, we've read about an AI recruitment tool that filtered out women, a predictive policing system that over-surveilled minority neighborhoods, and a healthcare triage algorithm that under-prioritised people of certain ethnicities. In each case, the failure lay in the absence of the structures, processes, and accountabilities that should have surrounded the code from day one.

Responsible AI governance is the discipline that fills that absence, and it has become one of the most strategically important capabilities an organization can build.

Let's decode AI governance: What it is and what it is not

First things first, AI governance is not the same as AI compliance. Compliance is a reactive approach where you meet a regulatory standard, check a box, and move on. Governance, on the other hand, is the set of principles, processes, roles, and technical controls that ensure AI systems behave as intended, remain aligned with organizational values, and are correctable when they don't.

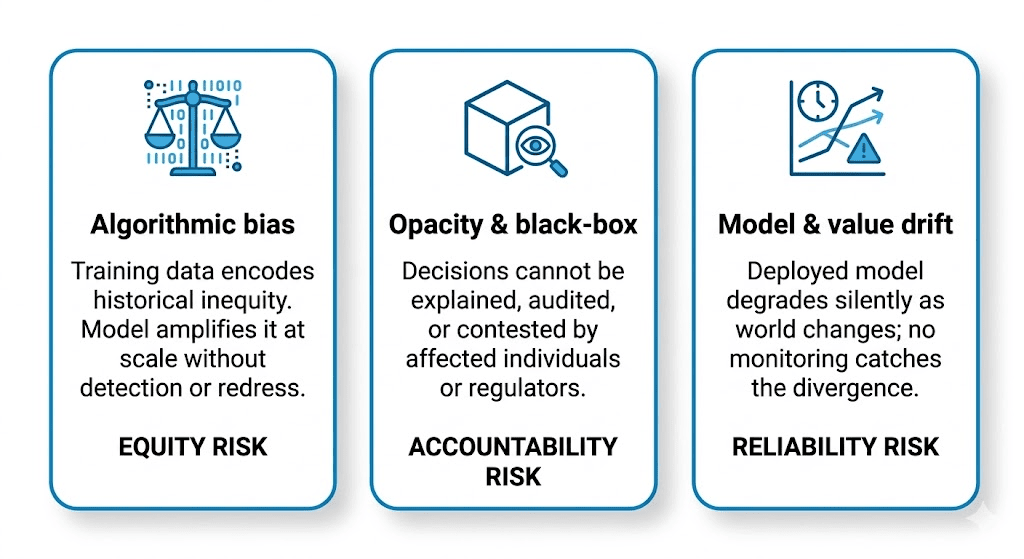

Here are three failure modes of AI that call for AI governance:

These three failure modes aren't hypothetical. They're well-documented, frequently litigated, and increasingly subject to hard regulatory enforcement. The EU AI Act, in force since 2024, imposes mandatory conformity assessments, transparency obligations, and human oversight requirements on high-risk AI systems, with fines of up to 7% of global annual turnover for the most serious violations. The question is no longer whether to govern AI; it's whether you'll build governance proactively or have it imposed on you reactively.

Built proactively, governance becomes an enabler. It lets organizations deploy AI faster and with more confidence, because they've built the internal scaffolding to catch problems early rather than manage disasters late.

The four pillars of mature AI governance

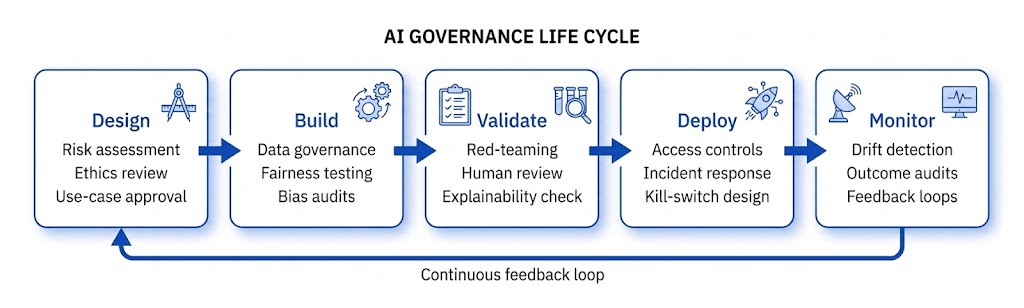

Mature AI governance is a set of interlocking capabilities that operate continuously across the entire AI life cycle, from ideation through deployment to decommissioning.

There are four pillars for this life cycle:

Pillar 1: Accountable ownership

Every AI system needs a named owner who is responsible for its behavior in production and not just at launch. This is distinct from the engineering team that built it. In practice, this means establishing an AI risk committee (or equivalent) with representation from legal, compliance, data science, product, and affected business domains. The committee approves high-risk deployments, reviews audit findings, and has authority to suspend systems when risks materialize.

Pillar 2: Documented decision trails

Every significant decision made during a model's development, such as which dataset was chosen and why, what fairness metric was optimized, and what trade-offs were accepted, should be captured in a living document, often called a model card or AI fact sheet. These aren't compliance artifacts gathering dust. They're the institutional memory that lets you answer a regulator's question, diagnose a production failure, or onboard a new team member.
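A model card can start as a small structured record that lives alongside the model. The sketch below is a minimal illustration, not a standard schema: the `ModelCard` and `Decision` classes, their field names, and the example values are all hypothetical.

```python
from dataclasses import dataclass, field, asdict
from datetime import date
import json


@dataclass
class Decision:
    """One recorded development decision and its rationale."""
    topic: str        # e.g. "dataset", "fairness metric", "trade-off"
    choice: str
    rationale: str
    decided_by: str
    decided_on: str   # ISO date kept as text so it serializes cleanly


@dataclass
class ModelCard:
    """Hypothetical minimal model card; field names are illustrative."""
    model_name: str
    version: str
    owner: str
    intended_use: str
    decisions: list = field(default_factory=list)

    def record(self, topic, choice, rationale, decided_by, decided_on=None):
        """Append a decision so the card stays a living document."""
        stamp = decided_on or date.today().isoformat()
        self.decisions.append(Decision(topic, choice, rationale, decided_by, stamp))

    def to_json(self):
        """Serialize the card so it can be versioned next to the model."""
        return json.dumps(asdict(self), indent=2)


card = ModelCard(
    model_name="credit-scoring",
    version="1.0.0",
    owner="Head of Retail Credit Risk",
    intended_use="Rank consumer loan applications for underwriter review",
)
card.record(
    topic="fairness metric",
    choice="equal opportunity",
    rationale="Qualified applicants should be approved at similar rates across groups",
    decided_by="AI risk committee",
    decided_on="2025-01-15",
)
print(card.to_json())
```

Storing the card as JSON in the same repository as the model means every change to a decision shows up in version history, which is what makes it usable as a decision trail.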

Pillar 3: Explainable by design

The right to explanation is becoming increasingly codified in laws such as GDPR Article 22, the EU AI Act, and various sector-specific regulations. These all require that individuals affected by automated decisions can receive a meaningful account of the logic involved.

For businesses, this has real architectural implications: inherently interpretable models, such as logistic regression and decision trees, are preferable to black-box deep learning where decisions have legal or high-stakes consequences. Gradient-boosted models are a middle ground: more tractable to explain than deep networks, but large ensembles still typically need attribution tooling.

When you do use complex models, you need a post hoc explanation layer built into the system from day one, not bolted on after an audit flag.
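One way to make explanation part of the system rather than an afterthought is to return an explanation record with every decision. The sketch below uses a simple perturbation-based attribution (swap each feature for a baseline value and measure how the score moves); it is a stand-in for established methods such as SHAP or LIME, not a replacement for them. The `black_box_score` function, its weights, the baseline values, and the applicant are invented for illustration.

```python
import math


def black_box_score(features):
    """Stand-in for an opaque model: returns an approval probability.

    The weights are invented for illustration only.
    """
    z = (0.00004 * features["income"]
         - 3.0 * features["debt_ratio"]
         + 0.1 * features["years_employed"]
         - 1.0)
    return 1.0 / (1.0 + math.exp(-z))


def explain(predict, features, baseline):
    """Attribute a score to features by swapping each one for a baseline value.

    Attribution = score(original) - score(feature replaced by baseline),
    so a negative value means the feature pulled the score down.
    """
    original = predict(features)
    attributions = {}
    for name in features:
        perturbed = dict(features)
        perturbed[name] = baseline[name]
        attributions[name] = original - predict(perturbed)
    return original, attributions


def decide_with_explanation(predict, features, baseline, threshold=0.5):
    """Return the decision together with its explanation record."""
    score, attributions = explain(predict, features, baseline)
    ranked = sorted(attributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return {
        "approved": score >= threshold,
        "score": round(score, 3),
        "top_factors": [(name, round(delta, 3)) for name, delta in ranked],
    }


# Baseline represents a hypothetical "typical" applicant.
baseline = {"income": 45000, "debt_ratio": 0.30, "years_employed": 5}
applicant = {"income": 30000, "debt_ratio": 0.55, "years_employed": 1}
print(decide_with_explanation(black_box_score, applicant, baseline))
```

Because the explanation is produced in the same call as the decision, it can be logged and surfaced to the affected individual without a separate reconstruction step after the fact.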

Pillar 4: Continuous monitoring and drift detection

A model validated against 2023 data and still running in 2026 on unmonitored production traffic is a liability waiting to be discovered. Data drift, concept drift, and fairness drift are all real phenomena that degrade models silently. Monitoring must track not just accuracy but also equity metrics, such as equal opportunity, demographic parity, and predictive parity, on an ongoing basis, with alert thresholds and defined remediation paths.
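The three equity metrics named above can be computed per group from logged decisions and compared against alert thresholds. The sketch below is a minimal illustration covering only the fairness side of monitoring (not data or concept drift). The function names, the toy batch, and the threshold values are assumptions; real thresholds are a policy decision.

```python
from collections import defaultdict


def group_rates(records):
    """Compute per-group selection rate, true positive rate, and precision.

    Each record is (group, y_true, y_pred) with binary labels.
    A rate is None when its denominator is zero for that group.
    """
    counts = defaultdict(lambda: {"n": 0, "pred_pos": 0, "actual_pos": 0, "true_pos": 0})
    for group, y_true, y_pred in records:
        c = counts[group]
        c["n"] += 1
        c["pred_pos"] += y_pred
        c["actual_pos"] += y_true
        c["true_pos"] += 1 if (y_true == 1 and y_pred == 1) else 0

    def ratio(num, den):
        return num / den if den else None

    return {
        g: {
            "selection_rate": ratio(c["pred_pos"], c["n"]),    # demographic parity
            "tpr": ratio(c["true_pos"], c["actual_pos"]),      # equal opportunity
            "precision": ratio(c["true_pos"], c["pred_pos"]),  # predictive parity
        }
        for g, c in counts.items()
    }


def fairness_gaps(rates):
    """Largest between-group difference per metric, ignoring undefined rates."""
    gaps = {}
    for metric in ("selection_rate", "tpr", "precision"):
        values = [r[metric] for r in rates.values() if r[metric] is not None]
        gaps[metric] = max(values) - min(values) if len(values) >= 2 else 0.0
    return gaps


def check_alerts(gaps, thresholds):
    """Return the metrics whose gap exceeds its alert threshold."""
    return sorted(m for m, gap in gaps.items() if gap > thresholds[m])


# Toy batch of production decisions: (group, actual outcome, model decision).
batch = [
    ("A", 1, 1), ("A", 1, 1), ("A", 0, 1), ("A", 0, 0),
    ("B", 1, 1), ("B", 1, 0), ("B", 0, 0), ("B", 0, 0),
]
# Placeholder thresholds, chosen only for the example.
thresholds = {"selection_rate": 0.2, "tpr": 0.2, "precision": 0.2}

rates = group_rates(batch)
gaps = fairness_gaps(rates)
print(gaps)
print("alerts:", check_alerts(gaps, thresholds))
```

Running a check like this on each scoring window, rather than once at validation time, is what turns a fairness audit into monitoring; each alert should map to a defined remediation path.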

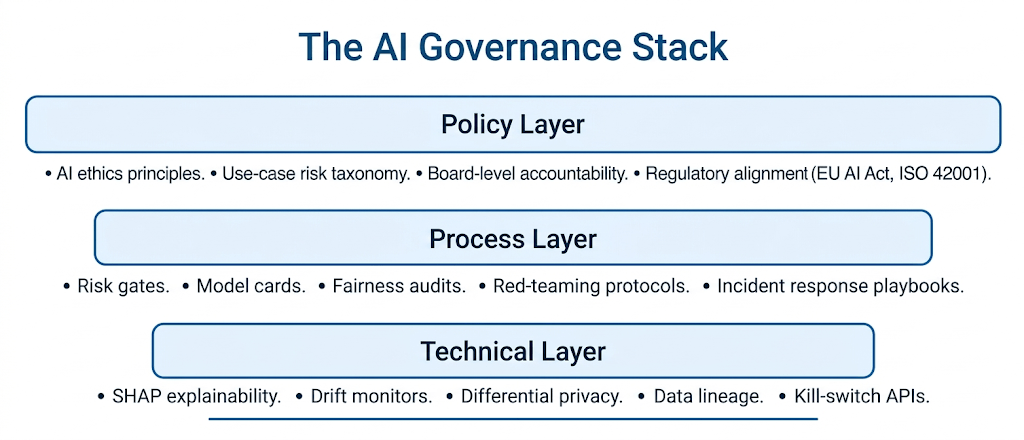

The AI governance stack: From policy to code

Responsible AI governance operates across three layers simultaneously, and all three must be present for the system to hold.

The three layers are mutually reinforcing and mutually load-bearing.

What most organizations are getting wrong with AI governance

In practice, most enterprises fall into one of two traps. The first is compliance-only governance: hiring a chief AI ethics officer, publishing a principles document, and calling it done. The second is governance theater: elaborate process documentation that has no real relationship to how models are actually built and deployed. Both leave the organization exposed.

| Common gap | What it looks like | Risk level |
|---|---|---|
| No pre-deployment fairness testing | Models assessed only on aggregate accuracy; no demographic breakdown | High |
| Undocumented data lineage | Training data provenance unknown; scrubbing historical bias impossible | High |
| No post-deployment monitoring | Model treated as static artifact; drift goes undetected for months | High |
| Ethics review as a checkbox | Review happens after build with no authority to stop deployment | Medium |
| No human oversight for high-stakes decisions | Fully automated decisions in hiring, lending, or healthcare triage | High |
| Vendor AI treated as black box | Third-party models deployed without governance requirements passed through production | Medium |

Each of these gaps is fixable, but only with a realistic enterprise strategy. The technical solutions exist. What's usually missing is the organizational structure to mandate, resource, and enforce them.
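Enforcement can be partly encoded as a release gate that refuses deployment while any of the gaps listed above remains open. The sketch below is one hypothetical way to express that; the `ReleaseCandidate` fields and the rule that human review is mandatory only for high-stakes use cases are assumptions, not an established standard.

```python
from dataclasses import dataclass


@dataclass
class ReleaseCandidate:
    """Hypothetical governance evidence attached to a model release."""
    name: str
    high_stakes: bool
    fairness_report_by_group: bool
    data_lineage_documented: bool
    monitoring_configured: bool
    human_review_step: bool
    ethics_review_approved: bool


def blocking_gaps(rc):
    """List the governance gaps that should stop this release."""
    gaps = []
    if not rc.fairness_report_by_group:
        gaps.append("no pre-deployment fairness testing")
    if not rc.data_lineage_documented:
        gaps.append("undocumented data lineage")
    if not rc.monitoring_configured:
        gaps.append("no post-deployment monitoring")
    if not rc.ethics_review_approved:
        gaps.append("ethics review not approved")
    # Assumption: human oversight is mandatory only for high-stakes use cases.
    if rc.high_stakes and not rc.human_review_step:
        gaps.append("no human oversight for high-stakes decisions")
    return gaps


def can_deploy(rc):
    """A release proceeds only when no blocking gap remains."""
    return not blocking_gaps(rc)


candidate = ReleaseCandidate(
    name="loan-approval-v2",
    high_stakes=True,
    fairness_report_by_group=True,
    data_lineage_documented=False,
    monitoring_configured=True,
    human_review_step=False,
    ethics_review_approved=True,
)
print(can_deploy(candidate), blocking_gaps(candidate))
```

A gate like this only has teeth if the pipeline cannot bypass it, which is an organizational decision as much as a technical one.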

Responsible AI governance as a competitive advantage

Consider what governance actually delivers: faster procurement cycles because enterprise customers have confidence in your risk posture; lower regulatory exposure in an environment of rapidly tightening oversight; reduced reputational risk from model failures that make front pages; and the internal trust required to get high-stakes AI initiatives past legal and compliance in the first place.

The organizations that have invested in this infrastructure, with rigorous model cards, pre-deployment fairness audits, interpretability-by-design, and real-time monitoring, aren't just safer. They're faster, because they've built the institutional confidence to deploy without hand-wringing at every step.
