Digital twins: The next frontier in intelligent enterprise operations

Summary

This article explains how digital twins are transforming enterprise operations by creating real-time, data-driven replicas of physical systems that enable prediction, optimization, and smarter decision-making. It outlines what digital twins are, how they work, their strategic benefits for CXOs, and how they differ from traditional simulation models. The article also highlights real-world applications across maintenance, planning, efficiency, innovation, and risk management, while addressing the practical challenges of implementation and offering a clear roadmap for organizations to adopt digital twin technologies at scale.

In an era where IT and physical operations are converging faster than ever, organizations are looking for smarter ways to predict failures, optimize performance, and make data-driven decisions at scale. Digital twins have emerged as one of the most transformative technologies in this shift, providing real-time, intelligent replicas of systems that help leaders simulate outcomes, reduce risk, and drive operational excellence. For CXOs navigating complex, hybrid environments, digital twins offer a powerful pathway to enhanced visibility, predictive insight, and strategic optimization.

What is a digital twin?

A digital twin is a real-time virtual replica of a physical asset, system, or process that continuously synchronizes with its real-world counterpart through data, analytics, and automation. Unlike static models, digital twins evolve dynamically as conditions change, providing deep operational visibility, predictive intelligence, and a safe environment for simulation.

For example, consider a large data center where sensors track temperature, power consumption, airflow, and server loads in real time. A digital twin of this environment continuously mirrors these conditions and simulates how changes such as shifting workloads, adjusting cooling, or adding new hardware will impact performance. This helps teams predict potential hot spots, prevent failures, and optimize energy usage before making any physical adjustments.

Key components of a digital twin architecture

Building a functional digital twin relies on a robust, integrated architecture composed of several critical layers:

Physical entity: This is the real-world asset, system, or environment that the twin mirrors. In IT operations, this can range from granular components like individual servers and cooling systems to complex structures like entire data centers, hybrid cloud zones, or mission-critical application delivery chains.

Data ingestion layer: This is the high-bandwidth pipeline responsible for gathering operational intelligence. It carries high-volume streams from sources like IoT sensors (for physical assets), telemetry systems, observability data (metrics, traces, and logs), and APIs from existing operational platforms (e.g., CMDBs, ERPs). Data quality and low latency are paramount here.

Digital model & analytics engine: This is the brain of the twin. It uses a combination of techniques to translate raw data into actionable behavior:

Machine learning (AI/ML): Identifies subtle patterns, detects anomalies, and forecasts future states (e.g., mean time between failures, predicted utilization).

Physics-based modeling: Incorporates known physical laws or engineering specifications to accurately simulate component interaction (e.g., how heat propagates in a server rack).

Statistical algorithms: Used for correlation and root cause analysis across diverse data points.

Integration & feedback loop: This is the connective layer that establishes the crucial two-way communication. It allows the twin to not only receive data but also to send prescriptive insights or automated control signals back to the physical operations, enabling optimization, automated scaling, or proactive maintenance scheduling.
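To make the layers above concrete, here is a minimal Python sketch of how they might fit together. It is an illustration under stated assumptions, not a reference design: the `Reading` schema, the `DigitalTwin` class, the rolling z-score rule, and the `on_anomaly` callback are all hypothetical names chosen for this example, and a real analytics engine would combine ML, physics-based, and statistical models rather than a single threshold.

```python
from dataclasses import dataclass, field
from statistics import mean, pstdev
from typing import Callable, Dict, List, Optional


@dataclass
class Reading:
    """One telemetry sample from the physical entity (hypothetical schema)."""
    metric: str
    value: float


@dataclass
class DigitalTwin:
    """Toy twin: mirrors recent readings and flags statistical outliers."""
    name: str
    window: int = 20           # samples kept per metric
    z_threshold: float = 3.0   # standard deviations that count as anomalous
    history: Dict[str, List[float]] = field(default_factory=dict)
    # Feedback hook: called with (metric, value) when an anomaly is detected.
    on_anomaly: Optional[Callable[[str, float], None]] = None

    def ingest(self, reading: Reading) -> bool:
        """Ingest one reading; return True if it looks anomalous."""
        past = self.history.setdefault(reading.metric, [])
        anomalous = False
        if len(past) >= 5:  # wait for some history before judging
            mu, sigma = mean(past), pstdev(past)
            if sigma > 0 and abs(reading.value - mu) / sigma > self.z_threshold:
                anomalous = True
                if self.on_anomaly is not None:
                    self.on_anomaly(reading.metric, reading.value)
        past.append(reading.value)
        del past[:-self.window]  # keep only the most recent samples
        return anomalous


# Example: a rack twin that records a (hypothetical) corrective action.
actions: List[tuple] = []
rack = DigitalTwin("rack-42", on_anomaly=lambda m, v: actions.append((m, v)))
for temp in [22.0, 22.5, 21.8, 22.1, 22.3, 22.2]:
    rack.ingest(Reading("inlet_temp_c", temp))
spike_flagged = rack.ingest(Reading("inlet_temp_c", 35.0))
```

In this sketch the callback stands in for the feedback loop: in practice it might open a ticket or call an orchestration API instead of appending to a list.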

Types of digital twins

Digital twins can be categorized based on their scope and complexity, ranging from granular parts to vast enterprise systems:

Component twins: These are the most granular level, representing individual, often modular, parts. Examples include a virtual model of a CPU, an HVAC cooling component, a specific storage array, or a single valve in an industrial pipeline. They focus on micro-level performance and wear.

Asset twins: These represent a complete, standalone machine or system. Examples include a virtual replica of a server rack, an industrial robotic arm, a specific turbine, or an entire fleet vehicle. They integrate multiple component twins to monitor the asset's overall health and operational status.

System twins: These are models of an entire operational facility or connected network of assets. Examples include a digital replica of a full data center (mirroring power, cooling, and compute capacity), a cloud zone, or an entire manufacturing cell/production line. They focus on system-wide efficiency and dependencies.

Process twins: These move beyond physical assets to represent end-to-end operational workflows. They enable the simulation and optimization of non-physical procedures, such as business processes (e.g., order fulfillment cycle), supply chain logistics, or software deployment pipelines (CI/CD).

Enterprise twins: This is the highest level of complexity, creating an organization-wide digital ecosystem. An enterprise twin spans multiple physical facilities, connects various system twins, and integrates with key business processes. CXOs use it for strategic planning, long-term forecasting, and optimizing enterprise-wide resource allocation.
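The levels above nest naturally: asset twins aggregate component twins, and system twins aggregate asset twins. The following Python sketch shows one way that composition could be modeled. The `TwinNode` class and the "weakest child" roll-up rule are assumptions made for illustration; real platforms may weight or combine health signals very differently.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TwinNode:
    """A twin at any level; leaf nodes carry their own health score in [0, 1]."""
    name: str
    level: str                 # e.g. "component", "asset", "system"
    own_health: float = 1.0
    children: List["TwinNode"] = field(default_factory=list)

    def health(self) -> float:
        """Assumed roll-up rule: a parent is only as healthy as its weakest child."""
        if not self.children:
            return self.own_health
        return min(child.health() for child in self.children)


# Component twins roll up into an asset twin, which rolls up into a system twin.
fan = TwinNode("fan-1", "component", own_health=0.4)
psu = TwinNode("psu-1", "component", own_health=0.9)
rack = TwinNode("rack-42", "asset", children=[fan, psu])
chiller = TwinNode("chiller-1", "asset", own_health=0.8)
data_center = TwinNode("dc-east", "system", children=[rack, chiller])

system_health = data_center.health()  # driven by the degraded fan
```

A minimum is deliberately pessimistic; it makes a single degraded component visible at the system level, which suits early-warning use cases but would be too coarse for capacity planning.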

How digital twins work: The real-time intelligence engine

Digital twins operate through a tightly integrated loop of data capture, modeling, simulation, and continuous synchronization:

Data acquisition and IoT integration: Digital twins begin with continuous, high-resolution data collection from the physical environment. Sensors, IoT devices, APIs, and telemetry agents capture metrics such as temperature, vibration, pressure, power consumption, throughput, and network latency. In IT operations, this includes device telemetry, log streams, SNMP traps, cloud metrics, and application performance data.

This real-time data flow ensures that the twin is always up to date, allowing it to reflect the current operating state and detect any deviation from expected behavior. Modern digital twin platforms integrate directly with IoT hubs, OT systems, and cloud-native observability stacks, creating a unified data foundation for accurate modeling.

Data processing and modeling: Once acquired, raw data is ingested into analytics pipelines where it is cleaned, normalized, and correlated. Machine learning models classify patterns, detect anomalies, and learn system behavior over time.

This processed data fuels the construction of the digital representation — whether it's a component, system, or process-level twin. Dependencies, workflows, and interaction patterns are modeled using graph engines, time-series analysis, and physics-based simulations, depending on the environment.

The result is a dynamic, high-fidelity digital replica that mirrors real-world performance and evolves as conditions change.

Simulation and visualization: Digital twins enable powerful scenario testing before making real-world changes. Through advanced visual interfaces, including 2D/3D dashboards, topology maps, and predictive analytics views, operations teams and CXOs can simulate the impact of scaling workloads, modifying network paths, changing infrastructure configurations, or introducing new variables.

These simulations help predict failures, evaluate capacity planning decisions, test resilience under stress, and model the downstream effects of operational choices. The ability to “see” the future, rather than react to it, is one of the defining advantages of digital twin technology.

Feedback and control loops: The final stage closes the loop between the physical and digital environments. Insights, alerts, and predictions generated by the digital twin can trigger automated actions in the real world, from adjusting device parameters and modifying workloads to initiating failover processes or optimizing resource allocation.

In IT environments, this can integrate with orchestration systems, AIOps engines, and CI/CD pipelines, enabling automated remediation or intelligent decision support.

This continuous feedback loop creates a self-optimizing ecosystem where physical operations improve through real-time digital intelligence.
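The simulate-then-act pattern described above can be sketched in a few lines of Python. Everything here is a deliberately simplified assumption: the linear thermal model, the constants `AMBIENT_C`, `C_PER_KW`, and `LIMIT_C`, and the function names are invented for illustration and do not describe any particular platform or real cooling physics.

```python
from typing import Dict, Tuple

AMBIENT_C = 20.0   # assumed supply-air temperature
C_PER_KW = 0.5     # assumed degrees added per kW of heat the cooling cannot remove
LIMIT_C = 27.0     # assumed safe inlet-temperature limit


def predict_inlet_temp(load_kw: float, cooling_kw: float) -> float:
    """Toy model: only heat in excess of cooling capacity raises temperature."""
    excess_kw = max(0.0, load_kw - cooling_kw)
    return AMBIENT_C + C_PER_KW * excess_kw


def evaluate_scenarios(
    load_kw: float, cooling_kw: float, extra_load_kw: Dict[str, float]
) -> Dict[str, Tuple[float, bool]]:
    """Simulation step: predicted temperature and a safe/unsafe flag per scenario."""
    results = {}
    for name, extra in extra_load_kw.items():
        temp = predict_inlet_temp(load_kw + extra, cooling_kw)
        results[name] = (temp, temp <= LIMIT_C)
    return results


def cooling_setpoint_for(load_kw: float) -> float:
    """Feedback step: smallest cooling capacity that keeps the model at the limit."""
    allowed_excess_kw = (LIMIT_C - AMBIENT_C) / C_PER_KW
    return max(0.0, load_kw - allowed_excess_kw)


# Current state mirrored from telemetry: 40 kW of load, 45 kW of cooling.
scenarios = evaluate_scenarios(
    40.0, 45.0, {"add_4_servers": 4.0, "add_full_rack": 25.0}
)
# The unsafe scenario yields a recommended setpoint to send back to operations.
recommended_cooling_kw = cooling_setpoint_for(40.0 + 25.0)
```

Under these assumptions, adding four servers stays within the limit, while adding a full rack does not unless cooling is raised to the recommended setpoint first. In a real deployment that recommendation would go to an orchestration or building-management system, ideally with human approval in early stages.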

Digital twin vs. simulation: Key differences

Before investing in digital twins, many leaders compare them with traditional simulation tools. While both help organizations model and test scenarios, they operate at fundamentally different levels of intelligence, responsiveness, and real-world integration.

| Feature | Digital twin | Simulation |
|---|---|---|
| Primary goal | Real-time operational intelligence, prediction, and continuous optimization. | Analyze hypothetical scenarios or test outcomes based on fixed input parameters. |
| What it represents | A dynamic, always-synchronized virtual replica of a physical system, asset, or process. | A static or semi-static model built to test specific scenarios or assumptions. |
| Connection to the real world | Continuous, two-way data exchange with the physical environment through sensors, telemetry, and automation. | No real-time connection; simulations operate on pre-defined datasets without ongoing feedback. |
| Data flow | Real-time, high-frequency, bidirectional data. | One-way batch data input. |
| Function | Predictive insights, anomaly detection, scenario evaluation, automated or semi-automated corrective actions. | Experimental analysis: tests “what-if” conditions without affecting live operations. |

Why digital twins matter for CXOs: Strategic benefits and real-world impact

As enterprises scale and their ecosystems grow more interconnected, operational complexity increases and blind spots multiply. Digital twins help CXOs shift from reactive firefighting to proactive, intelligence-driven decision-making across infrastructure, operations, and long-term strategy.

Predictive maintenance and reliability engineering: Digital twins continuously monitor assets and detect anomalies early, enabling teams to forecast failures and optimize maintenance cycles. For example, telecom providers use digital twins of network nodes to predict fiber degradation, significantly reducing unplanned outages and improving service reliability.

Data-driven infrastructure and capacity planning: With real-time modeling, organizations can simulate capacity needs for cloud environments, data centers, and enterprise assets before committing resources. Hyperscalers already use digital twins to test cooling and power adjustments ahead of deploying new server clusters, minimizing risks and ensuring efficient scaling.

Operational efficiency and cost optimization: By comparing real-time performance against ideal baselines, digital twins uncover inefficiencies in compute usage, energy consumption, logistics, and industrial processes. Manufacturing plants leverage process twins to optimize throughput, lowering energy costs and reducing cycle times across production lines.

Accelerated innovation and design validation: Teams can virtually experiment with new features, architectural changes, or infrastructure layouts without risking downtime or unnecessary spend. Smart city initiatives apply digital twins to simulate traffic flows, utility loads, and emergency responses, validating designs before physical rollout.

Enhanced risk management and scenario planning: Digital twins allow leaders to stress-test operations against disruptions such as supply-chain breaks, system failures, cyberattacks, or extreme weather. Financial institutions use digital twins of core IT environments to simulate cyber breach propagation and refine response strategies, strengthening resilience.
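As one illustration of the predictive maintenance benefit listed above, the sketch below fits a straight line to a degradation metric and estimates how long until it crosses a maintenance threshold. The function name, the sample values, and the assumption that degradation is roughly linear are all hypothetical; production systems typically use richer models and report uncertainty alongside the estimate.

```python
from typing import Optional, Sequence


def hours_until_threshold(
    hours: Sequence[float], values: Sequence[float], threshold: float
) -> Optional[float]:
    """Least-squares line through (hours, values); time left until threshold.

    Returns None if the metric is not trending upward, and 0.0 if the
    fitted line has already crossed the threshold.
    """
    n = len(hours)
    mean_x = sum(hours) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in hours)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(hours, values))
    slope = sxy / sxx
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    crossing_hour = (threshold - intercept) / slope
    return max(0.0, crossing_hour - hours[-1])


# Hypothetical vibration readings (mm/s) sampled once a day for four days.
sample_hours = [0.0, 24.0, 48.0, 72.0]
vibration = [2.0, 2.5, 3.0, 3.5]
remaining = hours_until_threshold(sample_hours, vibration, threshold=5.0)
```

With these made-up readings the metric rises by 0.5 mm/s per day, so the estimate comes out at roughly 72 hours, which is the kind of lead time a maintenance scheduler could act on.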

Implementation challenges: What CXOs should keep in mind

Despite their transformative potential, digital twins come with practical adoption hurdles:

Data fragmentation and reliability: Twins are only as accurate as the data feeding them. Many enterprises still struggle with siloed telemetry, inconsistent log quality, and gaps across IT/OT systems.

Upfront complexity: Creating high-fidelity models requires domain expertise, strong analytics capabilities, and alignment across engineering, operations, and IT teams. This makes early-stage implementation resource-intensive.

Scaling difficulty: As twins move from single assets to system-wide replicas, data volumes, compute demands, and model maintenance requirements increase sharply.

Skill and process gaps: Organizations often lack unified governance, cross-functional workflows, and teams with the right blend of operational and analytical skills.

Roadmap to adoption: A practical path for enterprises

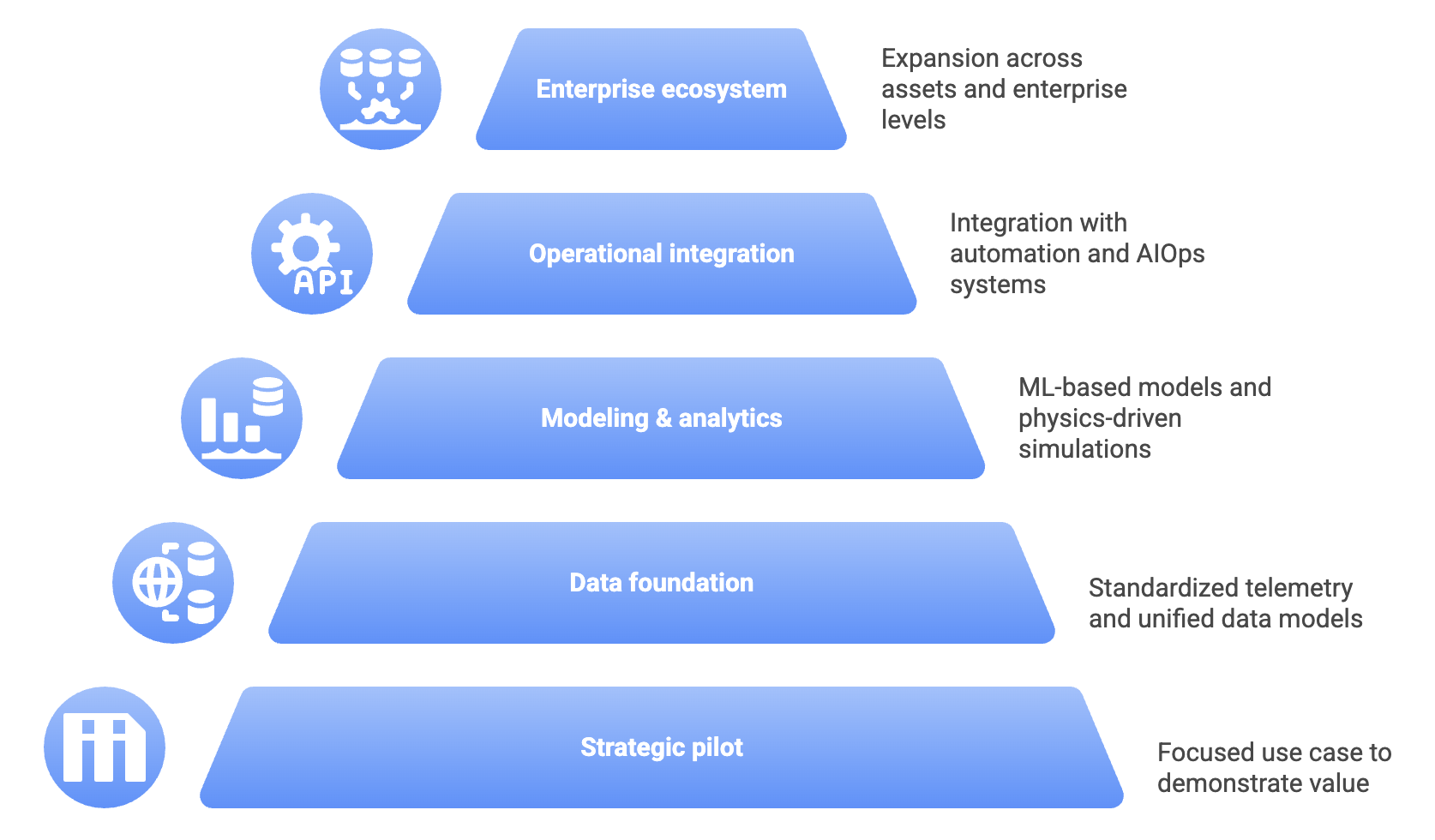

Organizations can unlock the value of digital twins with a phased, deliberate strategy:

Begin with a strategic pilot: Select a focused, high-visibility use case, such as a critical asset or process, to demonstrate measurable value and validate feasibility.

Strengthen the data foundation: Prioritize telemetry standardization, unified data models, and integration across sensors, IT systems, and observability tools to ensure high-quality real-time inputs.

Introduce modeling and analytics incrementally: Deploy ML-based behavior models, physics-driven simulations, and dependency mapping as data maturity grows.

Connect the twin to operational systems: Integrate with automation platforms, orchestration tools, or AIOps systems to close the insight-to-action loop.

Scale into an enterprise ecosystem: Expand horizontally across assets and vertically into process and enterprise-level twins, ultimately building a cohesive intelligence layer across the organization.

Future trends: The evolution toward autonomous digital twins

Digital twin technology is accelerating rapidly, and the coming decade will see several transformative shifts:

AI-native autonomous twins: Twins that self-learn, self-correct, and execute optimization actions without human intervention.

Convergence with generative AI: GenAI models generating synthetic scenarios, designing new infrastructure blueprints, or rewriting workflows.

Digital twin as a service (DTaaS): Cloud providers and specialized vendors will offer subscription-based, pre-built DT platforms for common IT services, democratizing access and reducing the high initial barrier to entry.

Enterprise-wide twin ecosystems: Organizations will unify process, asset, and system twins into complete enterprise intelligence platforms.

Twin-driven sustainability: Modeling carbon footprints, energy use, and waste to drive ESG-aligned operational strategies.

Digital twins are quickly evolving from experimental technologies into core enablers of modern enterprise strategy. For CXOs, they offer a powerful way to unify real-time data, simulation, and automation into a single decision-making engine that spans both physical and digital operations. Whether it's reducing downtime, optimizing infrastructure spend, accelerating innovation, or strengthening resilience, digital twins transform how organizations understand and manage complexity at scale.

As the technology matures and integrates more deeply with AI, automation, and next-generation cloud platforms, digital twins will shift from being operational tools to becoming strategic systems of intelligence. Organizations that invest early in this capability will be better positioned to anticipate risks, adapt to change, and build continuously optimized, data-driven enterprises ready for the next wave of digital transformation.