When the internet “forgets” you: Inside the Cloudflare outage

Summary

This article covers the Feb. 20, 2026 Cloudflare outage caused by a BGP routing misconfiguration that made services globally unreachable despite healthy infrastructure. It explains why routing visibility, diversified edge strategies, and control plane monitoring are essential for enterprise resilience.

On Feb. 20, 2026, many IT teams faced one of the most frustrating incident scenarios imaginable:

Everything inside their environment looked perfectly healthy. Hours of troubleshooting showed that there were no CPU spikes, storage failures, application crashes, or DDoS alerts.

Yet users across the world could not connect.

The root cause was a large-scale routing incident involving Cloudflare that made affected services unreachable for roughly six hours.

So what caused this issue?

Let's dive in.

The immediate cause: Disappearing routes

Cloudflare confirmed that the outage was due to a configuration change that caused the unintentional withdrawal of customer routes advertised through BGP, particularly for customers using Bring Your Own IP.

But to understand why this is catastrophic, we first need to understand how the internet finds anything at all.

How the internet knows where you are

The internet is not a single network but a federation of thousands of independent networks called autonomous systems (ASes). The protocol that connects them is the Border Gateway Protocol (BGP).

Think of BGP as the internet’s navigation system:

Each network announces the IP ranges it owns.

Other networks learn paths to reach them.

Traffic follows the best known path.

A typical announcement might look like:

203.0.113.0/24 is reachable via AS13335 (Cloudflare)

Routers worldwide store these announcements in routing tables. When a user sends a packet, routers consult those tables to decide where to forward it. If a route is found for the packet's destination, the router forwards it there. If there is no route, then there is no path, and the router drops the packet.
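The forwarding decision described above can be sketched in a few lines. This is a simplified illustration rather than how any production router is implemented: the `ROUTES` table, the `AS64500` and `AS64501` labels, and the `lookup` and `forward` helpers are hypothetical names, and the prefixes come from the address ranges reserved for documentation.

```python
import ipaddress

# Hypothetical routing table: announced prefix -> where to send matching traffic.
ROUTES = {
    ipaddress.ip_network("203.0.113.0/24"): "AS13335",
    ipaddress.ip_network("198.51.100.0/24"): "AS64500",
    ipaddress.ip_network("198.51.100.128/25"): "AS64501",
}


def lookup(routes, destination):
    """Return the next hop for the most specific matching prefix, or None."""
    addr = ipaddress.ip_address(destination)
    matches = [net for net in routes if addr in net]
    if not matches:
        return None  # no route means no path
    # Longest-prefix match: the most specific announcement wins.
    best = max(matches, key=lambda net: net.prefixlen)
    return routes[best]


def forward(routes, destination):
    """Describe what a router would do with a packet for this destination."""
    next_hop = lookup(routes, destination)
    if next_hop is None:
        return "drop (no route)"
    return f"forward via {next_hop}"


print(forward(ROUTES, "203.0.113.10"))
print(forward(ROUTES, "198.51.100.200"))
print(forward(ROUTES, "192.0.2.1"))
```

The third lookup is the interesting one: the destination may be perfectly healthy, but with no matching prefix in the table the packet has nowhere to go.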

In the case of Cloudflare: Customers delegate their IP ranges to Cloudflare, which then advertises these prefixes globally from its network via BGP.

Traffic flows like this:

Customer IP > Announced by Cloudflare > Internet routes to Cloudflare > Cloudflare forwards to origin

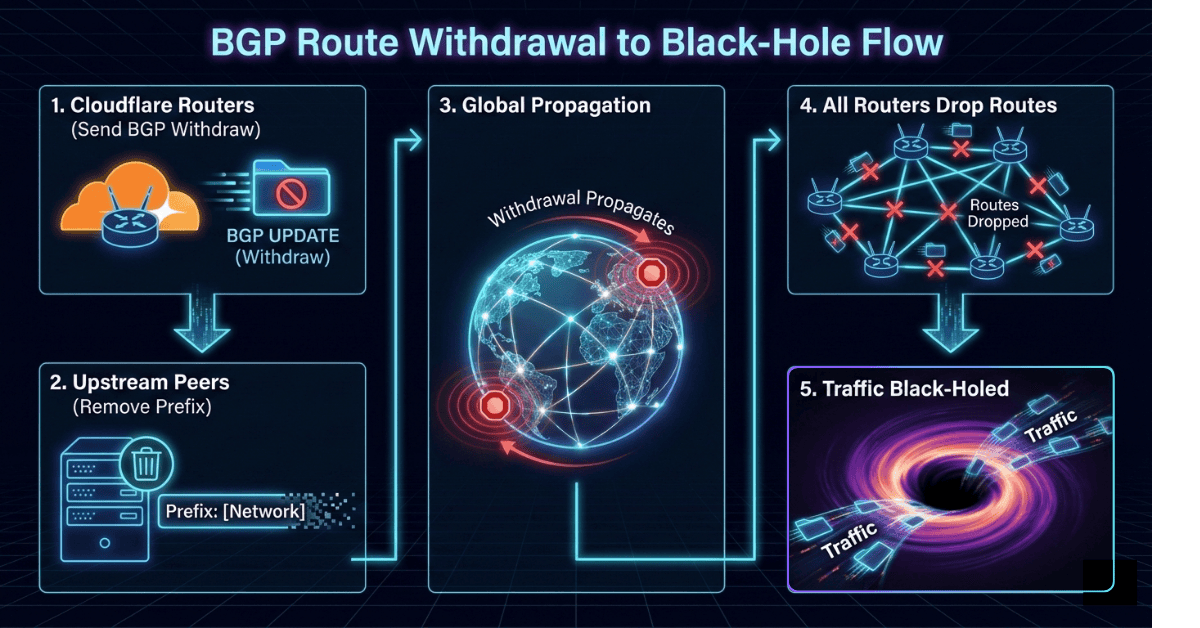

What happens when BGP announcements are withdrawn

The root cause of the Cloudflare outage was that Cloudflare unintentionally withdrew its customers' routes, and their BGP announcements disappeared from the internet.

From the internet’s perspective, those networks no longer existed.
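To make the effect of a withdrawal concrete, here is a minimal sketch of one remote network's view. The `RemoteRouter` class and its method names are hypothetical, and the model is deliberately simplified: a real BGP speaker tracks paths per peer and only loses a prefix once the last path to it is withdrawn.

```python
import ipaddress


class RemoteRouter:
    """Hypothetical model of one remote network's table of announced prefixes."""

    def __init__(self):
        self.table = {}

    def on_announce(self, prefix, origin_as):
        # Learn (or refresh) a route to the prefix.
        self.table[ipaddress.ip_network(prefix)] = origin_as

    def on_withdraw(self, prefix):
        # Forget the route; withdrawing an unknown prefix is a no-op.
        self.table.pop(ipaddress.ip_network(prefix), None)

    def can_reach(self, destination):
        addr = ipaddress.ip_address(destination)
        return any(addr in net for net in self.table)


router = RemoteRouter()
router.on_announce("203.0.113.0/24", "AS13335")
before = router.can_reach("203.0.113.10")

router.on_withdraw("203.0.113.0/24")
after = router.can_reach("203.0.113.10")

print(before, after)
```

Nothing about the destination host changes between the two checks. Only the announcement does.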

Why services were completely unreachable and not just slow

In many outages, the nature of the impact points to the root cause. For instance:

| Failure type | Result |
|---|---|
| Server overload | Slow response of applications |
| Packet loss | Intermittent connectivity and communication issues |
| DNS failure | Domain name resolution problems |

Here, because the BGP routes were withdrawn, affected services were not just slow. They were completely invisible to the internet, and packets could not reach them.

Reports described services as unreachable from the internet, reflecting a true Layer 3 connectivity loss rather than application failure.

Why the outage spread worldwide so quickly

BGP operates at internet scale. When a major provider changes route advertisements, updates propagate rapidly across thousands of networks.

Cloudflare operates a massive Anycast network:

Hundreds of points of presence

Thousands of BGP peers

Highly automated route control

Automation pushes routing changes fleet-wide.

If a faulty configuration or policy triggers a withdrawal, every edge router may stop advertising the prefix simultaneously.

This creates a synchronized global outage.
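The sketch below illustrates why a fleet-wide push removes the usual safety net of redundancy. It is a toy model under stated assumptions: the `FLEET` layout, the point-of-presence names, and `faulty_policy` are hypothetical, and the actual defect in the incident is not modeled here.

```python
# Hypothetical fleet: each point of presence is configured to advertise the same prefixes.
FLEET = {
    "pop-a": {"203.0.113.0/24", "198.51.100.0/24"},
    "pop-b": {"203.0.113.0/24", "198.51.100.0/24"},
    "pop-c": {"203.0.113.0/24", "198.51.100.0/24"},
}


def push_policy(fleet, policy):
    """Apply one export policy to every point of presence at once."""
    return {
        pop: {prefix for prefix in prefixes if policy(prefix)}
        for pop, prefixes in fleet.items()
    }


def globally_advertised(advertised):
    """Prefixes still advertised by at least one point of presence."""
    return set().union(*advertised.values())


def good_policy(prefix):
    return True  # advertise everything that is configured


def faulty_policy(prefix):
    # Hypothetical bug: the policy wrongly filters out one customer prefix.
    return prefix != "203.0.113.0/24"


print(sorted(globally_advertised(push_policy(FLEET, good_policy))))
print(sorted(globally_advertised(push_policy(FLEET, faulty_policy))))
```

Three locations advertising the same prefix look redundant, but because all three receive the same policy at the same time, one mistake removes the prefix everywhere.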

Why recovery took hours

Reversing a routing failure is not as simple as flipping a switch.

Restoration requires:

Identifying missing prefixes

Correcting the configuration or automation issue

Re-advertising routes

Waiting for global convergence

Verifying stability

Even though BGP updates propagate quickly, cautious staged restoration is necessary to avoid flapping or new instability.
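A staged restoration of this kind might be structured as follows. This is a sketch of the general idea, not Cloudflare's procedure: `restore_in_stages`, the `advertise` and `is_stable` callbacks, and the batch size are all assumptions made for illustration.

```python
def restore_in_stages(missing, batch_size, advertise, is_stable):
    """Re-advertise prefixes in small batches, halting at the first unstable batch.

    `advertise` and `is_stable` are hypothetical callbacks standing in for
    whatever tooling announces a prefix and confirms it has converged.
    Returns (restored, pending).
    """
    restored = []
    for start in range(0, len(missing), batch_size):
        batch = missing[start:start + batch_size]
        for prefix in batch:
            advertise(prefix)
        if not all(is_stable(prefix) for prefix in batch):
            # Stop here so an unstable batch is investigated before going further.
            return restored, missing[start:]
        restored.extend(batch)
    return restored, []


# Illustrative run with stand-in callbacks and documentation prefixes.
announced = []
unstable = {"198.51.100.0/24"}
prefixes = [
    "192.0.2.0/25",
    "192.0.2.128/25",
    "198.51.100.0/24",
    "203.0.113.0/25",
    "203.0.113.128/25",
]

restored, pending = restore_in_stages(
    prefixes,
    batch_size=2,
    advertise=announced.append,
    is_stable=lambda prefix: prefix not in unstable,
)
print(restored)
print(pending)
```

Halting on the first unstable batch trades speed for safety, which is one reason recovery can take far longer than the original withdrawal did.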

What this means for modern enterprise resilience

This incident highlights a hard truth:

Your availability depends on more than your infrastructure. It depends on global routing correctness.

Organizations can invest heavily in redundancy, failover, and uptime, yet they will still disappear from the internet if the network path to them collapses upstream.

The case for a multi-CDN and multi-provider strategy

Relying on a single edge provider creates a single routing dependency. Failures at the control plane level can bypass traditional redundancy even if the provider is highly reliable. A robust strategy must include:

Multi-CDN deployment: Using multiple providers ensures alternative routing paths, independent control planes, and reduced systemic risk. Traffic steering mechanisms such as DNS, Anycast management, or application-level routing can shift users when one provider fails.

Multi-origin architecture: Separating origin infrastructure across networks prevents cascading failures when edge connectivity breaks.

Independent IP announcements: Where possible, enterprises can maintain the ability to advertise prefixes through alternate providers.
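As a rough illustration of traffic steering across providers, the sketch below picks the first healthy provider from an ordered preference list. The provider names and the `steer` function are hypothetical. In practice the health signal would come from external probes, and the result would feed a DNS or application-level routing decision.

```python
def steer(preferences, healthy):
    """Return the first provider in preference order that is currently healthy.

    `preferences` is an ordered list of provider names; `healthy` maps a
    provider name to the latest external health check result. Providers
    with no health data are treated as unhealthy.
    """
    for provider in preferences:
        if healthy.get(provider, False):
            return provider
    return None  # nothing is reachable: escalate instead of guessing


PREFERENCES = ["cdn-a", "cdn-b"]  # hypothetical provider names

print(steer(PREFERENCES, {"cdn-a": True, "cdn-b": True}))
print(steer(PREFERENCES, {"cdn-a": False, "cdn-b": True}))
print(steer(PREFERENCES, {"cdn-a": False, "cdn-b": False}))
```

Treating a provider with no health data as unhealthy is a conservative design choice: when the routes to a provider disappear, its health checks may simply stop reporting rather than report a failure.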

How does full-stack observability help here?

Most monitoring tools focus on server health, application performance, and end-device availability. They rarely correlate those signals with events on the control plane, yet routing failures occur below the layers they watch.

When packets cannot reach your network, internal telemetry may show everything as healthy. Advanced observability closes that gap with:

External reachability monitoring: Synthetic probes from multiple global vantage points can detect loss of connectivity even when internal systems appear normal.

Network-level visibility: Flow telemetry, path analysis, and routing intelligence reveal anomalies in traffic patterns and route changes.

Control plane monitoring: Tracking BGP announcements and prefix visibility provides an early warning when your network begins to disappear.
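The sketch below shows how these signals might be combined to separate a routing problem from an internal one. It is illustrative only: the `visibility` and `diagnose` functions, the vantage point and peer names, and both thresholds are assumptions, and the inputs would come from whatever probing and route-monitoring tooling is in use.

```python
def visibility(seen_by_peer):
    """Fraction of monitored peers that currently carry a route to the prefix."""
    if not seen_by_peer:
        return 0.0
    return sum(seen_by_peer.values()) / len(seen_by_peer)


def diagnose(internal_healthy, probe_ok, seen_by_peer,
             min_probe_success=0.5, min_visibility=0.9):
    """Combine internal health, external probes, and prefix visibility.

    The thresholds are illustrative assumptions, not recommended values.
    """
    probe_rate = sum(probe_ok.values()) / len(probe_ok) if probe_ok else 0.0
    if probe_rate >= min_probe_success:
        return "reachable"
    if visibility(seen_by_peer) < min_visibility:
        return "unreachable: prefix visibility dropped (suspect routing)"
    if not internal_healthy:
        return "unreachable: internal fault"
    return "unreachable: cause unknown (check path and upstream)"


# The scenario from this incident: internal systems healthy, users cannot connect.
probes = {"eu-west": False, "us-east": False, "ap-south": False}
peers = {"peer-1": False, "peer-2": False, "peer-3": True, "peer-4": False}

print(diagnose(internal_healthy=True, probe_ok=probes, seen_by_peer=peers))
```

With only internal telemetry, this scenario looks like a healthy system. Adding the external probes and the visibility check is what points the investigation at routing.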

The strategic takeaway for CXOs

This outage underscores a broader reality:

Modern digital services depend on a chain of trust and connectivity across multiple independent systems. Break the chain at the routing layer, and everything above it becomes irrelevant.

Resilience is no longer just about uptime inside your data center or cloud account. It is about survivability across the global internet fabric.

For CXOs, the lesson is clear:

Infrastructure redundancy alone is insufficient.

Edge dependency must be diversified.

Observability must extend to the network control plane.

Reachability is a distinct reliability domain.
