Introduction

With the adoption of the cloud increasing each year, securing resources on cloud platforms from attacks is something security admins deal with each day. Because many organizations have some or all of their business-critical applications on the cloud, attacks are becoming more common. One of the biggest data breaches of 2019, the Capital One breach, happened on the Amazon Web Services (AWS) platform. The attacker exploited a misconfigured web application firewall to access the Social Security numbers, credit scores, and credit card transaction data of more than 100 million customers. The breach is estimated to have cost Capital One over $300 million in damages.

As the Capital One data breach has shown, a misconfiguration of AWS settings could prove disastrous for any organization. In the shared responsibility model adopted by AWS, security is handled jointly by the customer and AWS. AWS takes responsibility for security of the cloud, meaning the infrastructure that runs its storage, database, and compute services. Security in the cloud, which includes IAM, network, and firewall configurations, falls under the responsibility of the customer. This underlines the fact that the cloud is only as secure as it is configured.

This e-book provides an overview of the configurations and best practices that can help you defend against attacks, and also offers a few key tips on eliminating security loopholes.

The security architecture of AWS

Understanding the security architecture of AWS is essential for effectively deploying the tools AWS offers to secure the virtual network integrating your on-premises and AWS resources.

Network access control lists

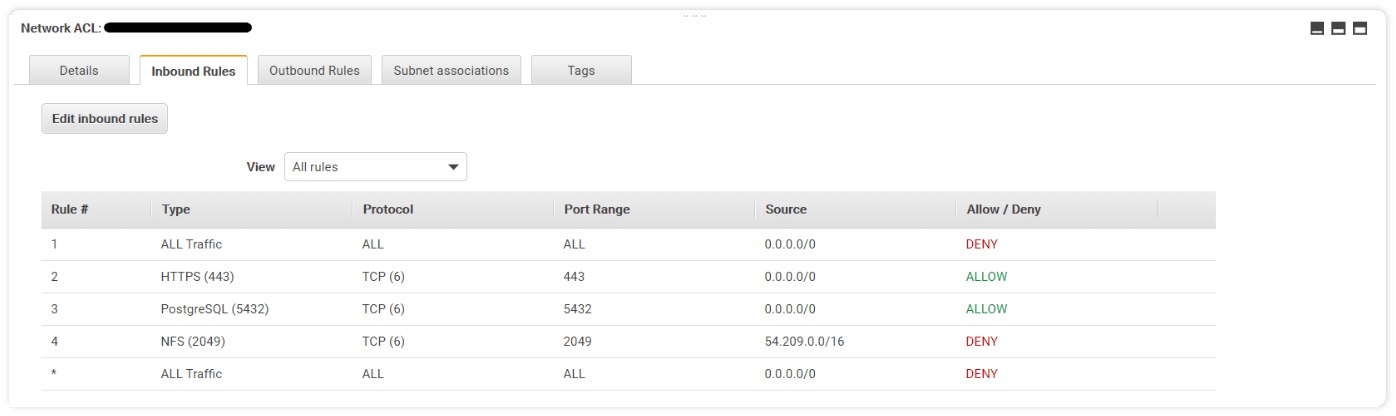

Network access control lists (NACLs) help regulate traffic in and out of each subnet. Having an NACL with a set of granular rules ensures only the authorized traffic flows between subnets. NACLs operate with a list of numbered rules. By default, all custom NACLs deny inbound and outbound traffic. You can add specific rules to allow only the required traffic in and out of the subnet.

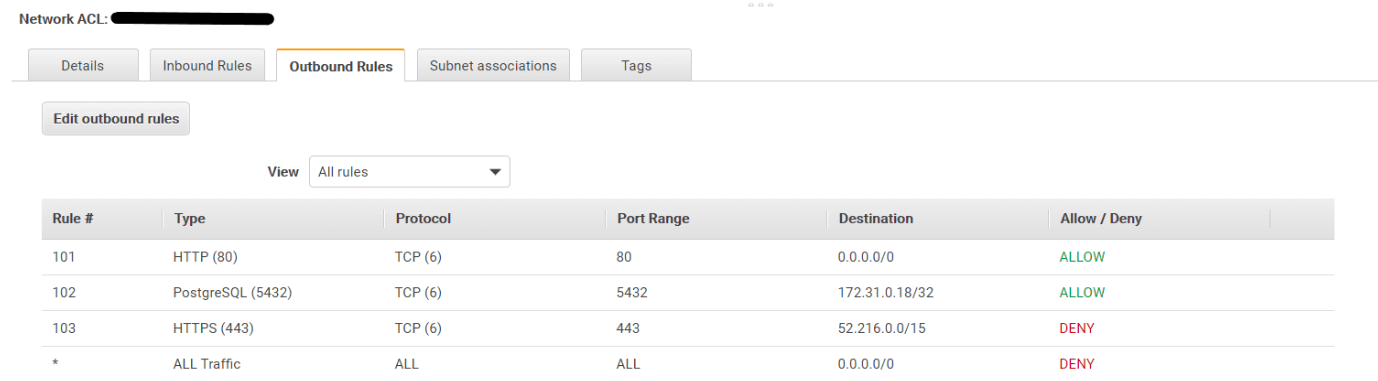

The image above shows an example of how configured rules work in NACLs.

The last rule states that all outbound traffic is denied except for the traffic allowed by the rules above it. This rule is present by default when you create an NACL. Rule 103 states that HTTPS traffic is specifically denied for IPs that fall within the 52.216.0.0/15 range. Rule 102 states that port 5432, used to access PostgreSQL, is allowed for the single IP address 172.31.0.10/32. Similarly, rule 101 states that HTTP access using port 80 is allowed for any IP.
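To make the rule logic concrete, here is a minimal Python sketch of how an NACL picks an action. NACL rules are evaluated in ascending order of rule number, and the first rule that matches the traffic is applied. The NaclRule class and the evaluate function are hypothetical names invented for this illustration; they are not part of any AWS SDK, and the sketch matches only on address and port.

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class NaclRule:
    number: int           # lower numbers are evaluated first
    cidr: str             # destination range, since these are outbound rules
    port: Optional[int]   # None means "all ports"
    action: str           # "allow" or "deny"


# Outbound rules mirroring the example described above.
OUTBOUND_RULES = [
    NaclRule(101, "0.0.0.0/0", 80, "allow"),        # HTTP to any IP
    NaclRule(102, "172.31.0.10/32", 5432, "allow"),  # PostgreSQL to one host
    NaclRule(103, "52.216.0.0/15", 443, "deny"),     # HTTPS to this range
]


def evaluate(rules, ip, port):
    """Return the action of the first matching rule, else the default deny."""
    address = ipaddress.ip_address(ip)
    for rule in sorted(rules, key=lambda r: r.number):
        in_range = address in ipaddress.ip_network(rule.cidr)
        port_matches = rule.port is None or rule.port == port
        if in_range and port_matches:
            return rule.action
    return "deny"  # stands in for the catch-all "*" rule


print(evaluate(OUTBOUND_RULES, "172.31.0.10", 5432))  # allow
print(evaluate(OUTBOUND_RULES, "52.216.1.1", 443))    # deny
```

Traffic that matches none of the numbered rules, such as SSH on port 22, falls through to the final deny.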

Virtual private cloud and subnets

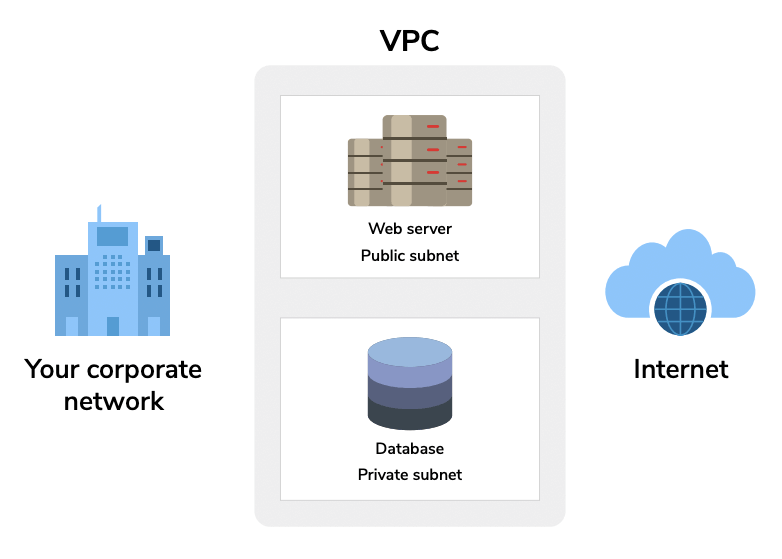

A virtual private cloud (VPC) protects your Amazon Elastic Compute Cloud (EC2) instances from direct access via the internet. With the VPC, you can set rules to restrict the traffic flowing in and out of your virtual network. By default, when a VPC is first created for your account, it includes a public subnet that allows inbound and outbound traffic. It is advisable to change this, and create a public and a private subnet within your VPC. For instance, you would want the web server to be accessible to the internet, but not the databases. One way to achieve this is to place the web server in a public subnet, and the databases in a private subnet within your VPC.

This way, a VPC also enables you to have logical partitions between the different kinds of resources you have in one cloud platform. By default, a single AWS account allows the creation of up to five VPCs per Region. In addition to a web server and a database server, if you want to use AWS for unstructured object storage, like images or Word files, you could create another VPC with only a private subnet. This will ensure that the data you store within the VPC stays isolated from the internet and is accessible only to your organization. Partitioning the resources in your AWS account, based on the traffic you would prefer to allow or deny, brings more structure to your cloud operations. This will also ensure that the data that should be accessible via the internet is easily accessible, while the data that is critical to your operations remains isolated from the internet and private to your organization.
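As a rough illustration of the public and private split described above, the sketch below carves two subnets out of a VPC address range using Python's standard ipaddress module. The 10.0.0.0/16 range, the /24 subnet size, and the plan_subnets helper are assumptions made for this example. What makes a subnet public in practice is a route to an internet gateway, which the sketch records as a simple flag.

```python
import ipaddress


def plan_subnets(vpc_cidr, new_prefix=24):
    """Split a VPC CIDR block and label the first two subnets."""
    vpc = ipaddress.ip_network(vpc_cidr)
    subnets = vpc.subnets(new_prefix=new_prefix)  # generator of subnets
    public = next(subnets)
    private = next(subnets)
    return {
        # Public subnet: has a route to the internet (web server).
        "public": {"cidr": str(public), "internet_route": True},
        # Private subnet: no direct route to the internet (databases).
        "private": {"cidr": str(private), "internet_route": False},
    }


plan = plan_subnets("10.0.0.0/16")  # assumed example range
print(plan["public"]["cidr"])   # 10.0.0.0/24
print(plan["private"]["cidr"])  # 10.0.1.0/24
```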

Configurations to secure your AWS environment

Adopting critical security configurations can go a long way in fortifying vital applications in your cloud platforms. Here are some examples:

1 Allow Secure Shell (SSH) and Microsoft's Remote Desktop Protocol (RDP) access to only the administrators of a particular subnet. You could do this by defining the IP range in the source section of the NACL. In addition, you could also deny traffic from a particular source or a port.

It could prove disastrous if you accidentally set 0.0.0.0/0 as the source without defining the port range. For instance, consider a rule in which the traffic type is "All Traffic", the protocol and port range are set to "ALL", the source is 0.0.0.0/0, and the action is "ALLOW". This means that anyone across the internet can access your critical resources, like a database.

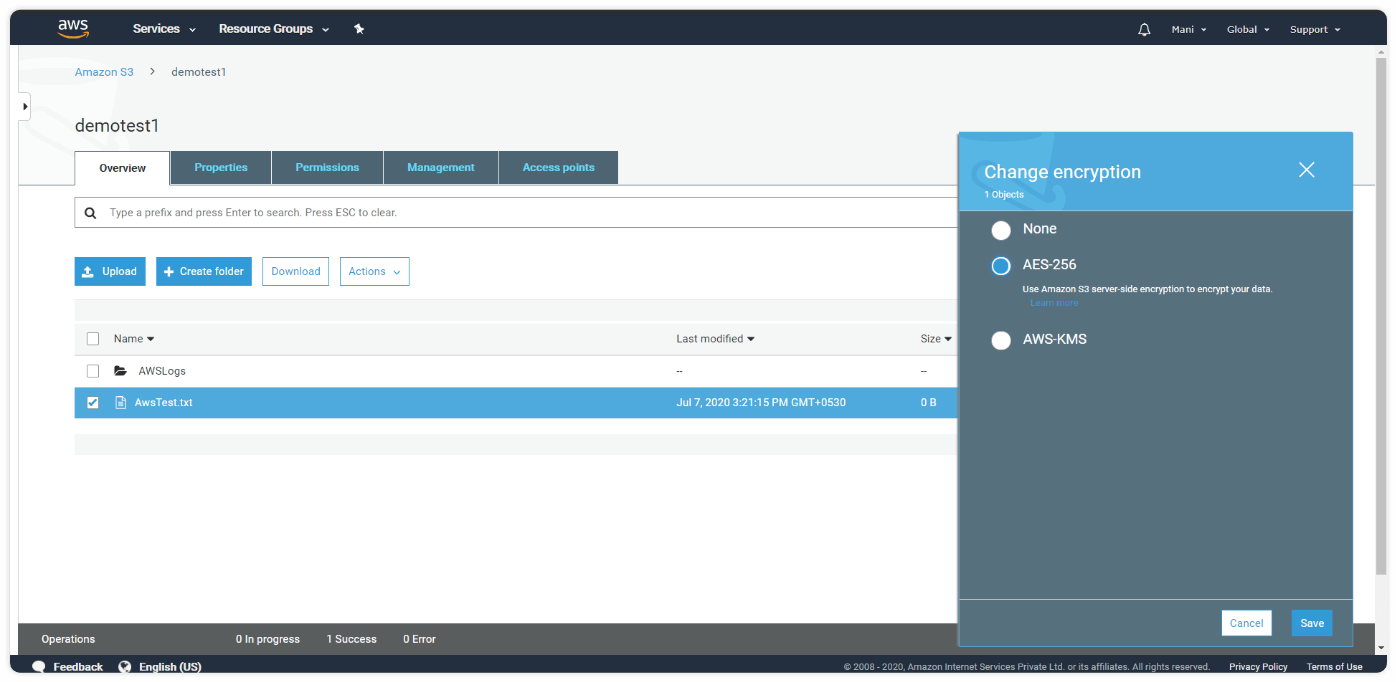
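A check for this kind of rule can be automated. The sketch below scans a list of NACL entries and reports inbound rules that allow every protocol from 0.0.0.0/0. The dictionary keys are modeled on the entries that boto3's describe_network_acls call returns; treat them as an assumption to verify against your SDK version. The find_open_rules function and the sample data are hypothetical.

```python
def find_open_rules(entries):
    """Return rule numbers of inbound entries that allow all traffic from anywhere."""
    risky = []
    for entry in entries:
        is_inbound = not entry.get("Egress", False)
        allows = entry.get("RuleAction") == "allow"
        from_anywhere = entry.get("CidrBlock") == "0.0.0.0/0"
        all_protocols = entry.get("Protocol") == "-1"  # "-1" means all protocols
        if is_inbound and allows and from_anywhere and all_protocols:
            risky.append(entry["RuleNumber"])
    return risky


# Hypothetical sample: rule 100 is the dangerous "allow everything" rule.
sample_entries = [
    {"RuleNumber": 100, "Protocol": "-1", "RuleAction": "allow",
     "Egress": False, "CidrBlock": "0.0.0.0/0"},
    {"RuleNumber": 110, "Protocol": "6", "RuleAction": "allow",
     "Egress": False, "CidrBlock": "203.0.113.0/24",
     "PortRange": {"From": 22, "To": 22}},
    {"RuleNumber": 32767, "Protocol": "-1", "RuleAction": "deny",
     "Egress": False, "CidrBlock": "0.0.0.0/0"},
]

print(find_open_rules(sample_entries))  # [100]
```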

2 Encrypt the objects in your Amazon Simple Storage Service (Amazon S3) bucket.

Encrypting the objects in your Amazon S3 bucket keeps your data protected even in case of a breach. Since January 2023, Amazon S3 encrypts all new objects by default with Amazon S3 managed keys (SSE-S3); objects uploaded before then may remain unencrypted. You can select a different option, such as AWS KMS keys, in the bucket's default encryption settings.

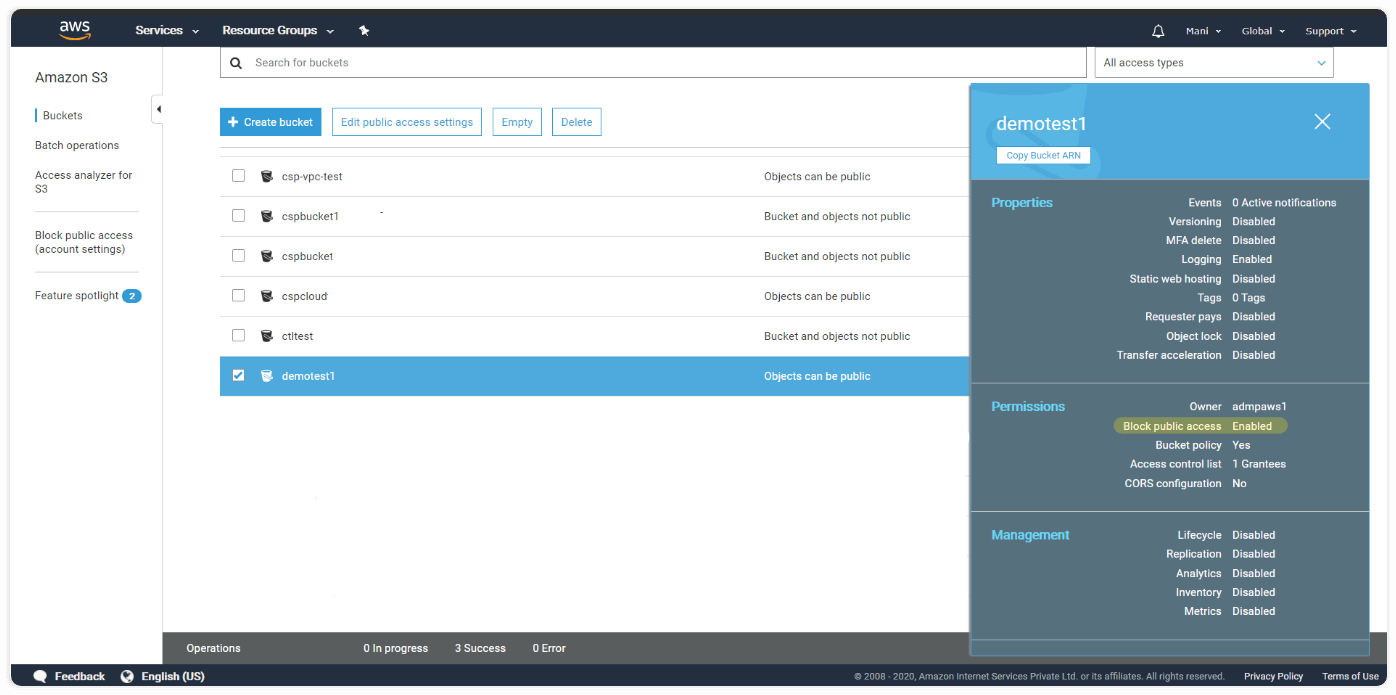
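Default encryption can also be set from code rather than the console. The following is a minimal sketch that assumes you pass in a boto3 S3 client; the enable_default_encryption helper and the bucket name are hypothetical. It uses the S3 managed key algorithm (AES256), which you could swap for aws:kms if you manage your own keys.

```python
def enable_default_encryption(s3_client, bucket_name):
    """Set SSE-S3 (AES-256) as the bucket's default encryption."""
    config = {
        "Rules": [
            {"ApplyServerSideEncryptionByDefault": {"SSEAlgorithm": "AES256"}}
        ]
    }
    # put_bucket_encryption is the boto3 S3 client call for this setting.
    s3_client.put_bucket_encryption(
        Bucket=bucket_name,
        ServerSideEncryptionConfiguration=config,
    )
    return config


# Usage, assuming boto3 is installed and credentials are configured:
#   import boto3
#   enable_default_encryption(boto3.client("s3"), "example-bucket")
```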

3 Set access to Amazon S3 buckets to private. Not setting the access to private is a common configuration error that results in confidential data being exposed.

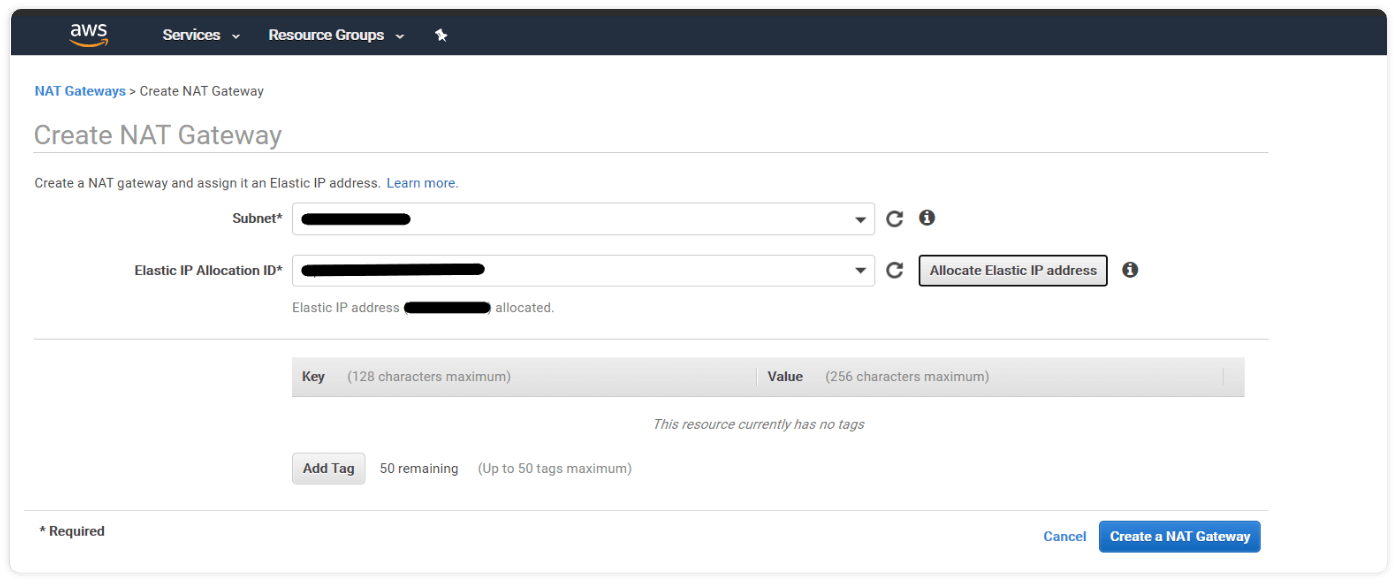
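One way to enforce private access from code is the S3 Block Public Access setting. The sketch below assumes a boto3 S3 client is passed in; the block_public_access helper and the bucket name are hypothetical. It turns on all four Block Public Access flags for a single bucket.

```python
def block_public_access(s3_client, bucket_name):
    """Turn on all four S3 Block Public Access settings for one bucket."""
    settings = {
        "BlockPublicAcls": True,        # reject new public ACLs
        "IgnorePublicAcls": True,       # ignore existing public ACLs
        "BlockPublicPolicy": True,      # reject new public bucket policies
        "RestrictPublicBuckets": True,  # restrict access granted by public policies
    }
    s3_client.put_public_access_block(
        Bucket=bucket_name,
        PublicAccessBlockConfiguration=settings,
    )
    return settings


# Usage, assuming boto3 is installed and credentials are configured:
#   import boto3
#   block_public_access(boto3.client("s3"), "example-bucket")
```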

4 Use Network Address Translation (NAT) gateways to provide secure internet access to resources in a private subnet. A NAT gateway lets these resources initiate outbound connections while preventing the internet from initiating connections to them. If you need internet access for critical resources such as Amazon DynamoDB, configuring a NAT gateway is considered one of the most secure ways to accomplish this.

5 In case you are using AWS for the development and testing of applications, ensure that you use separate VPCs for development, staging, and production.

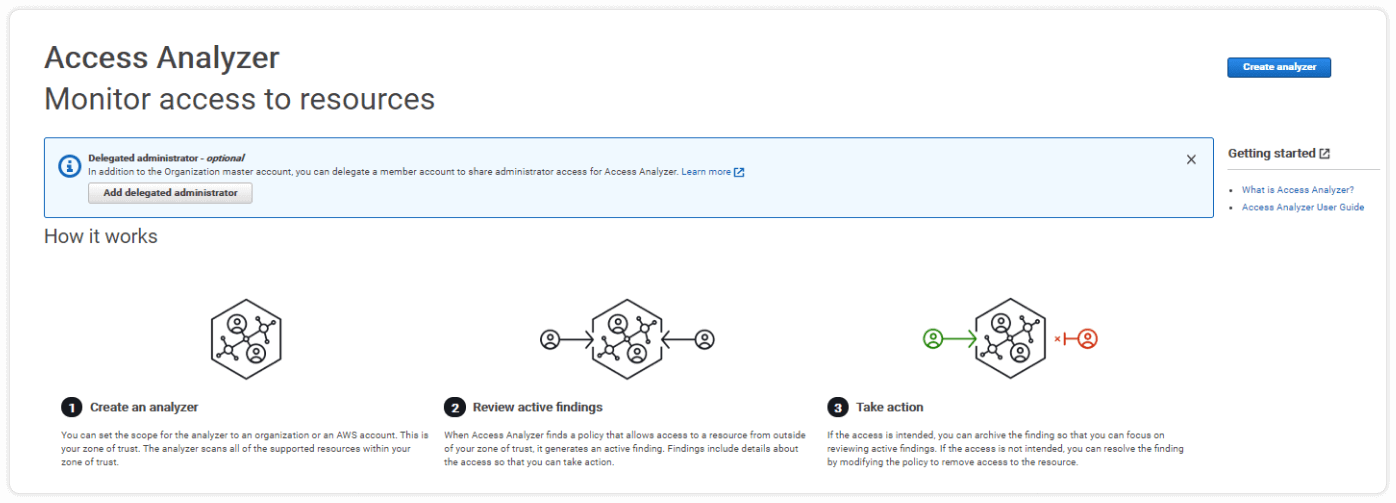

6 Ensure that the principle of least privilege is applied to identity and access management (IAM) roles. Excessive privileges for a user are a vulnerability. In the IAM console, you can check what actions a user could perform with their level of access. This can be a great tool to analyze and tweak permissions.
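A simple automated complement to the console check is to scan policy documents for statements that grant every action on every resource. The sketch below works on an IAM policy that has already been loaded as a Python dictionary; the find_wildcard_statements helper and the sample policy are hypothetical, and a real review would also need to consider narrower wildcards such as "s3:*".

```python
def find_wildcard_statements(policy):
    """Return the Allow statements that grant every action on every resource."""
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a policy may hold a single statement object
        statements = [statements]

    def as_list(value):
        # Action and Resource may each be a string or a list of strings.
        return value if isinstance(value, list) else [value]

    return [
        s for s in statements
        if s.get("Effect") == "Allow"
        and "*" in as_list(s.get("Action", []))
        and "*" in as_list(s.get("Resource", []))
    ]


# Hypothetical sample: the first statement violates least privilege.
sample_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Action": "*", "Resource": "*"},
        {"Effect": "Allow", "Action": ["s3:GetObject"],
         "Resource": "arn:aws:s3:::example-bucket/*"},
    ],
}

print(len(find_wildcard_statements(sample_policy)))  # 1
```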

7 Ensure that CloudTrail, which allows AWS customers to record application programming interface (API) calls and deliver the log files to Amazon S3 buckets for storage, is enabled. You could also integrate CloudTrail with Amazon CloudWatch to monitor events, resources, and customer applications in your AWS environment.
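Whether CloudTrail is actually logging can be verified from code. The sketch below assumes a boto3 CloudTrail client is passed in and uses its describe_trails and get_trail_status calls; the trails_not_logging helper is a hypothetical name. An empty result means every trail found is logging, but it does not prove that a trail exists, so check that case separately.

```python
def trails_not_logging(cloudtrail_client):
    """Return the names of trails that exist but are not currently logging."""
    stopped = []
    for trail in cloudtrail_client.describe_trails()["trailList"]:
        status = cloudtrail_client.get_trail_status(Name=trail["TrailARN"])
        if not status["IsLogging"]:
            stopped.append(trail["Name"])
    return stopped


# Usage, assuming boto3 is installed and credentials are configured:
#   import boto3
#   print(trails_not_logging(boto3.client("cloudtrail")))
```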

8 The root user is the identity created when your AWS account is established. It is advisable to create administrator roles for everyday tasks, rather than using the root user account. This is because the root user account comes with complete privileges. Restricting the use of this account can help protect it from misuse.

How a cloud SIEM solution can help secure your AWS environment

ManageEngine Log360 Cloud, a security information and event management (SIEM) solution in the cloud, can help you manage, monitor, and take control of AWS security. It supports collection of CloudTrail logs, ELB access logs, and S3 logs; aggregates this information; and presents it in the form of actionable reports.

Some key areas that Log360 Cloud can help you monitor:

- Amazon S3 log management

- Amazon S3 bucket logging

- AWS IAM activity reporting

- Auto-configuration of AWS

- AWS ELB traffic analysis

- AWS security group change auditing

- Amazon RDS activity reporting

- Forensic analysis using CloudTrail logs

For events that require immediate attention, Log360 Cloud lets you set up alerts for instant notifications through email or SMS. It also has an end-to-end incident management feature with built-in status tracking and ticket forwarding to facilitate smooth incident detection and resolution.

For a hands-on experience, try Log360 Cloud for free.

Would you like to talk