Shadow AI

Key takeaways

- Shadow AI refers to the use of AI tools (chatbots, code assistants, and content generators) by employees without the IT team's approval or oversight.

- Unlike shadow IT, shadow AI introduces unique security risks. Sensitive data entered into these tools may be stored, used for model training, or exposed to third parties outside your control.

- The consequences of shadow AI include data leakage; IP exposure; compliance violations under the GDPR, HIPAA, and SOC 2; and a significantly expanded attack surface.

- An effective defense against shadow AI combines visibility into AI tool usage, category-based access controls, and web filtering.

- ManageEngine DataSecurity Plus gives security teams the cloud app discovery, web filtering, and access control capabilities needed to detect and contain shadow AI activity before it escalates to a breach.

What is shadow AI?

Shadow AI is the unauthorized use of AI tools within an organization without the knowledge, approval, or oversight of IT or security teams. That includes generative AI (GenAI) chatbots like ChatGPT, code assistants like GitHub Copilot, content generation platforms, AI-powered browser extensions, and any AI-integrated SaaS tool an employee adopts on their own.

The intent is rarely malicious. Employees often reach for these tools to work faster—for instance, quickly summarizing documents, drafting reports, debugging code, or analyzing data. Yet in doing so, they run the risk of feeding sensitive company data into systems that operate entirely outside enterprise security controls. There is no vetting, no monitoring, and no visibility. The tool simply works, and the risk compounds in the background. Furthermore, this lack of control creates exploitable gaps where leaked data, exposed credentials, or insecure outputs can be leveraged by attackers to gain unauthorized access and cause a breach.

Shadow AI has accelerated sharply since GenAI entered the mainstream in late 2022, and GenAI web traffic has grown rapidly ever since. In 2024 alone, it grew by more than 890%. Because most of these tools are browser-based, free or low-cost, and require no installation, employees can start using them before the IT team even knows they exist.

Why shadow AI matters

1. An employee adopts an unsanctioned AI tool.

2. They feed it sensitive data.

3. Data is processed on external servers.

4. The IT team has zero visibility.

5. There's a potential breach or compliance violation.

Shadow IT vs. shadow AI

| Aspect | Shadow IT | Shadow AI |
|---|---|---|
| Definition | Use of technology without IT team approval (e.g., personal cloud storage and unapproved SaaS tools) | A subset of shadow IT involving the unauthorized use of AI tools, especially GenAI |
| Data handling and risks | Data is typically stored or transferred; lower-risk because it is limited to storage or transfer exposure | Data is processed and transformed and may persist beyond control; higher-risk because data may be retained, reused, or used for model training |
| Auditability | Easier to track and audit | Difficult to audit and fully trace data usage |
| Reversibility | Data can usually be removed or controlled | It's hard to reverse once data is processed or learned by models |
| Output behavior | Executes predefined functions; low-risk due to predictable outputs | Generates new content, code, or decisions; high-risk because it may introduce unnoticed errors into workflows |

How employees introduce shadow AI into the workplace

Shadow AI does not arrive through a single entry point. It seeps in through multiple channels, most of them entirely mundane and easily overlooked:

- Direct browser access: Employees visit ChatGPT, Gemini, Perplexity, or similar platforms directly in their browsers. These require no download, installation, or procurement process—just a tab.

- AI-integrated SaaS: Many SaaS platforms have embedded AI features in tools that support design, marketing, development, and HR functions. These features may not have been evaluated when the tool was originally approved.

- Browser extensions: AI-powered writing assistants, market research tools, and grammar tools are available as one-click browser extensions. They often operate across every tab, reading page content in the background.

- Personal accounts on enterprise platforms: An employee may access ChatGPT or Microsoft Copilot using a personal account on a corporate device, bypassing any enterprise-level security controls the organization has in place.

- API integrations: Developers and technically inclined employees may integrate third-party AI APIs directly into internal workflows, creating automated pipelines that feed sensitive data to external models.

The common thread across all of these is low friction. The tools are accessible, fast, and visibly useful. Employees are usually not bypassing security controls on purpose. Often, they begin using these tools before any controls have been defined.

Shadow AI risks: Why it is a data security problem

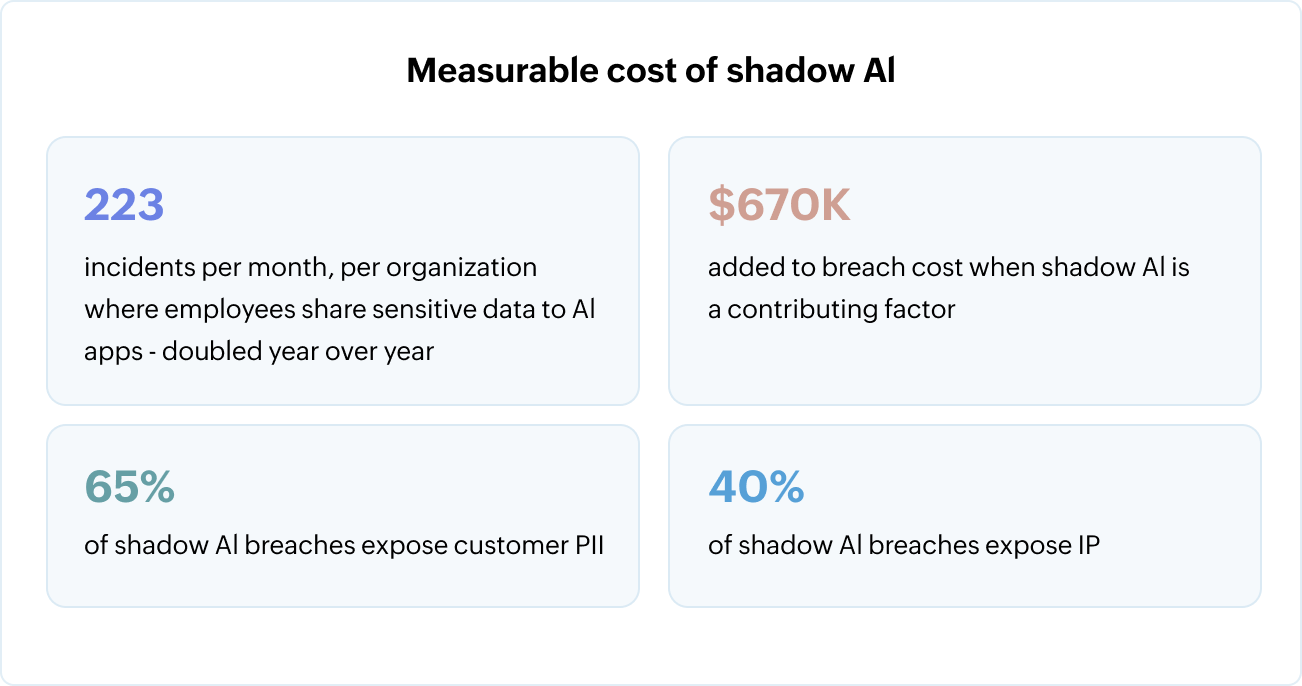

The security implications of shadow AI are not theoretical. Organizations are already facing the risks in the form of more incidents, higher breach costs, and greater exposure of sensitive data.

Each of these numbers traces back to one of three risk categories:

1. Data leakage and IP exposure

- Employees routinely share customer data, source code, financial models, and legal documents with AI tools to get faster outputs.

- Most consumer-grade AI tools store user inputs, and many may use them for model training unless the user opts out.

- The organization loses control of that data the moment it leaves the corporate perimeter, and often there's no record that it ever did.

2. Compliance violations

- The GDPR, HIPAA, SOC 2, the PCI DSS, and the CCPA all impose strict requirements on how personal and sensitive data is handled.

- Shadow AI tools are typically not evaluated for compliance, and they may process data in ways that violate those requirements.

- The organization remains accountable even though the tool was never officially sanctioned.

3. An expanded attack surface

- Malicious actors are aware that AI platforms attract sensitive enterprise data.

- Compromised AI vendors, prompt injection attacks, and manipulator-in-the-middle scenarios are all documented threat vectors.

- Every unsanctioned AI tool is an unmanaged element of the organization's attack surface, with security teams unable to defend what they cannot see.

Real-world shadow AI examples

The Samsung incident in 2023 is a widely cited example of shadow AI risk. Engineers at Samsung's semiconductor division used ChatGPT to assist with code debugging and meeting notes. In doing so, they uploaded proprietary source code and confidential internal data to OpenAI's servers. Samsung responded by restricting every employee's ChatGPT prompt to 1,024 bytes. This incident shows how quickly shadow AI exposure can escalate and how reactive measures do nothing to recover the data that has already left the organization.

The Samsung case is notable not because it was outrageous but because it was visible. Most shadow AI exposure goes unreported. A lawyer summarizing case notes in a chatbot, a financial analyst feeding earnings projections into an AI model, or a recruiter pasting candidate profiles into a content generator—these happen constantly, quietly, and without incident reports.

Shadow AI data exposure by team

| Team | Data typically fed to AI | Primary risks |

|---|---|---|

| Development | Source code, API keys, internal architecture documents, and bug reports | IP theft, credential exposure, and codebase leakage |

| Legal | Contracts, case notes, litigation strategies, and compliance documents | Privilege breaches and confidentiality violations |

| HR | Candidate profiles, performance reviews, salary data, and employees' PII | GDPR or CCPA violations and PII exposure |

| Marketing | Campaign strategies, customer lists, and unreleased product information | Exposure of marketing plans to competitors and customer data leakage |

| Leadership | Board presentations, strategic plans, and investor communications | High-value IP leakage and insider information risks |

How to detect shadow AI in your environment

Because shadow AI blends into normal web traffic, SaaS usage, and browser activity, detecting it is often a challenge without a proper cloud application discovery tool. Discovery means continuously scanning network traffic to identify which AI platforms employees are accessing, how frequently, and through what accounts. Consumer AI tools have recognizable traffic signatures and domain patterns, so a discovery tool that categorizes cloud app usage can surface unauthorized AI platforms that would otherwise be invisible.

Once shadow AI is discovered, look for these signs to further narrow it down:

- Employees accessing well-known AI platforms through personal accounts

- Unusual volumes of data being uploaded to AI-associated domains

- Browser extension activity from AI writing or summarization tools

- API calls to large language model APIs from devices that have no sanctioned AI workflows

- Spikes in outbound traffic to AI platforms around sensitive project deadlines

The goal of detection is not to build a list of violators and penalize them. It is to build an accurate picture of how AI is actually being used so that better governance decisions can be made, grounded in real behavior rather than assumptions.
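Two of the signals above (traffic to AI-associated domains and unusual upload volumes) can be sketched in a few lines of Python. This is a minimal illustration, not how any particular discovery product works: the `ProxyRecord` shape, the `AI_DOMAINS` watchlist, and the 5 MB threshold are all assumptions chosen for the example, and a real deployment would rely on a maintained category feed and your own proxy log format.

```python
from collections import defaultdict
from dataclasses import dataclass

# Illustrative watchlist only; a real deployment would use a maintained category feed.
AI_DOMAINS = {"chatgpt.com", "gemini.google.com", "perplexity.ai"}

# Assumed cutoff for "unusual" upload volume per user within the log window (bytes).
UPLOAD_THRESHOLD_BYTES = 5_000_000


@dataclass(frozen=True)
class ProxyRecord:
    """Hypothetical, simplified proxy log entry."""
    user: str
    host: str
    bytes_sent: int


def is_ai_domain(host: str) -> bool:
    """Return True if host equals, or is a subdomain of, a watched AI domain."""
    host = host.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in AI_DOMAINS)


def summarize_ai_uploads(records):
    """Total bytes sent to watched AI domains, keyed by (user, host)."""
    totals = defaultdict(int)
    for rec in records:
        if is_ai_domain(rec.host):
            totals[(rec.user, rec.host.lower())] += rec.bytes_sent
    return dict(totals)


def flag_heavy_uploaders(records, threshold=UPLOAD_THRESHOLD_BYTES):
    """Users whose combined uploads to AI domains exceed the threshold."""
    per_user = defaultdict(int)
    for (user, _host), sent in summarize_ai_uploads(records).items():
        per_user[user] += sent
    return sorted(user for user, sent in per_user.items() if sent > threshold)


SAMPLE = [
    ProxyRecord("alice", "chatgpt.com", 4_000_000),
    ProxyRecord("alice", "api.perplexity.ai", 2_000_000),
    ProxyRecord("bob", "gemini.google.com", 10_000),
    ProxyRecord("bob", "example.com", 9_000_000),  # large upload, but not an AI domain
]

if __name__ == "__main__":
    print(summarize_ai_uploads(SAMPLE))
    print(flag_heavy_uploaders(SAMPLE))
```

In keeping with the goal described above, the output is a usage picture (who uses which platform, and how heavily) that can inform governance decisions rather than a list of violators.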

How to manage shadow AI risks across enterprise tools

Shadow AI risks vary across enterprise platforms based on how each GenAI tool accesses, processes, and generates data.

Managing shadow AI in Microsoft 365

The core risk with ChatGPT-like capabilities in Microsoft 365 (via Copilot) is how broadly AI can access and surface internal data. Copilot does not rely only on what a user types. It operates over emails, documents, chats, and meetings, meaning:

- Sensitive data may be pulled from all accessible files across the organization, leading to the exposure of information that users did not explicitly request but had indirect access to.

- Data shared in one context (e.g., Teams) may reappear in another (e.g., Word or Outlook).

From a risk standpoint, this is a data visibility expansion problem, not just a prompt issue. Managing this requires:

- Limiting what data AI can access across Microsoft 365 services.

- Ensuring sensitive content is not included in AI-generated outputs.

- Monitoring how AI features surface and recombine organizational data.

Managing shadow AI in Google Workspace

The primary concern with Google Gemini in enterprise environments is how easily sensitive data can be included in AI interactions during everyday work. Gemini is embedded directly into Docs, Sheets, and Gmail, which means:

- Sensitive information already present in documents or emails may be sent to AI through prompts.

- Users may not be able to distinguish between editing content and sharing it with an AI system.

- Real-time assistance reduces friction, increasing the likelihood of unintentional data exposure.

This creates a context-driven data leakage risk where the issue is not what AI retrieves but what users include without realizing it. Managing this requires:

- Restricting AI usage in documents and emails containing sensitive data.

- Controlling where Gemini features are enabled across Workspace.

- Increasing visibility into how and when users invoke AI within active workflows.

Managing shadow AI in GitHub Copilot

The key data privacy concern with GitHub Copilot is how proprietary code is exposed and how generated code is introduced back into systems. GitHub Copilot operates inside development environments, where:

- Prompts may contain confidential business logic or internal implementation details.

- Generated code may reflect patterns from training data or existing codebases.

- Outputs can be directly used in production, increasing the impact of errors or insecure code.

This causes data exposure risks through prompts and code integrity risks through AI-generated outputs. Managing this requires:

- Limiting GitHub Copilot usage in sensitive or proprietary repositories.

- Treating AI-generated code as untrusted until reviewed.

- Ensuring generated outputs meet security and quality standards before deployment.
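One way to reduce exposure through prompts is to screen text for likely credentials before it reaches any AI tool. The sketch below is a rough illustration under stated assumptions: the three patterns are examples only and far from exhaustive, the function names are hypothetical, and nothing here reflects how GitHub Copilot itself handles prompts. A dedicated secret scanner would be the better choice in practice.

```python
import re

# Illustrative, non-exhaustive patterns. Real secret scanners cover many more formats.
SECRET_PATTERNS = {
    # AWS access key IDs commonly start with "AKIA" followed by 16 uppercase letters or digits.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # PEM-style private key headers, e.g. "-----BEGIN RSA PRIVATE KEY-----".
    "private_key_header": re.compile(r"-----BEGIN (?:[A-Z]+ )?PRIVATE KEY-----"),
    # Assignments such as password = "..." or api_key: ... with a value of 8+ characters.
    "assigned_secret": re.compile(
        r"(?:api[_-]?key|secret|token|password)\s*[:=]\s*['\"]?[^\s'\"]{8,}",
        re.IGNORECASE,
    ),
}


def find_secrets(text: str) -> list[str]:
    """Return the sorted names of all patterns that match somewhere in the text."""
    return sorted(
        name for name, pattern in SECRET_PATTERNS.items() if pattern.search(text)
    )


def redact(text: str) -> str:
    """Replace every match with a placeholder naming the pattern that fired."""
    for name, pattern in SECRET_PATTERNS.items():
        text = pattern.sub(f"[REDACTED:{name}]", text)
    return text


if __name__ == "__main__":
    prompt = 'Why does this fail? password = "hunter2hunter2" key AKIAABCDEFGHIJKLMNOP'
    print(find_secrets(prompt))
    print(redact(prompt))
```

A check like this catches only obvious credential formats. It does not detect confidential business logic, which is why repository-level restrictions and code review remain necessary.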

How to build a shadow AI governance framework

A governance framework is not a ban. Bans do not work. What works is a structured approach that channels the employee demand for AI tools toward sanctioned, secure options while maintaining visibility over the rest. Here's a quick checklist to get you started:

- Start with an AI inventory: Identify every AI tool currently in use across your organization, including those embedded in approved SaaS platforms. This is your baseline. You cannot make policy decisions without knowing what you are governing.

- Define an acceptable use policy: Document which AI tools are approved, what data can be entered as prompts, what files can be uploaded, and what constitutes a violation. Make the policy accessible and specific.

- Implement technical controls: Every policy decision needs a corresponding technical control. Work with your security team to identify and prioritize controls based on your highest-risk exposure points. Controls include:

  - Category-based blocking to restrict access to high-risk AI platforms.

  - Web filtering to prevent access to known malicious or unapproved AI domains.

  - Login controls to block employees from accessing AI platforms through personal accounts on corporate devices.

- Establish a sanctioned AI pathway: Cut through the red tape and give employees a fast, accessible route to approved AI tools. Shadow AI thrives when the official process is slow or unavailable. If employees can get an AI tool approved within a reasonable timeframe, most will use that route.

- Monitor AI usage continuously: AI tool usage evolves constantly. New platforms emerge, existing tools add new features, and employee behavior shifts. Governance is not a one-time exercise. It requires continuous visibility and regular policy reviews.
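The pairing of policy decisions with technical controls in the checklist above can be illustrated as a small decision function. This is a sketch under assumptions, not a description of any product's behavior: the category labels, the `example-corp.test` domain, and the `AccessRequest` shape are hypothetical placeholders for whatever your AI inventory and acceptable use policy define.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    BLOCK = "block"


# Hypothetical values; real ones would come from your AI inventory and policy.
BLOCKED_CATEGORIES = {"ai_chatbot", "ai_writing", "ai_code_assistant"}
SANCTIONED_DOMAINS = {"copilot.example-corp.test"}
CORPORATE_EMAIL_SUFFIX = "@example-corp.test"


@dataclass(frozen=True)
class AccessRequest:
    domain: str          # destination the employee is trying to reach
    category: str        # category assigned by a (hypothetical) web filter
    account_email: str   # account used to sign in


def evaluate(request: AccessRequest) -> tuple[Decision, str]:
    """Apply sanctioned-list, login, and category controls, in that order."""
    domain = request.domain.lower()
    if domain in SANCTIONED_DOMAINS:
        # Login control: sanctioned tools are allowed only with corporate accounts.
        if not request.account_email.lower().endswith(CORPORATE_EMAIL_SUFFIX):
            return Decision.BLOCK, "personal account on a sanctioned tool"
        return Decision.ALLOW, "sanctioned tool with a corporate account"
    if request.category in BLOCKED_CATEGORIES:
        # Category-based blocking: covers new AI platforms without a manual blocklist.
        return Decision.BLOCK, f"unapproved tool in blocked category {request.category}"
    return Decision.ALLOW, "not in a blocked AI category"


if __name__ == "__main__":
    req = AccessRequest("copilot.example-corp.test", "ai_code_assistant", "dev@gmail.com")
    print(evaluate(req))
```

Checking the sanctioned list first keeps the approved pathway open even when the tool's category is otherwise blocked, which mirrors the idea of channeling demand toward approved options instead of banning AI outright.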

Manage shadow AI with DataSecurity Plus

Addressing shadow AI is fundamentally a visibility and control problem. ManageEngine DataSecurity Plus gives security teams both. With DataSecurity Plus, you can:

Monitor GenAI prompt activity

Track what employees are entering into AI tools across your organization and gain visibility into prompt activity across sanctioned and unsanctioned platforms.

Discover shadow AI tools

Discover shadow cloud applications across your organization, including shadow AI platforms, to get a clear view of which AI tools are being used, by whom, and how frequently.

Block unauthorized AI platforms

Block employee access at the URL level to high-risk or unapproved AI platforms and browser-based AI tools that require no installation.

Block entire AI app categories

Apply category-based blocking to entire AI app categories like chatbots, AI writing tools, and code assistants—without having to maintain manual blocklists. As new AI platforms emerge, category-based controls extend automatically.

Restrict personal account access

Prevent employees from accessing authorized AI platforms through personal accounts on corporate devices so that AI usage flows through monitored, enterprise-managed accounts.

Frequently asked questions

What is shadow AI?

Shadow AI refers to the use of AI tools, like GenAI chatbots, code assistants, and AI-integrated SaaS platforms, by employees without IT or security team approval. These tools operate outside enterprise controls, creating data security, compliance, and governance risks of which organizations may be entirely unaware.

How is shadow AI different from shadow IT?

Shadow IT is the broader term referring to any technology used without IT team approval. Shadow AI is a more specific, riskier subset of shadow IT. With shadow IT, the concern is mostly about what tools exist and who controls them. With shadow AI, the concern extends to what happens to the data inside those tools, what the tools do with it, and what decisions get made based on their output. Shadow AI is a harder problem to govern because the exposure is less visible and harder to reverse.

Why is shadow AI a compliance risk?

Most consumer-grade AI tools were not designed with enterprise compliance in mind. They may process data in ways that violate data residency requirements, lack audit trails that regulators require, and retain data beyond what data protection laws permit.

How do I detect shadow AI in my organization?

Start with cloud application discovery. Continuously scan network traffic to identify which AI platforms employees are accessing and through what accounts. Supplement this with monitoring for unusual outbound data volumes to AI-associated domains, personal account logins to known AI platforms on corporate devices, and browser extension activity from AI tools.

What data is most at risk from shadow AI?

Most shadow-AI-related breaches expose PII and IP. Source code, customer data, financial models, legal documents, and HR records are among the most commonly exposed data types.