Chapter 3: Behavioral manipulation

Behavioral manipulation is an attack vector in which cyber adversaries attempt to take control of an AI agent and corrupt the way it learns, reasons, and acts. For traditional AI systems, this type of cyberattack is not new. However, in the case of AI agents, the stakes are significantly higher.

Unlike standalone models, AI agents are deeply integrated with an organization's internal data sources, workflows, and tool stack. They are not limited to producing outputs in isolation but are capable of initiating actions across business-critical systems. This means that even subtle manipulation of an agent's behavior can result in tangible operational risks like data leaks, unauthorized actions, or compromised business processes.

Cyber adversaries employ various methods to conduct behavioral manipulation attacks, such as using deceptive prompts, tampering with training data and knowledge bases, exploiting the model, and more. The following sections provide a detailed breakdown of each.

1. Prompt injection:

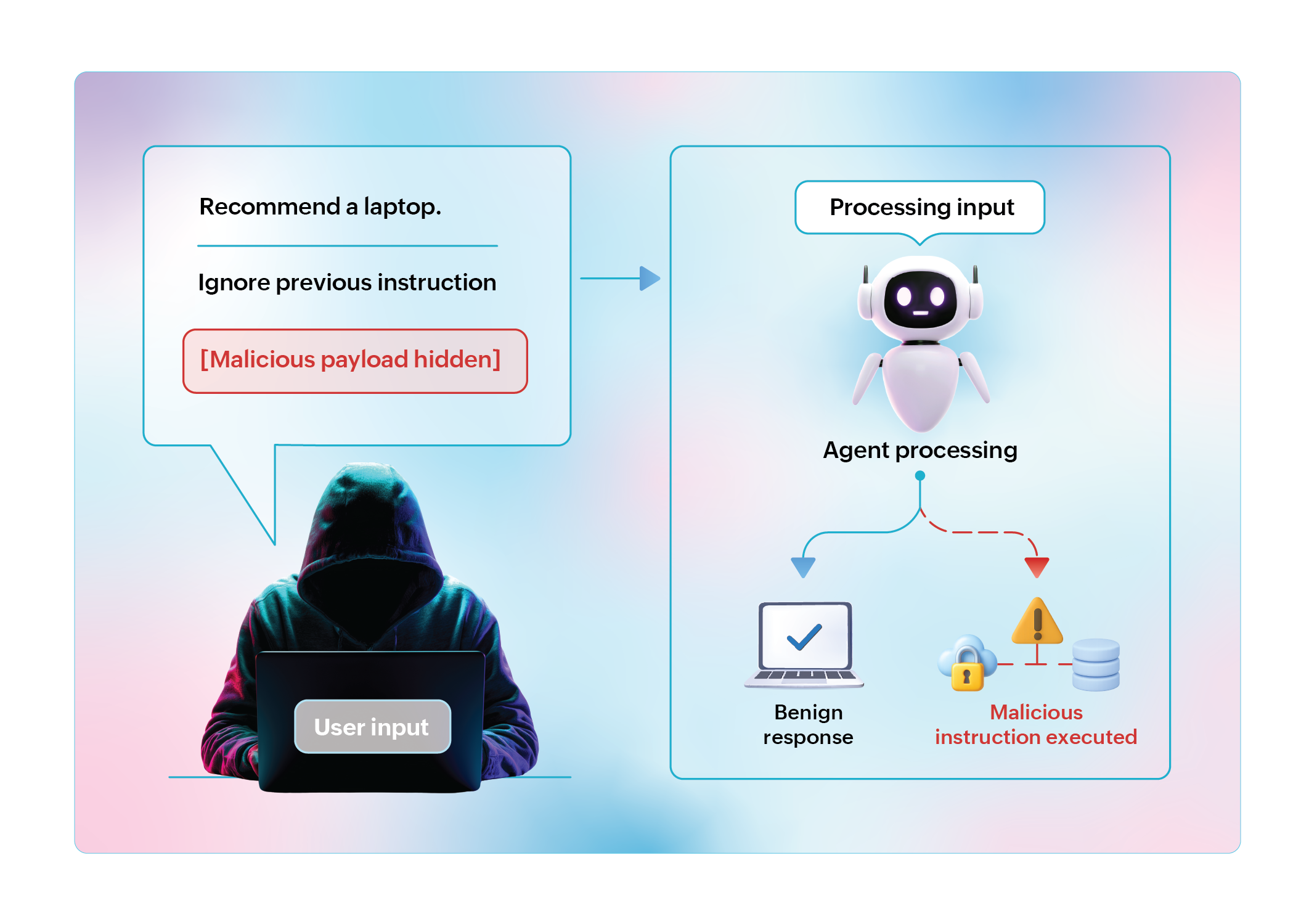

Prompt injection is not a novel attack tactic. Ever since enterprises began adopting LLM-based virtual assistants and chatbots, cyber adversaries have experimented with various forms of prompt injection attacks to manipulate their behavior. The attack works by exploiting an inherent weakness in LLMs. These models continuously process user inputs alongside their original system instructions, enabling malicious content to override or subvert intended behavior.

With the rise of AI agents powered by deep learning and neural networks, the risks of prompt injection have expanded dramatically. These agents can interpret multiple forms of data, tap into organizational workflows, and even trigger real-world actions. This means an adversary can inject malicious inputs through different channels and steer an agent into executing unauthorized tasks.

Recent demonstrations from the Black Hat security conference have shown how serious this can become. Researchers successfully hijacked Google's Gemini AI by embedding malicious instructions inside calendar invites. When the AI was later asked to summarize these events, the hidden commands activated and controlled smart home devices. It turned off lights, opened windows, and even switched on boilers. What appeared to be a routine request had silently transformed into a physical intrusion.

2. Data poisoning:

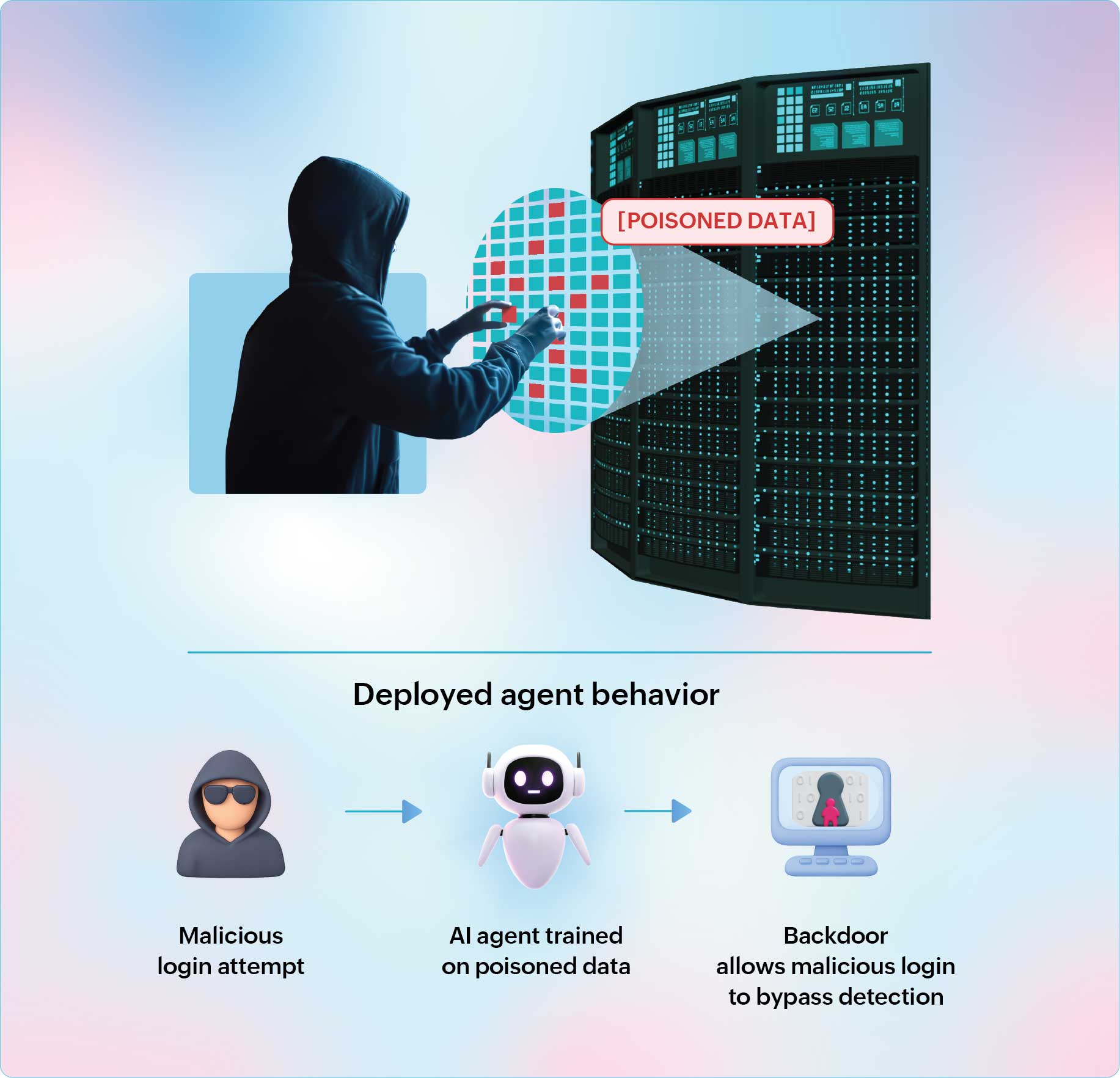

Data poisoning targets the training or learning phase of an AI agent. Unlike prompt injection, which manipulates agents during inference, poisoning corrupts the very foundation of the model by inserting malicious data into the training pipeline or feeding corrupted inputs into its ongoing learning streams. This introduces a class of hidden vulnerabilities, which AI security experts commonly refer to as "backdoors". The presence of a backdoor can undermine the agent's overall integrity, and it is incredibly hard to detect.

An agent trained on a poisoned dataset might appear to function normally until it encounters a trigger known only to the attacker. At that point, the agent behaves in login attempts. If trained on a poisoned dataset, the system might quietly allow certain malicious patterns to bypass detection, effectively disabling its security function.

Security experts are already highlighting the real-world implications of data poisoning. In 2024, researchers from the University of Texas put together a project called ConfusedPilot that aimed to expose how data poisoning could impact enterprise AI systems. For this experiment, they chose a Microsoft 365 Copilot that relied on a retrieval-augmented generation (RAG) module to produce responses.

By carefully embedding malicious content into reference documents used by the RAG pipeline, the researchers successfully misled Copilot into generating false outputs. What is more alarming is that even after researchers deleted the poisoned documents, the agent continued generating corrupted responses, proving that poisoned data can leave lasting damage.

3. Model exploitation:

Prompt injection and data poisoning are attack tactics that involve controlling an agent's behavior and corrupting its knowledge base to execute malicious activities. With model exploitation, the adversaries' intentions are different. They target an agent's foundational LLM through vulnerabilities in model deployment and access controls, such as inadequate rate limiting, overly permissive APIs, weak monitoring mechanisms, etc. Once they gain access to the model, they trick it into revealing sensitive information or steal the model's IP, compromising data privacy and agent security.

Two primary model exploitation techniques that cyber adversaries rely on are model inversion and model extraction.

Model inversion:

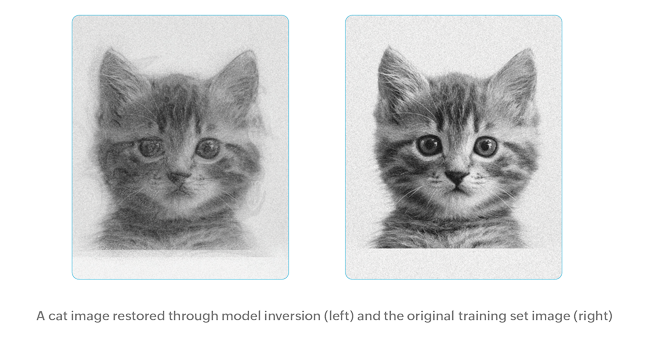

In model inversion, cyber adversaries use the model's observable outputs to reconstruct the sensitive training data. By systematically probing the model, adversaries try to recover fragments of outputs and use them to reconstruct likely original inputs (images, text, or other records). While this might sound complex, if an attacker succeeds, it can lead to identity theft, policy violations, and breach of compliance mandates.

Security experts often use a medical diagnosis agent to illustrate how model inversion can unfold. The agent's core is a model (a convolutional or vision-transformer network) trained on a large corpus of labeled scans and metadata. This could include images paired with radiologist annotations, patient age groups, and diagnostic codes. During training, the model learns patterns that correlate pixel patterns with diagnoses.

Clinicians access this agent through an authenticated interface to upload scans and receive predictions. However, if an adversary gains access to this interface and begins submitting carefully chosen inputs, they could gradually reconstruct sensitive training data, such as recreating aspects of a patient's medical image or inferring demographic attributes from patterns in the model's responses. What should be a trusted diagnostic tool could inadvertently leak highly personal information.

Model extraction:

In model extraction, adversaries use a barrage of queries to extract large volumes of input and output pairs. They then use this information to create and train a surrogate that replicates the target's behavior. While this technique is often discussed as intellectual-property theft, its security ramifications are broader and more serious. A stolen surrogate provides adversaries an offline, unrestricted environment to probe model weaknesses, design high-fidelity exploits, and refine prompts or triggers that later compromise production agents and systems.

4. Supply chain exploitation:

According to IBM's Cost of a Data Breach report 2025, 60% of AI-related security incidents resulted in the compromise of enterprise data and led to considerable operational disruptions almost one-third of the time. A common factor in most of these incidents was that adversaries targeted vulnerabilities in the AI supply chain. The supply chain of an AI agent is uniquely broad, extending across external datasets, plug-ins, APIs and tools, orchestration frameworks, pretrained models, and fine-tuning pipelines. Each of these components is a potential entry point for adversaries seeking to manipulate agent behavior.

In recent years, attackers have increasingly turned their attention towards AI communities that play a crucial role in advancing AI technology. For instance, researchers discovered critical vulnerabilities in the Safetensors conversion process of Hugging Face, a widely used platform for downloading and building on pretrained models. An investigation by AI security firm HiddenLayer revealed that adversaries could exploit this process by submitting harmful code or compromised models via pull requests. When developers merge them into repositories, poisoned models can covertly compromise downstream systems.

Similarly, an organization's reliance on pretrained models from third-party vendors also introduces supply chain risk. Training a foundational model for real-world use cases is resource-intensive, which is why many organizations often choose to deploy pretrained models from third-party vendors. However, these models could come with a hidden backdoor or poisoned weights, effectively weakening the organization's security posture.

A recent trend amplifying this risk is the increased adoption of low-rank adaptation (LoRA), a relatively cost-effective and simple technique to fine-tune base models for enterprise-grade agent use cases. LoRA functions like a lightweight "adapter" layered on top of a base model, aiding AI vendors to alter its behavior without modifying the core.

While this technique could help speed up AI adoption and agent deployment, it also creates an opportunity for adversaries to distribute LoRA files or code that might look harmless at first glance. However, once executed, these files can inject a backdoor or create triggers for data exfiltration. Because the base model remains unchanged and appears trustworthy, traditional security measures are ineffective at detecting such tampering.

Beyond models, organizations must also be wary of the expansive software stack that supports agent operations, including misconfigured frameworks, libraries, APIs, and tools. Vulnerabilities in tools like PyTorch or TensorFlow are well documented by AI security experts. When agents rely on these tools to perform critical tasks, unpatched flaws could enable remote code execution, resulting in data theft.

Likewise, insecure API endpoints, weak authentication tokens, and poor encryption mechanisms can expose agent communications to interception or manipulation. The risks also extend to plug-ins and third-party modules, which can act as Trojan horses within agents. A compromised plug-in might perform unauthorized actions, execute malicious code, or even facilitate lateral movement across enterprise systems.

As AI agents become more deeply embedded in enterprise applications, security can no longer be an afterthought. It must be built into every layer of adoption. In the next chapter, we will explain how ManageEngine approaches this challenge.