Chapter 2: What are AI agents

AI agents are intelligent systems programmed to execute tasks with minimal human intervention. Their high level of autonomy, while enabling efficiency, also creates new avenues for enterprise security risks. To understand how these risks emerge, it is essential to first build a clear picture of what AI agents are. This involves three key steps:

Examining core technologies that underpin them:

At their foundation, statistical machine learning and deep learning drive AI agents. These technologies enable functions ranging from anomaly detection to perception and reasoning. Knowing how they work is essential for mapping how agents operate and identifying the unique security vulnerabilities they introduce.

Studying the key features of AI agents:

AI agents have unique characteristics. While these attributes play a pivotal role in ensuring operational efficiency, they also have the potential to become the next big security headache. A thorough examination of these features is essential for proactive threat detection.

Breaking down the architecture:

The underlying architecture is the most crucial piece of an AI agent's security profile. By analyzing the layers and elements that constitute an agent, organizations can gain a granular view of vulnerabilities and grasp how an issue in one layer, if left unaddressed, cascades into broader enterprise risks.

1. Statistical machine learning and deep learning

Even though machine learning (ML) gained widespread public attention only recently, it is not a new technology. AI experts trace its origins back to the 1950s, when renowned British mathematician Alan Turing pioneered the idea of designing intelligent machines and proposed the Turing Test (originally called the Imitation Game). Turing's intention with this test was to determine whether a machine could exhibit intelligent behavior indistinguishable from that of a human.

While Turing's ideas do not define ML directly, they laid the foundation for building machine intelligence. As ML progressed from its conceptual roots, two major streams emerged that shaped the technology and paved the way for today's autonomous agents: statistical machine learning and deep learning.

Statistical machine learning

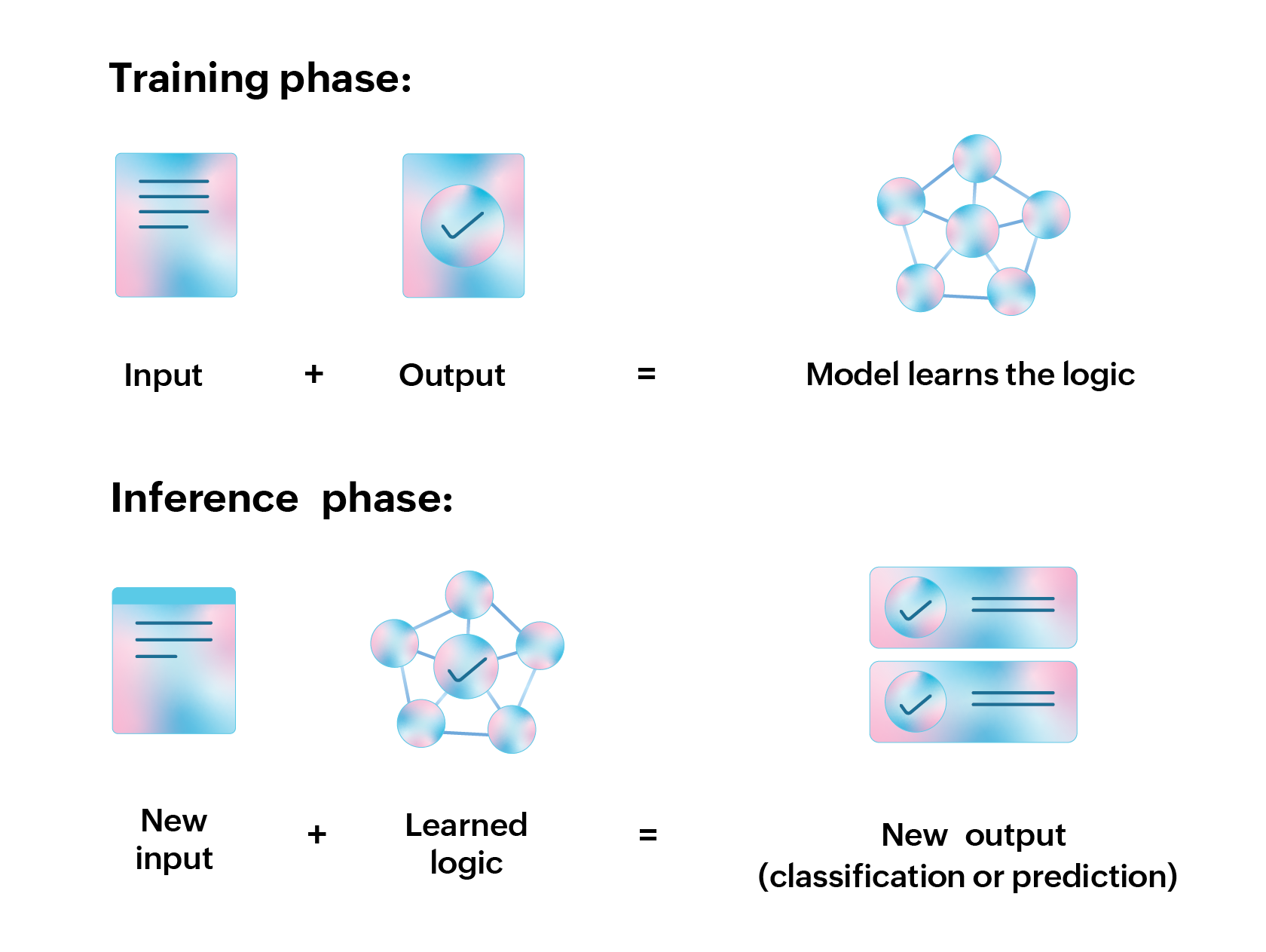

Statistical machine learning involves applying mathematical techniques such as linear regression, logistic regression, and decision trees to train and build AI systems. These systems can learn from data, identify patterns in a given dataset, and enable data-driven decision-making. Many AI systems from the pre-ChatGPT era rely heavily on statistical machine learning, especially for use cases like classification, anomaly detection, and forecasting.

One real-life example of an AI system that uses statistical machine learning is the Gmail spam filter. Engineers behind the Gmail application have trained it using a broad dataset containing millions of spam emails. Over time, the system learned to identify recurring traits and patterns common to spam messages. When a new email arrives, the model evaluates it against these learned traits. If the characteristics match, the email is automatically routed to the spam folder.
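The learn-then-evaluate loop described above can be sketched with a tiny naive Bayes classifier. This is only an illustration: the source does not say which algorithm Gmail uses, and the training messages, the `spam`/`ham` labels, and the function names below are all made up for the example.

```python
import math
from collections import Counter

def tokenize(text):
    """Lowercase a message and split it into word tokens."""
    return text.lower().split()

def train(examples):
    """Count word frequencies per label from (text, label) pairs."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(tokenize(text))
    return word_counts, label_counts

def classify(text, word_counts, label_counts):
    """Return the label with the higher log-probability under naive Bayes."""
    vocab = set(word_counts["spam"]) | set(word_counts["ham"])
    total_docs = sum(label_counts.values())
    scores = {}
    for label in ("spam", "ham"):
        # Prior: fraction of training messages that carry this label.
        score = math.log(label_counts[label] / total_docs)
        total_words = sum(word_counts[label].values())
        for word in tokenize(text):
            # Add-one smoothing avoids log(0) for words unseen in this label.
            count = word_counts[label][word] + 1
            score += math.log(count / (total_words + len(vocab)))
        scores[label] = score
    return max(scores, key=scores.get)

# Toy, made-up training data for illustration only.
TRAINING = [
    ("win a free prize now", "spam"),
    ("claim your free money today", "spam"),
    ("limited offer win cash", "spam"),
    ("meeting agenda for monday", "ham"),
    ("please review the attached report", "ham"),
    ("lunch on friday with the team", "ham"),
]

word_counts, label_counts = train(TRAINING)
```

Calling `classify("free cash prize", word_counts, label_counts)` evaluates a new message against the learned word statistics, which mirrors the "match against learned traits" step in the text. A production filter would use far more data and many more signals than word counts.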

How statistical machine learning works:

The Gmail spam filter example

In a conventional software system, the workflow is straightforward:

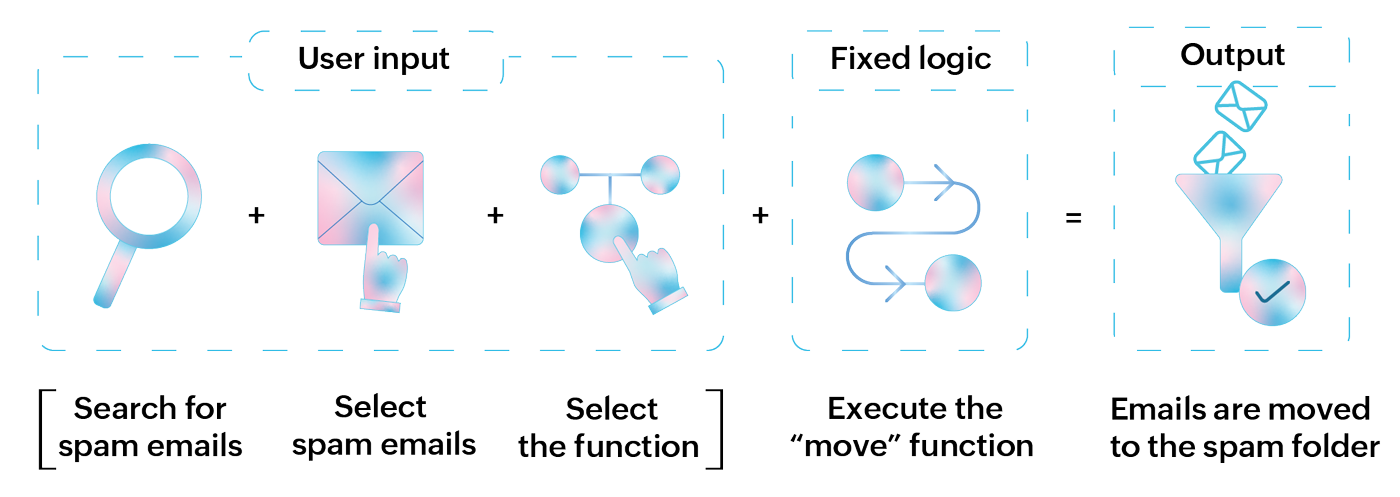

If this workflow is applied to the Gmail spam filter example, it becomes:

This approach is not automated, requires manual effort, and is prone to errors.

Like this spam-classification system, statistical machine learning can also detect anomalies and perform predictive tasks. For an AI agent, however, these are only basic capabilities. Beyond applying statistical techniques, an agent must be able to process different data formats, understand user language, generate new data, and exhibit a high level of autonomy. This is where deep learning becomes pivotal.

Deep learning

One of the major drawbacks of statistical machine learning is that it works best with structured data. That is, it primarily relies on well-organized formats, such as tabular records or numerical values, where patterns can be easily identified. However, with the current explosion of data, unstructured forms of data like images, audio, and video have become equally crucial. To analyze them, AI systems need something closer to human-like perception and reasoning. This is the core idea behind deep learning.

Two key elements of deep learning that are integral to today's AI agents are neural networks and large language models (LLMs). While LLMs are themselves a specialized type of neural network, their scale and language-centric capabilities warrant a closer look.

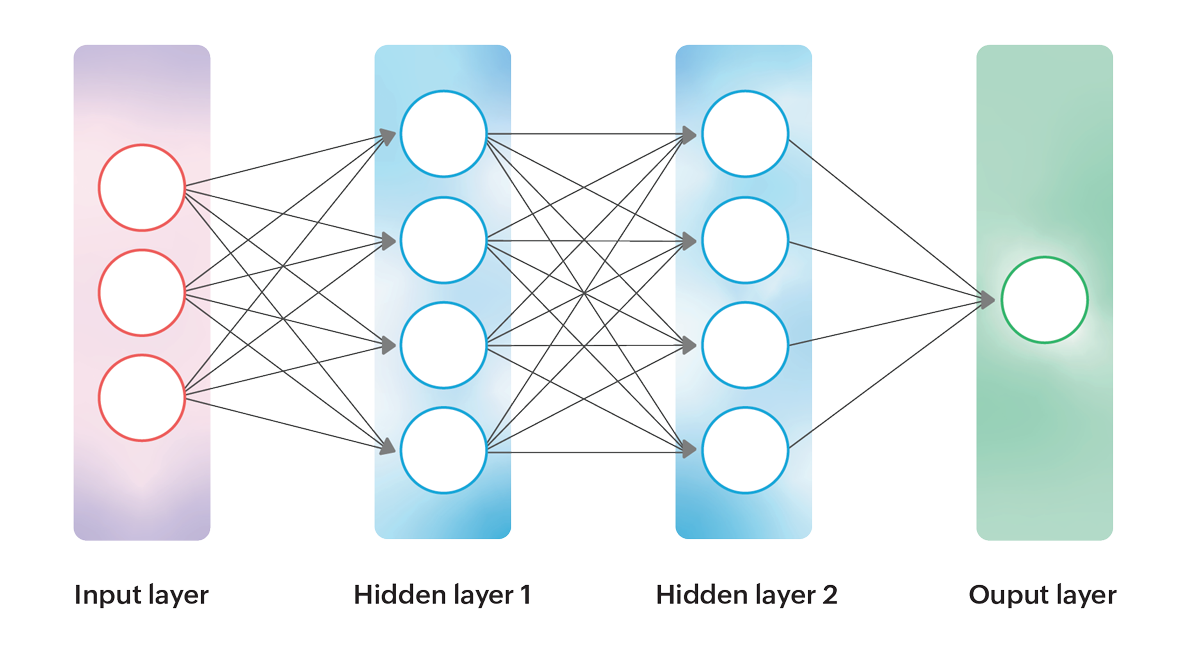

Neural networks:

Neural networks form the backbone of deep learning. Inspired by how the human brain processes information, they consist of layers of interconnected nodes, often called "neurons". Each neuron receives inputs, multiplies each one by a weight, sums the results, passes the sum through an activation function, and forwards the output to the next layer. By stacking multiple layers of these neurons, neural networks can learn increasingly complex representations of data.
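The per-neuron computation and the stacking of layers can be sketched in a few lines of Python. This is a minimal forward pass only, with no training. The sigmoid activation, the bias term, and the hand-picked weights in `NETWORK` are assumptions chosen for illustration.

```python
import math

def sigmoid(z):
    """Squash a real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias, passed through an activation."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(z)

def layer(inputs, weight_rows, biases):
    """A layer is a list of neurons that all read the same inputs."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]

def forward(inputs, layers):
    """Feed the output of each layer into the next one."""
    activations = inputs
    for weight_rows, biases in layers:
        activations = layer(activations, weight_rows, biases)
    return activations

# Hypothetical, hand-picked weights: 2 inputs -> 2 hidden neurons -> 1 output.
NETWORK = [
    ([[0.5, -0.5], [1.0, 1.0]], [0.0, -1.0]),
    ([[1.0, -1.0]], [0.0]),
]

output = forward([1.0, 1.0], NETWORK)
```

In a trained network the weights and biases are learned from data rather than written by hand; the structure of the computation stays the same.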

For example, in an image recognition task, the first layer might detect simple features like edges or corners, the next layer might combine those to identify shapes, and deeper layers might recognize entire objects. This ability to learn hierarchies of features makes neural networks particularly powerful when dealing with unstructured data.

| Name | Use case |
|---|---|
| Feedforward Neural Networks (FNN) | Simple classification and regression |
| Convolutional Neural Networks (CNN) | Facial recognition and object detection |
| Recurrent Neural Networks (RNN) | Language translation and speech-to-text functions |
| Transformers | Text summarization, Q&A, translation, and multi-modal tasks |

Large language models:

LLMs are a specialized type of deep learning model designed to understand and generate human language. Advanced neural network architectures, most notably transformers, power LLMs by capturing the context and relationships between words in long pieces of text.
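One way transformers capture relationships between words is an operation called self-attention, in which every word scores its similarity to every other word and blends their vectors accordingly. The sketch below is a deliberately simplified version: it skips the learned query, key, and value projections that real transformers use, and the two-dimensional `EMBEDDINGS` are made-up values.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def self_attention(vectors):
    """Scaled dot-product self-attention without learned projections.

    Each word vector acts as its own query, key, and value, which is a
    simplification made for this example.
    """
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Similarity of this word to every word in the sequence.
        scores = [dot(query, key) / math.sqrt(dim) for key in vectors]
        weights = softmax(scores)
        # Context-aware vector: weighted blend of all word vectors.
        mixed = [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(dim)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Made-up 2-dimensional "embeddings" for a three-word sentence.
EMBEDDINGS = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
contextual, attention = self_attention(EMBEDDINGS)
```

Each row of `attention` shows how strongly one word attends to the others. Here the first word places more weight on the second word, whose vector is similar, than on the third.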

What sets LLMs apart is the scale of their training. Trained on massive datasets containing billions of words, they learn grammar, facts, reasoning patterns, and even subtle nuances of human communication. As a result, they can perform a wide range of tasks, such as answering questions, summarizing documents, writing code, engaging in natural conversations, and much more.

LLMs like GPT-5 have become the foundation of modern AI agents because they enable machines not just to classify or predict, but to interact in a way that feels intelligent, adaptive, and human-like.

2. Core traits that define AI agents

AI agents exhibit three defining traits that set them apart:

Goal-driven and autonomous:

An AI agent must be goal-driven and capable of independently executing complex, multi-step tasks. It should analyze user inputs, interpret requirements, and generate sequential plans that lead toward an outcome without constant human intervention.

For instance, an AI agent tasked with booking flight tickets receives the user's request, reviews calendar availability, compares ticket fares across different dates, and presents the most suitable options. The user simply selects the best one.

Self-learning:

AI agents are not designed to start from scratch with every task. With each interaction, they learn and apply previously stored knowledge to new challenges.

Returning to the flight booking example, if an agent detects recurring preferences, such as choosing morning flights or selecting tickets under a specific price range, it incorporates this context into future decisions. This ability to adapt ensures that responses feel more natural, personalized, and aligned with the user's preferences.
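A minimal sketch of this kind of preference memory might look as follows. The `Flight` and `PreferenceMemory` classes, the flight IDs, and the morning cutoff at noon are hypothetical; a real agent would likely track many more signals and persist them between sessions.

```python
from collections import Counter
from dataclasses import dataclass, field

@dataclass
class Flight:
    flight_id: str
    departure_hour: int  # 0-23, local time
    price: float

@dataclass
class PreferenceMemory:
    """Remembers which part of the day the user has picked in the past."""
    period_counts: Counter = field(default_factory=Counter)

    @staticmethod
    def period(hour):
        # Assumption for this sketch: anything before noon counts as morning.
        return "morning" if hour < 12 else "afternoon_or_evening"

    def record_choice(self, flight):
        self.period_counts[self.period(flight.departure_hour)] += 1

    def preferred_period(self):
        if not self.period_counts:
            return None
        return self.period_counts.most_common(1)[0][0]

def rank_flights(flights, memory):
    """Put flights in the preferred period first, then sort by price."""
    preferred = memory.preferred_period()
    return sorted(
        flights,
        key=lambda f: (memory.period(f.departure_hour) != preferred, f.price),
    )

# Two past bookings, both in the morning, shape the next ranking.
memory = PreferenceMemory()
memory.record_choice(Flight("AB101", 7, 220.0))
memory.record_choice(Flight("AB205", 9, 250.0))

options = [
    Flight("CD300", 18, 150.0),
    Flight("CD112", 8, 210.0),
    Flight("CD118", 6, 190.0),
]
ranked = rank_flights(options, memory)
```

Even though the evening flight is the cheapest, the two morning flights are ranked first because the stored history points to a morning preference.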

Tool and system orchestration:

AI agents cannot rely solely on their training data to function effectively. They must access real-time information from internal and external data sources and interact with proprietary and third-party tools via APIs or Model Context Protocol (MCP) servers. This dynamic ability allows them to move beyond static knowledge, expand their functionality, and deliver more autonomous, actionable outputs.
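Tool orchestration is often implemented as a registry that maps tool names to callables, so the agent can only invoke what has been explicitly registered. The sketch below assumes two hypothetical tools, `get_fare` and `check_calendar`, backed by hard-coded data; in practice each would wrap an API call or an MCP server request.

```python
from typing import Callable, Dict

# Hypothetical tool implementations with stand-in data.
def get_fare(route: str) -> str:
    fares = {"NYC-LON": "450 USD"}  # stand-in for a live fare lookup
    return fares.get(route, "unknown route")

def check_calendar(date: str) -> str:
    busy = {"2025-01-10"}  # stand-in for a calendar service
    return "busy" if date in busy else "free"

# Registry of the tools this agent is allowed to use.
TOOLS: Dict[str, Callable[[str], str]] = {
    "get_fare": get_fare,
    "check_calendar": check_calendar,
}

def call_tool(name: str, argument: str) -> str:
    """Dispatch a tool request by name, rejecting anything not registered."""
    if name not in TOOLS:
        raise KeyError(f"tool not registered: {name}")
    return TOOLS[name](argument)
```

Routing every call through `call_tool` gives a single place to restrict, log, or audit what the agent is able to do.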

3. The blueprint of an AI agent

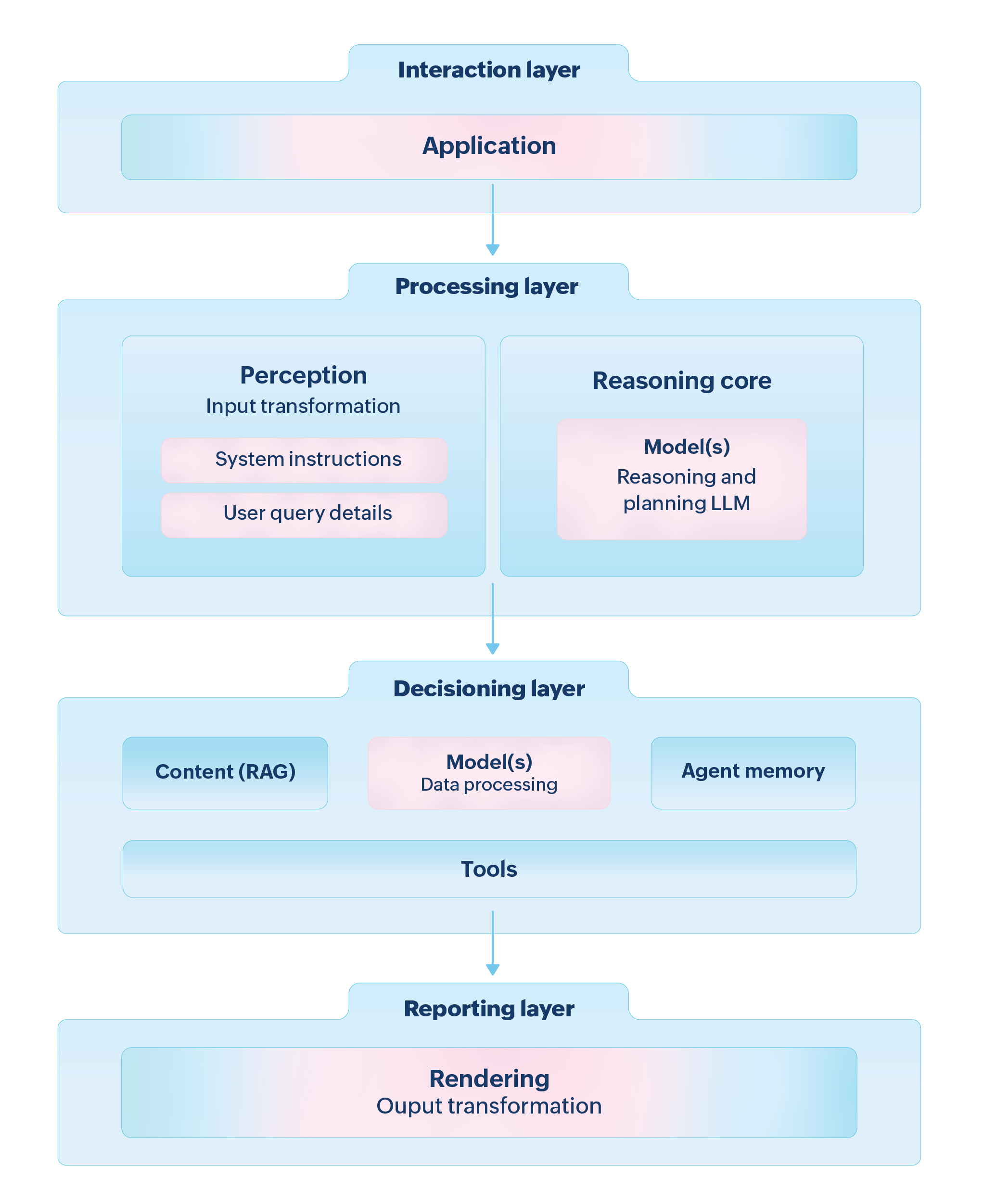

AI agents consist of many interconnected components, and examining each one individually can be tedious. Instead, it is easier to group them into four essential layers, each with a distinct role, yet working together as a unified system.

Interaction layer:

The agent is activated at the interaction layer. It might not have its own interface, but it can operate within applications, chatbots, or IT tools. The activation process can occur through direct prompts, messages, or workflows. In some cases, the activation happens automatically due to certain system events or alerts.

Processing layer:

Once activated, the agent enters the processing layer. Here, it begins transforming the input by combining system instructions with user context. The reasoning model (usually an LLM) interprets the request, identifies the final objective, and maps out a step-by-step plan to reach it. This includes determining what data the agent needs, which tools to invoke, and how it should sequence the tasks to produce a coherent outcome.

Decisioning layer:

At this stage, the agent executes the plan it has constructed. With the LLM at its core, it applies business logic and weighs different options before selecting the best course of action. This often involves retrieving data from internal or external sources, calling APIs, or invoking specialized tools. The decisioning layer is where the agent converts abstract reasoning into concrete actions that drive the task toward completion.

Reporting layer:

Finally, in the reporting layer, the agent compiles the output, formats it, and relays it back to the interaction layer. Depending on the use case, these results could appear as dashboards, reports, tickets, or direct responses within an application.
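The four layers can be sketched as a simple pipeline of functions. Everything here is illustrative: the function names mirror the layer names, the fixed plan stands in for what a reasoning model would produce, and the entries in `TOOLS` are hypothetical placeholders for real APIs or data sources.

```python
def interaction_layer(event):
    """Accept a prompt, message, or system event and normalize it."""
    return {"source": event.get("source", "prompt"), "text": event["text"].strip()}

def processing_layer(request, system_instructions):
    """Combine instructions with user context and produce a step-by-step plan.

    A real agent would ask an LLM for this plan; a fixed plan stands in here.
    """
    return {
        "objective": request["text"],
        "instructions": system_instructions,
        "steps": ["lookup_status", "summarize"],
    }

def decisioning_layer(plan, tools):
    """Execute each planned step by invoking the matching tool."""
    results = []
    for step in plan["steps"]:
        results.append((step, tools[step](plan["objective"])))
    return results

def reporting_layer(results):
    """Format the results for display back at the interaction layer."""
    return "\n".join(f"{step}: {result}" for step, result in results)

# Hypothetical tools standing in for APIs or data sources.
TOOLS = {
    "lookup_status": lambda objective: "2 open tickets",
    "summarize": lambda objective: f"handled request '{objective}'",
}

def run_agent(event):
    """Pass one event through all four layers in order."""
    request = interaction_layer(event)
    plan = processing_layer(request, "You are an IT helpdesk agent.")
    results = decisioning_layer(plan, TOOLS)
    return reporting_layer(results)

report = run_agent({"source": "chat", "text": "  check ticket backlog "})
```

Keeping each layer as a separate function makes it easier to see where a manipulated input, plan, or tool result would enter the flow.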

This chapter has examined what AI agents are. By looking at their foundational technologies, traits, and architecture, it becomes evident that they are far more capable than traditional software systems. However, these capabilities in turn expand the attack surface.

If an attacker manipulates the agent's inputs, reasoning steps, or tool interactions, they can steer its behavior, exploiting the very autonomy that makes it valuable. AI security experts refer to this as behavioral manipulation.

Chapter 3 examines the techniques adversaries use to carry out such manipulation and the potential business impact they can cause.