Chapter 1: The agentic AI era

Imagine a world where AI agents autonomously run core business operations. These agents interact with customers, communicate with one another, and manage organizational processes without human intervention. Sounds too good to be true? AI experts do not think so. According to them, this is the next wave of technological evolution, and they are calling it agentic AI.

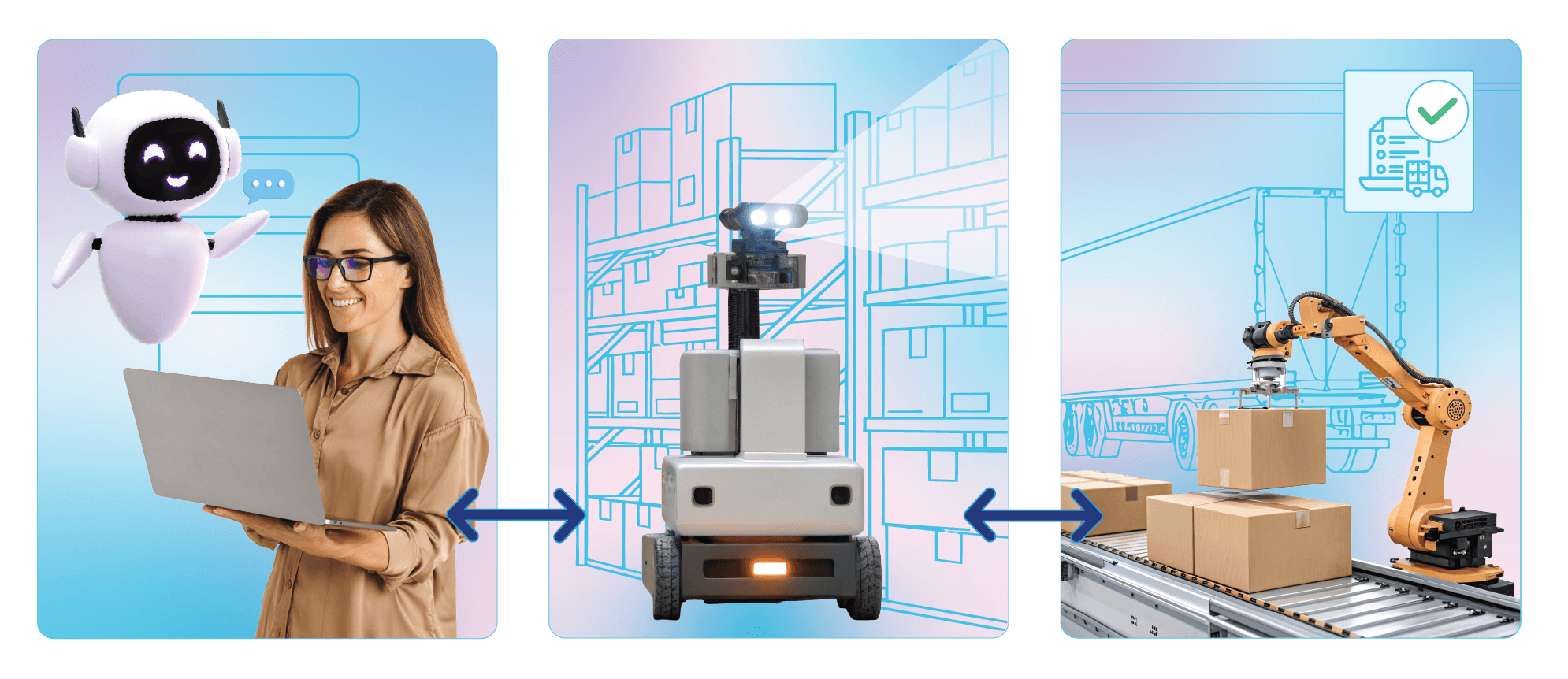

Here is what it could look like in practice. Consider an e-commerce enterprise that deploys three AI agents to streamline operations:

Agent A

A customer-facing agent that engages directly with users, understands their needs, and records their preferences.

Agent B

An operations agent that scans inventory data and verifies product availability in real time.

Agent C

A fulfillment agent that collects delivery details and coordinates with external logistics providers to complete the order.
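The hand-off between these three agents can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any real product: the `Order` record, the `INVENTORY` dictionary, and every function name are hypothetical, and plain functions stand in for what would be model-driven agents calling real inventory and logistics systems.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Order:
    """Shared record passed from one agent to the next (hypothetical schema)."""
    customer: str
    product: str
    quantity: int
    address: str = ""
    status: str = "new"
    history: List[str] = field(default_factory=list)


# Hypothetical in-memory stock; a real operations agent would query an inventory system.
INVENTORY = {"laptop": 3, "headset": 0}


def agent_a_intake(customer: str, product: str, quantity: int) -> Order:
    """Agent A: record what the customer asked for."""
    order = Order(customer, product, quantity)
    order.history.append("A: preferences recorded")
    return order


def agent_b_check_stock(order: Order) -> Order:
    """Agent B: verify that enough units of the product are available."""
    in_stock = INVENTORY.get(order.product, 0) >= order.quantity
    order.status = "available" if in_stock else "out_of_stock"
    order.history.append(f"B: {order.status}")
    return order


def agent_c_fulfill(order: Order, address: str) -> Order:
    """Agent C: collect delivery details and hand off to logistics."""
    if order.status != "available":
        order.history.append("C: skipped, nothing to ship")
        return order
    order.address = address
    order.status = "dispatched"
    order.history.append("C: handed to logistics provider")
    return order


def run_workflow(customer: str, product: str, quantity: int, address: str) -> Order:
    """Chain the three agents in the order described above."""
    order = agent_a_intake(customer, product, quantity)
    order = agent_b_check_stock(order)
    return agent_c_fulfill(order, address)
```

Calling `run_workflow("dana", "laptop", 1, "12 Main St")` walks one order through all three stages. Each agent appends to `history`, so the shared record doubles as a simple audit trail of which agent did what.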

While this sophisticated setup of AI agents operating in a coordinated manner might seem like something straight out of a sci-fi movie, it is slowly becoming a tangible reality today. Global organizations have already begun deploying AI agents to automate everyday tasks. Companies will soon integrate them into efficient agentic workflows that can handle complex business operations.

As the agentic AI era unfolds, IT leaders face a crucial question: is their enterprise ready for it? The high level of autonomy that AI agents possess also introduces new operational risks and security vulnerabilities. Conventional tools and techniques alone cannot safeguard against these threats. Instead, organizations must embrace a new security approach, one that directly addresses the risks AI agents bring into modern enterprises.

This e-book explores that approach. Developed in close collaboration with the ZLabs team, our in-house AI research and development wing, and our IT observability and ITSM teams, it outlines what AI agents are, the behavioral manipulation threats they introduce, and the security guardrails we are currently developing at ManageEngine to address them.

What's ahead

- A foundational overview of AI agents: We begin with a clear explanation of what AI agents are, focusing on the machine learning (ML) concepts behind them and their core architecture. We will also examine the defining traits of AI agents, such as autonomy, self-learning, and orchestration, and how these distinguish agents from traditional enterprise software.

- An in-depth analysis of the security challenges they introduce: Central to this discussion is behavioral manipulation, the most pressing threat vector for AI agents. Unlike traditional attacks that exploit software vulnerabilities, these tactics target the logic and reasoning of the agents themselves. We will dissect techniques such as prompt injection, data poisoning, model exploitation, and supply chain exploitation, explaining how adversaries use them and why they pose unprecedented risks to enterprise security.

- ManageEngine's approach to agentic AI security: Finally, we will provide a practitioner's perspective on securing the adoption of AI agents. This covers the enterprise-wide security practices we are designing around data governance, identity and access management, and observability. By presenting a security roadmap that centers on these three pillars, we aim to equip IT leaders with actionable insights on deploying AI agents while preserving performance, privacy, and compliance.

List of abbreviations

This e-book takes a closer look at the behavioral manipulation threats posed by agentic AI systems. Given the technical depth of the subject, readers may encounter several acronyms throughout the text. Explaining each one in-line could disrupt the flow and shift focus away from the core discussion. To keep the reading experience smooth, we have compiled a list of commonly used abbreviations for quick reference.

| Abbreviation | Term |
|---|---|
| ABAC | Attribute-based access control |
| BYOK | "Bring your own key" model |
| CNN | Convolutional neural network (a core component of AI models used for facial recognition or object detection) |
| DPoP | Demonstrating proof of possession (an OAuth 2.0 security enhancement that binds token usage to a cryptographic key controlled by the client) |
| FNN | Feedforward neural network (used in AI models for simple classification and regression tasks) |
| LoRA | Low-rank adaptation (a fine-tuning technique that lets AI vendors alter a model's behavior without modifying its core weights) |
| MCP | Model Context Protocol |
| PKCE | Proof key for code exchange (an OAuth 2.0 enhancement that protects public clients from code interception attacks) |
| RAG | Retrieval-augmented generation |
| RBAC | Role-based access control |
| RNN | Recurrent neural network (used in AI models for language translation and speech-to-text) |
| SPIFFE | Secure Production Identity Framework for Everyone (an open-source project from the Cloud Native Computing Foundation that defines identity standards for workloads) |