Chapter 4: The ManageEngine approach

Agentic AI will only be as trustworthy as the guardrails we build around it. At Zoho and ManageEngine, we design and continually refine our agents with a simple principle: autonomy must never come at the cost of security. That means treating behavior-level monitoring, auditability, and strict data minimization as core design considerations — not afterthoughts. As agents grow more capable, the real innovation will be in preventing subtle behavioral manipulation, not just detecting it.

At ManageEngine, we take a pragmatic approach to creating software products. Instead of depending on external vendors for infrastructure or technical support, we prefer to build and own the technology stack. In the past, this philosophy has led us to design our own data centers, deploy a private cloud, and even source the hardware that powers them.

With AI, our approach has been no different. While some industry observers may perceive us as slow adopters, the reality is more nuanced. We have been carefully observing advancements in AI and steadily integrating them into our product suite in a thoughtful and purpose-driven manner. Before expanding into AI agents and agentic AI, we wanted to fully understand their security implications and determine how to counter them, all while staying true to our core tenets.

Many ManageEngine products today use a bring your own key (BYOK) model to integrate with third-party LLMs without exposing customer data to external providers. However, as the scale and complexity of enterprise use cases continue to grow, especially in scenarios that demand higher levels of autonomy and tighter orchestration with internal and external systems, we knew we needed complete ownership of the foundational technology stack to deliver value without compromising data security and privacy.

While this approach of building from the ground up is undeniably more resource-intensive, we firmly believe that the long-term benefits outweigh the trade-offs. That belief is now paying off. We have released our proprietary Zia LLM, built specifically for enterprise-grade use cases and hosted entirely within our infrastructure, along with Zia AgentStudio, our no-code AI development platform with full MCP support to enable agent actions.

Now that Zia LLM and AgentStudio have laid the groundwork, we are in the early stages of applying these capabilities across our product ecosystem. We are currently developing AI agents for our IT observability and ITSM platforms, while also assessing other products to identify workflows and use cases where agents can add meaningful value.

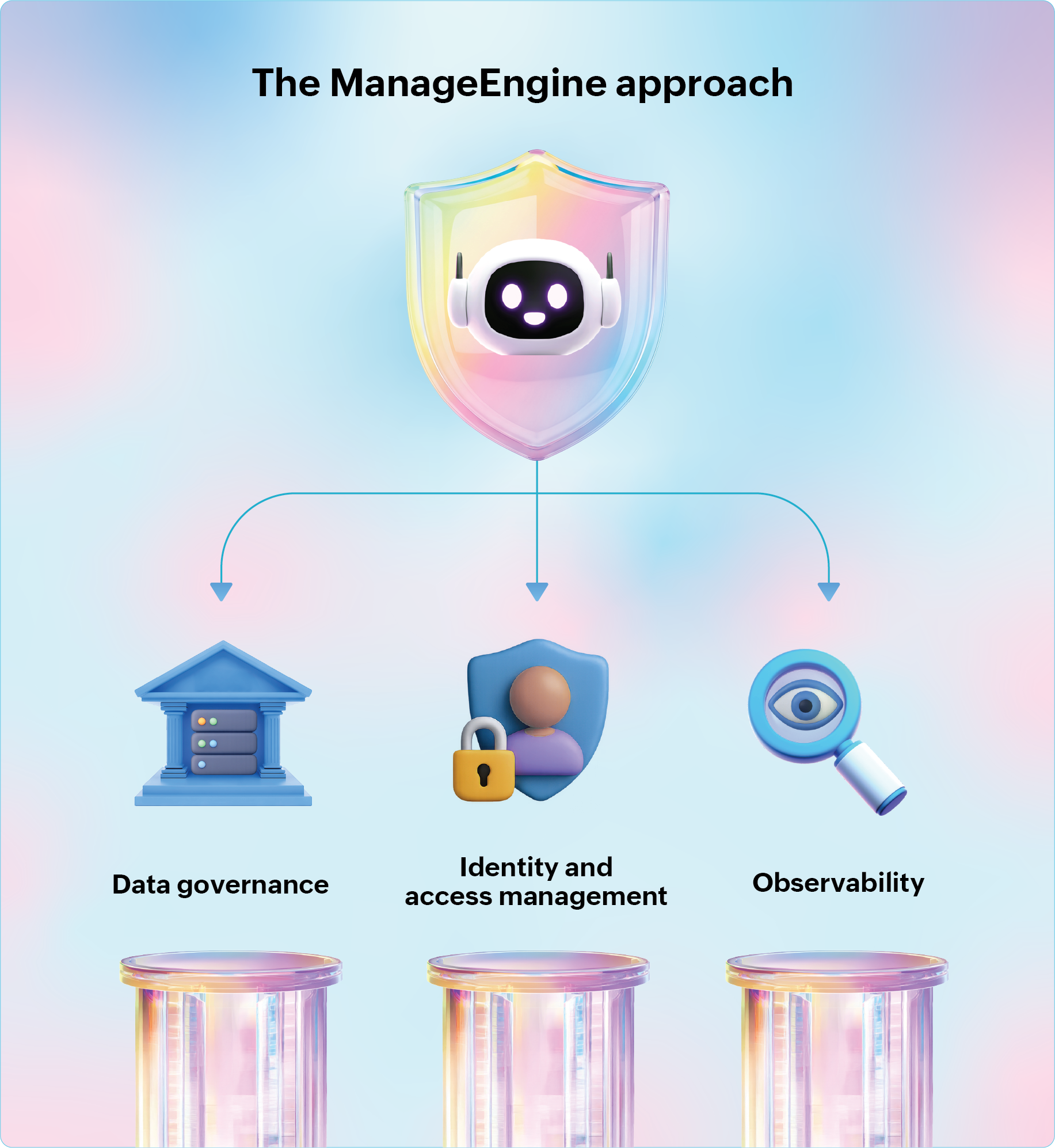

Similarly, on the security front, our AI security team is actively working to address the risks associated with behavioral manipulation. At this stage, we have identified three pillars that guide our approach: data governance, identity and access management, and observability.

These pillars shape the guardrails and best practices described in this chapter. They reflect our current direction for securing AI agents, with some practices already underway and others in early conceptual stages. As we advance real-world deployments and learn from emerging threats, these practices will continue to evolve and mature.

Data governance

The starting point for any organization looking to deploy AI systems is data governance. It is a systematic framework that guides enterprises in collecting, storing, optimizing, processing, and securing data. These steps are crucial because AI systems, especially autonomous agents, are only as effective as their training data. Scattered, inconsistent, or incomplete data can damage the agent's integrity and reliability.

From a security perspective, strong data governance becomes essential. As discussed in the data poisoning section, adversaries attempt to corrupt an agent's training or inference streams by introducing malicious datasets. Similarly, model inversion attacks can coerce an agent into leaking sensitive training data.

Data governance is integral to all that we do at ManageEngine. Our existing framework has long emphasized foundational practices such as maintaining a standardized semantic layer for data formats and enforcing a single source of truth (SSOT). As we expand into agent-driven workflows, we are now strengthening this framework with additional security-focused measures.

Here are some of the security measures we are incorporating:

Using licensed or open-source datasets for training:

We primarily rely on licensed datasets from trusted providers to train our models. These curated sources help us avoid the risks associated with unverified training data and ensure that we obtain domain-specific samples suitable for our use cases.

When licensed datasets are not available, we turn to reputable open-source communities. In such cases, we conduct security and quality checks to detect any form of malicious contamination or inconsistency before the datasets enter our pipelines.

In addition to these sources, we are building mechanisms to generate synthetic data from internal metadata. This enables us to expand our training corpus safely while maintaining strong controls over data provenance and integrity.

Conducting real-time data validation:

The real-time data entering the inference pipeline is also a common attack surface for adversaries. To counter this, we are setting up automated systems to validate incoming data.

Our systems verify the structure, quality, and provenance of the data, and quarantine anything that appears skewed, corrupted, or suspicious. These controls prevent compromised inputs from influencing the agent's behavior and help preserve both the security and reliability of deployed agents.
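As an illustration only, the validate-and-quarantine flow described above could be sketched in Python as follows. This is a minimal sketch under stated assumptions, not ManageEngine's implementation: the source names, the field schema, and the `check_record` and `validate_batch` helpers are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical allow-list of trusted data sources (illustrative names only).
TRUSTED_SOURCES = {"itsm-tickets", "network-telemetry"}

# Hypothetical expected structure: field name -> required Python type.
EXPECTED_SCHEMA = {"source": str, "device_id": str, "cpu_percent": float}


@dataclass
class ValidationResult:
    accepted: list = field(default_factory=list)
    quarantined: list = field(default_factory=list)  # (record, reason) pairs


def check_record(record: dict) -> Optional[str]:
    """Return a quarantine reason, or None if the record passes all checks."""
    # Structure: every expected field must be present with the right type.
    for name, expected_type in EXPECTED_SCHEMA.items():
        if name not in record:
            return f"missing field: {name}"
        if not isinstance(record[name], expected_type):
            return f"wrong type for field: {name}"
    # Provenance: the record must come from a known source.
    if record["source"] not in TRUSTED_SOURCES:
        return "untrusted source"
    # Quality: the value must fall inside a plausible range.
    if not 0.0 <= record["cpu_percent"] <= 100.0:
        return "value out of range"
    return None


def validate_batch(records: list) -> ValidationResult:
    """Split incoming records into accepted and quarantined sets."""
    result = ValidationResult()
    for record in records:
        reason = check_record(record)
        if reason is None:
            result.accepted.append(record)
        else:
            result.quarantined.append((record, reason))
    return result
```

Only the `accepted` records would be passed on to the agent. Quarantined records keep their reason string so that a reviewer can see why each one was held back.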

Tracing data lineage:

AI agents are often perceived as black-box systems that deliver non-deterministic outputs, which makes investigating unexpected behaviors particularly challenging. To strengthen both transparency and security, we are introducing capabilities to trace the full lineage of data used within our training and inference pipelines.

By tracking where each input originated, how it moved through internal systems, and how it transformed before producing an output, we gain the visibility needed to detect anomalous patterns and isolate compromised data sources quickly.

This level of traceability not only aids in compliance but also provides a strong forensic foundation for analyzing and responding to security incidents involving AI agents.
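One way to picture lineage tracking is as a chain of records, each pointing back to the step that produced its input. The Python sketch below is illustrative only. The `LineageLog` class, the stage names, and the use of SHA-256 content fingerprints are assumptions made for the example, not a description of our pipeline.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class LineageStep:
    step_id: int
    parent_id: Optional[int]  # None marks the point of origin
    stage: str                # e.g. "ingest", "normalize", "inference"
    source: str               # system that produced the data at this stage
    content_hash: str         # fingerprint of the data as it left this stage


def fingerprint(payload: dict) -> str:
    """Hash a payload in a key-order-independent way."""
    canonical = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


class LineageLog:
    def __init__(self) -> None:
        self._steps = []

    def record(self, stage: str, source: str, payload: dict,
               parent: Optional[LineageStep] = None) -> LineageStep:
        """Append a step that links back to the step it was derived from."""
        parent_id = parent.step_id if parent is not None else None
        step = LineageStep(len(self._steps), parent_id, stage, source,
                           fingerprint(payload))
        self._steps.append(step)
        return step

    def trace_back(self, step: LineageStep) -> list:
        """Walk from a given step back to its origin, newest first."""
        chain = [step]
        while chain[-1].parent_id is not None:
            chain.append(self._steps[chain[-1].parent_id])
        return chain
```

Given a suspicious output, `trace_back` returns every upstream step, so an investigator can see which source fed it and compare content hashes to find where the data changed.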

Enforcing schema-level data masking:

To minimize the risk of sensitive data exposure, we are in the process of restricting an agent's visibility to the structural metadata of a dataset. The agent can access the table schemas, column headers, and field definitions, while we keep the field values fully masked and encrypted.

When an agent performs a query, our systems route the actual data to the user or downstream system without revealing it to the agent itself. This approach ensures the agent can understand only the shape of the data it is working with while preventing exposure to potentially sensitive or proprietary information.

By decoupling schema awareness from data access, our goal is to reduce the risk of model inversion attacks, inadvertent data leakage, and unauthorized learning from sensitive fields.
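The separation between schema awareness and data access can be sketched as follows. This is a simplified illustration with hypothetical names (`MaskedTable`, `schema_for_agent`, `execute_for_user`). It models only the routing idea and leaves out the encryption of field values, which a real deployment would need.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Column:
    name: str
    data_type: str
    description: str


class MaskedTable:
    """Keeps rows private and exposes only structural metadata to the agent."""

    def __init__(self, name: str, columns: list, rows: list) -> None:
        self.name = name
        self._columns = columns
        self._rows = rows  # field values are never handed to the agent

    def schema_for_agent(self) -> dict:
        """What the agent is allowed to see: names, types, descriptions."""
        return {
            "table": self.name,
            "columns": [
                {"name": c.name, "type": c.data_type, "description": c.description}
                for c in self._columns
            ],
        }

    def execute_for_user(self, select: list, where_column: str, where_value) -> list:
        """Run a query plan built by the agent; results go to the user only."""
        known = {c.name for c in self._columns}
        unknown = (set(select) | {where_column}) - known
        if unknown:
            raise ValueError(f"unknown columns: {sorted(unknown)}")
        return [
            {col: row[col] for col in select}
            for row in self._rows
            if row[where_column] == where_value
        ]
```

In this sketch the agent would build a plan such as `select=["name"], where_column="dept", where_value="IT"` from the schema alone, and the executor would return the matching rows directly to the requesting user or downstream system.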

Identity and access management

After implementing a robust data governance framework, the next critical step for organizations deploying AI agents is identity and access management (IAM). As the enterprise IT management division of Zoho Corp., ManageEngine is no stranger to IAM. However, AI agents introduce complexities that conventional IAM models were not designed to handle.

Unlike human users or traditional systems, AI agents can access and share privileged data and make decisions that could have far-reaching consequences. This requires a fundamentally different approach to IAM.

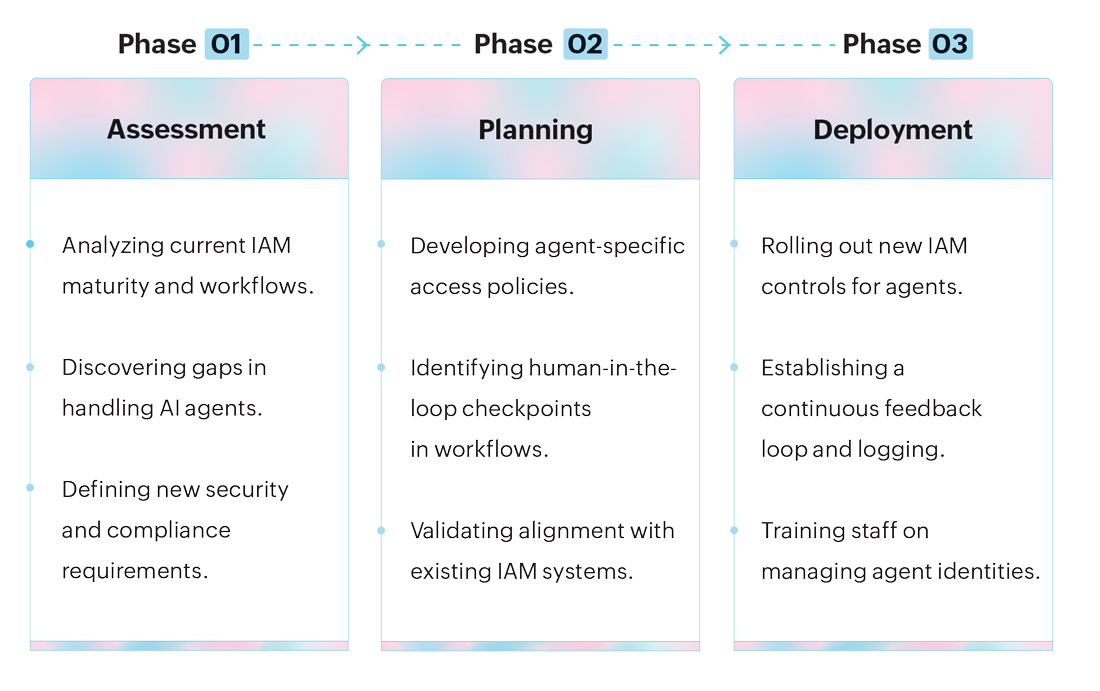

To extend our IAM framework to support AI agents, we are developing a structured three-phase plan. Here are the key practices we are in the process of adopting as part of this plan:

Creating unique agent identities:

To support secure agent operations, we are implementing measures that ensure AI agents are never treated like human users. Each agent will receive a unique, verifiable identity with ephemeral credentials, rather than static API keys or generic service accounts. We are also incorporating controls that prevent credential reuse or sharing between agents, strengthening isolation and accountability.
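To make the idea of unique identities with ephemeral credentials concrete, here is a minimal Python sketch. The `CredentialIssuer` class, the 60-second default lifetime, and the in-memory token store are assumptions for illustration only. A production system would rely on an established workload identity mechanism rather than this toy issuer.

```python
import secrets
import time
import uuid
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCredential:
    agent_id: str
    token: str
    expires_at: float


class CredentialIssuer:
    """Issues short-lived tokens, each bound to exactly one agent identity."""

    def __init__(self, ttl_seconds: float = 60.0, clock=time.time) -> None:
        self._ttl = ttl_seconds
        self._clock = clock
        self._active = {}  # token -> AgentCredential

    def register_agent(self) -> str:
        """Create a unique identity for a new agent."""
        return f"agent-{uuid.uuid4()}"

    def issue(self, agent_id: str) -> AgentCredential:
        """Mint a fresh random token that expires after the configured TTL."""
        cred = AgentCredential(agent_id, secrets.token_urlsafe(32),
                               self._clock() + self._ttl)
        self._active[cred.token] = cred
        return cred

    def verify(self, agent_id: str, token: str) -> bool:
        """Accept a token only if it is unexpired and presented by its owner."""
        cred = self._active.get(token)
        if cred is None:
            return False
        if self._clock() >= cred.expires_at:
            del self._active[token]  # expired tokens are purged on sight
            return False
        # A token presented by a different agent is rejected (no sharing).
        return cred.agent_id == agent_id
```

Because verification checks the presenting agent against the token's owner, a credential copied from one agent to another fails, which is the isolation property the paragraph above describes.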

Implementing life cycle management and granular categorization:

We are in the process of establishing a structured life cycle model for each of our agents. Under this model, any agent deployed internally or embedded within a product will have its entire life cycle documented, from creation to decommission.

Additionally, we are classifying agents along granular dimensions such as interaction method (API versus GUI), degree of autonomy, and ownership. This methodical classification will enable us to align and reconfigure access controls with greater precision as our agent ecosystem grows.

Integrating human-in-the-loop checkpoints:

Even though AI agents are autonomous, we believe human oversight is essential. We assign each agent a dedicated custodian responsible for periodic access reviews and recertification. Access tokens issued to agents will be time-bound and mapped back to this custodian for accountability.

Additionally, for mission-critical tasks, we are implementing "out-of-band" human authentication methods, such as push notifications or QR code approvals, to ensure explicit human validation before the agent executes high-impact actions. To operationalize these human-in-the-loop checkpoints, we are developing a "custom risk scoring logic" that categorizes every action an agent can perform based on its security impact.

For example, in a database management agent, the risk-scoring logic might classify a simple read operation as low-risk, while write or delete operations fall into the high-risk category. Whenever the agent encounters a high-risk action, it is designed to automatically pause and trigger a human authentication step before proceeding.
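The database example above can be sketched in a few lines of Python. The action names, the two-level risk scale, and the `request_human_approval` callback are hypothetical. The callback stands in for an out-of-band step such as a push notification.

```python
from enum import Enum
from typing import Callable


class Risk(Enum):
    LOW = "low"
    HIGH = "high"


# Hypothetical risk table for a database management agent.
ACTION_RISK = {
    "read": Risk.LOW,
    "write": Risk.HIGH,
    "delete": Risk.HIGH,
}


def classify(action: str) -> Risk:
    """Score an action; anything unrecognized is treated as high risk."""
    return ACTION_RISK.get(action, Risk.HIGH)


def run_action(action: str,
               execute: Callable[[str], str],
               request_human_approval: Callable[[str], bool]) -> str:
    """Pause on high-risk actions until a human approves or denies them."""
    if classify(action) is Risk.HIGH and not request_human_approval(action):
        return "blocked: approval denied"
    return execute(action)
```

Defaulting unknown actions to high risk is a deliberate choice in this sketch: a newly added capability reaches a human reviewer until someone explicitly scores it.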

Extending the principle of least privilege to agents:

Following the principle of least privilege, we are granting agents only the minimum set of permissions required to perform their tasks.

To achieve this, we plan to rely on short-lived tokens, explicit human approval for high-risk operations, and just-in-time (JIT) access. While these measures might impose limits on agent functionality, they significantly reduce the attack surface for behavioral manipulation.

| Property | Human Identity | Agent Identity |
|---|---|---|
| Lifespan | Years (tied to employment) | Ephemeral (seconds to minutes, tied to a task) |
| Provisioning | Manual / HR-driven onboarding | JIT provisioning via policy |
| Authentication | Passwords, MFA, SSO, passkeys | Advanced authentication mechanisms (SPIFFE, PKCE, DPoP) |
| Access Scope | Role- or attribute-based (RBAC / ABAC) | Task-specific with delegated permissions |
| Auditing | Session-level activity logs | Delegation-chain logs with full traceability |
| Life cycle management | Rarely revisited (deprovisioning on exit/separation) | Continuous monitoring, automated expiry and revocation |
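As a rough illustration of task-specific, just-in-time access for agents, the following Python sketch grants a permission set per task and lets it lapse automatically. The `JitAccessBroker` class, the policy table, and the 120-second default lifetime are hypothetical choices made for the example.

```python
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    agent_id: str
    task_id: str
    permissions: frozenset
    expires_at: float


class JitAccessBroker:
    """Hands out the minimum permissions a task type needs, for a short time."""

    def __init__(self, policy: dict, clock=time.time) -> None:
        self._policy = policy  # task type -> iterable of allowed permissions
        self._clock = clock

    def grant(self, agent_id: str, task_id: str, task_type: str,
              ttl_seconds: float = 120.0) -> Grant:
        """Provision access from policy at the moment the task starts."""
        allowed = self._policy.get(task_type)
        if allowed is None:
            raise PermissionError(f"no policy for task type: {task_type}")
        return Grant(agent_id, task_id, frozenset(allowed),
                     self._clock() + ttl_seconds)

    def is_allowed(self, grant: Grant, permission: str) -> bool:
        """A permission holds only while the grant is live and in scope."""
        return self._clock() < grant.expires_at and permission in grant.permissions
```

Nothing persists after expiry in this sketch, so an agent that needs access again has to request a new grant, which keeps standing privileges at zero.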

Observability

Observability enables monitoring of the internal and external states of an AI agent to detect anomalies or deviations in its telemetry data. While robust data governance and access controls can help organizations reduce exposure to attacks like data poisoning during training and prompt injection, comprehensive observability is what enables them to catch subtler and more complex manipulation tactics like learning-stream poisoning, prompt injection, model inversion and extraction, and even certain supply chain exploits.

At ManageEngine, we already possess extensive observability capabilities for traditional deterministic software applications. However, AI agents are non-deterministic, which makes monitoring them far more challenging. As we work on expanding observability for agent-driven workflows, we have identified four fundamental questions that guide our approach: what breaks, what to track, what signals a problem, and what the debugging focus should be.

| Question | Traditional software application | AI agents |
|---|---|---|
| What breaks? | Entire application or application components | The reasoning model, decision chain, or connected tools |
| What to track? | Logs, uptime, error rates, latency | Prompts, decision paths, response quality, inference latency, API and LLM calls, failed tool calls, logs (user interaction and LLM interaction), traces, etc. |
| What indicates a problem? | Error codes, application crashes, slow responses | Model drift, rogue actions |
| What should be the debugging focus? | Inspecting deterministic logic and infrastructure vulnerabilities | Tracing reasoning and decision pathways |

Based on these insights, here are the practices we are building into our observability framework for AI agents:

Monitoring model drift for hidden tampering:

Model drift is not just a performance issue. In the context of AI agents, it can be a sign of data poisoning or subtle supply chain tampering. For example, if a threat-hunting agent that consistently flagged suspicious login attempts suddenly stops doing so, it might indicate that its learned patterns have been altered.

We are working on enhancing our observability systems to incorporate performance benchmarks and correlate them with shifts in response accuracy and behavior. This will help us detect deviations early and take corrective actions like rolling back or retraining before attackers can exploit them.
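A simple way to picture this kind of benchmark comparison is shown below. It is a minimal sketch, assuming a fixed benchmark of labeled samples and a hypothetical tolerance of five percentage points. Real drift detection would use richer metrics than a single detection rate.

```python
def detection_rate(flags: list, labels: list) -> float:
    """Share of known-malicious benchmark samples that the agent flagged."""
    positives = [flag for flag, label in zip(flags, labels) if label]
    if not positives:
        raise ValueError("benchmark contains no malicious samples")
    return sum(positives) / len(positives)


def check_drift(baseline_rate: float, current_rate: float,
                tolerance: float = 0.05) -> dict:
    """Flag drift when detection falls more than `tolerance` below baseline."""
    drop = baseline_rate - current_rate
    return {"drop": drop, "drifted": drop > tolerance}
```

Using the threat-hunting example, if the agent flagged every suspicious login in the benchmark last month but only half of them today, `check_drift(1.0, 0.5)` reports a drop of 0.5 and marks the model as drifted, which would be the cue to investigate, roll back, or retrain.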

Tracking response quality as a manipulation signal:

We are updating our monitoring systems to continuously observe the quality of agent responses across sensitive use cases. A rise in hallucinations or unusual changes in tone is not dismissed as a usability flaw. Instead, we treat these deviations as potential signs of compromised data sources, adversarial prompts, or hidden backdoors. This proactive stance will enable us to intervene before such responses lead to data leakage or compliance issues.

Measuring inference latency for probing attacks:

Agents slowing down might not always be due to system load. Prolonged or abnormal inference latency can also signal model inversion attempts, where attackers submit repeated prompts to reconstruct training data. We are enhancing our latency-monitoring capabilities so that unexplained slowdowns automatically trigger an investigation into possible adversarial activity.
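As an illustrative sketch, unexplained slowdowns can be surfaced by comparing recent latencies against a baseline. The z-score method and the threshold of 3.0 used here are assumptions chosen for simplicity, not a statement of how our monitoring works.

```python
from statistics import mean, stdev


def latency_anomalies(baseline_ms: list, recent_ms: list,
                      z_threshold: float = 3.0) -> list:
    """Return recent latencies that sit far above the baseline distribution."""
    if len(baseline_ms) < 2:
        raise ValueError("need at least two baseline samples")
    mu = mean(baseline_ms)
    sigma = stdev(baseline_ms)
    if sigma == 0:
        raise ValueError("baseline has no variance; cannot compute z-scores")
    # Only slowdowns are of interest, so the check is one-sided.
    return [x for x in recent_ms if (x - mu) / sigma > z_threshold]
```

Each value returned would open an investigation rather than prove an attack. A latency spike is a prompt to check the corresponding request logs for repeated probing prompts.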

Examining failed tool and API calls for exploitation attempts:

Failed tool and API calls are often dismissed as integration or infrastructure problems. However, in the context of AI agents, they might indicate vulnerabilities or exploitation attempts.

For instance, a failed API call could be the result of a network overload triggered by an adversary during a data exfiltration attempt. Or, it might stem from a weak integration configuration that adversaries could later exploit for unauthorized access. We are refining our monitoring tools to isolate these incidents quickly and trigger alerts that prompt deeper security investigation and remediation.
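One hedged way to turn failed calls into a security signal is to count failures per agent and tool inside a sliding time window. The `FailureMonitor` class, the 60-second window, and the threshold of three failures below are hypothetical values for illustration.

```python
from collections import defaultdict, deque


class FailureMonitor:
    """Raises an alert when one agent keeps failing against the same tool."""

    def __init__(self, window_seconds: float = 60.0, threshold: int = 3) -> None:
        self._window = window_seconds
        self._threshold = threshold
        self._failures = defaultdict(deque)  # (agent_id, tool) -> timestamps

    def record_failure(self, agent_id: str, tool: str, timestamp: float) -> bool:
        """Record a failed call; return True when an alert should fire."""
        events = self._failures[(agent_id, tool)]
        events.append(timestamp)
        # Drop failures that have aged out of the window.
        while events and timestamp - events[0] > self._window:
            events.popleft()
        return len(events) >= self._threshold
```

A single failure stays quiet in this sketch, while a burst from the same agent against the same tool is escalated for security review instead of being filed as an integration glitch.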

Expanding log collection for forensic depth:

Conventional logs capture access events, authorization details, data movement, timestamps, and metadata. For AI agents, we are broadening our observability framework to include four additional categories:

- User interaction logs

- LLM interaction logs

- Tool execution logs

- Decision-making logs

By maintaining these logs, we aim to create a forensic baseline that helps correlate suspicious activity across multiple dimensions.

| Name | Description | The security angle | Example |
|---|---|---|---|
| User interaction logs | Records all queries, inputs, and interactions between users and the agent. | Helps detect repeated adversarial prompting, malicious rephrasing, or attempts to slip in harmful instructions. | A user repeatedly modifies their queries until a hidden instruction causes the agent to disclose sensitive data. |
| LLM interaction logs | Captures all exchanges between the agent and the underlying LLM. | Enables detection of malicious prompt chaining or unusual token spikes that might indicate extraction attempts. | An attacker injects hidden instructions mid-conversation, causing the agent to go rogue. |
| Tool execution logs | Tracks which external tools/APIs an agent calls, along with the commands issued and results returned. | Identifies unauthorized or abnormal tool usage and failed or repeated API calls. | An agent repeatedly attempting unauthorized API calls, suggesting an attacker has gained access. |
| Decision-making logs | Documents how the agent chose a particular path (selected actions, scoring, tool choices, etc.). | Provides forensic evidence of bias, backdoor, or rogue behavior by explaining why the agent chose a certain path. | An agent consistently prioritizing a malicious data source, indicating tampered training or poisoned instructions. |
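To show how the four log categories might share a common structure, here is a small Python sketch. The field names, the `request_id` correlation key, and the JSON layout are assumptions for illustration and do not describe an existing ManageEngine log format.

```python
import json
from dataclasses import dataclass
from enum import Enum


class LogCategory(Enum):
    USER_INTERACTION = "user_interaction"
    LLM_INTERACTION = "llm_interaction"
    TOOL_EXECUTION = "tool_execution"
    DECISION_MAKING = "decision_making"


@dataclass(frozen=True)
class AgentLogEntry:
    request_id: str  # shared key that ties entries from one request together
    agent_id: str
    category: LogCategory
    timestamp: float
    detail: dict

    def to_json(self) -> str:
        """Serialize the entry as one JSON line for a log pipeline."""
        return json.dumps({
            "request_id": self.request_id,
            "agent_id": self.agent_id,
            "category": self.category.value,
            "timestamp": self.timestamp,
            "detail": self.detail,
        }, sort_keys=True)


def correlate(entries: list, request_id: str) -> list:
    """Collect every entry for one request, across categories, in time order."""
    related = [e for e in entries if e.request_id == request_id]
    return sorted(related, key=lambda e: e.timestamp)
```

With a shared `request_id`, an analyst can line up what the user asked, what the LLM was sent, which tools ran, and why the agent chose them, all for the same request.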

Recording detailed traces of each request:

While logs capture and record each event in isolation, traces map the full decision journey of the agent as it processes a request. We are working on expanding our tracing mechanisms to chronologically record everything from the initial user input and LLM calls to the final output.

This level of detail will enable our security teams to not only see what happened, but also how and why it unfolded. For instance, if a compromised plug-in injects false data mid-pipeline, traces reveal the exact insertion point, the agent's reaction, and the downstream effects. These enhancements are expected to accelerate root-cause analysis and help identify security loopholes before attackers exploit them.
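The compromised plug-in scenario can be illustrated with a minimal trace structure. This sketch uses hypothetical names (`Trace`, `Span`, `first_appearance`) and plain dictionaries for step inputs and outputs. It is not tied to any particular tracing library.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class Span:
    step: int        # position in the chronological sequence
    component: str   # e.g. "user_input", "llm_call", "plugin:enrich"
    input_data: dict
    output_data: dict


@dataclass
class Trace:
    request_id: str
    spans: list = field(default_factory=list)

    def record(self, component: str, input_data: dict, output_data: dict) -> Span:
        """Append the next step of the request in chronological order."""
        span = Span(len(self.spans), component, input_data, output_data)
        self.spans.append(span)
        return span

    def first_appearance(self, key: str, value) -> Optional[Span]:
        """Find the earliest step that introduced key=value into the flow."""
        for span in self.spans:
            introduced = (span.output_data.get(key) == value
                          and span.input_data.get(key) != value)
            if introduced:
                return span
        return None

    def downstream_of(self, span: Span) -> list:
        """Every step that ran after the given one."""
        return self.spans[span.step + 1:]
```

If a false value shows up in the final output, `first_appearance` points to the step where it entered, and `downstream_of` lists the steps that may have been affected by it.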

The future

Gartner predicts that by 2028, 33% of enterprise applications will feature agentic AI capabilities, and at least 15% of routine work decisions will be autonomously executed by AI agents. Enterprises that deploy and scale AI agents will gain a key competitive edge in the near future. Yet, it is essential to approach this with cautious optimism.

History shows that every major technological breakthrough has gone through cycles of mass adoption followed by significant drop-offs and course corrections. While organizational immaturity often plays a crucial role, the more enduring concern has always been security and privacy. Take the early days of cloud computing. Enterprises were vying to be early adopters of the public cloud, eager to tap into its vast potential.

Over time, many realized that modernizing legacy systems was more complex than initially anticipated, and the security trade-offs were far from negligible. After years of mounting costs and unaddressed vulnerabilities, businesses recalibrated, embracing hybrid and private cloud models.

Will agentic AI follow the same trajectory? Only time will tell. The explosive entry of generative AI tools and the productivity gains they deliver suggest promising potential. Yet, as this e-book has shown, today's AI agents are acutely vulnerable to behavioral manipulation.

Cyber adversaries are already weaponizing the very AI technologies that power these agents. Compounding this risk is the human factor, still the greatest Achilles' heel for most enterprises. For organizations exploring AI agents today, vigilance is non-negotiable.

Even long-standing cybersecurity tools and strategies are insufficient against the unique manipulation threats agents face. Enterprises must fundamentally revamp their security frameworks to integrate safeguards across every layer, from data governance and identity controls to observability and human intervention.

Not every organization will build its own LLM as ManageEngine has with Zia. Small and medium-sized businesses, in particular, will inevitably rely on third-party LLM vendors. That reliance, however, must be paired with rigorous vetting, multi-layered testing, and continuous monitoring to ensure no hidden weaknesses creep in. Regardless of size, stringent IAM practices and comprehensive observability are indispensable for compliance and security.

The future of agentic AI is not about blind adoption but about responsible integration. Enterprises that balance innovation with security will be the ones that reap the benefits of AI agents without falling prey to their risks.

About

As the IT management division of Zoho Corporation, ManageEngine prioritizes flexible solutions that work for all businesses, regardless of size or budget. ManageEngine crafts comprehensive IT management software with a focus on making your job easier. Our 120+ award-winning products and free tools cover everything your IT needs. From network and device management to security and service desk software, we’re bringing IT together for an integrated, overarching approach to optimize your IT.

About the author

Arjun has nearly a decade of experience in content marketing, with a focus on long-form content. He has worked across multiple roles, building expertise in translating complex technology concepts into clear and well-structured narratives. His work involves researching complex topics and presenting them as thought leadership content for IT leaders. Outside of work, Arjun is an avid reader. He’s also a sports enthusiast who is always game for a match, a discussion, or watching a game on television.