Enterprise data storage grows rapidly in volume and density year after year, but not all of this data is business-critical. According to the Association for Intelligent Information Management (AIIM), over 70% of the data stored by an organization is redundant.

Of all redundant data, duplicate files are the most difficult to handle, for a few reasons:

- Duplicate file accumulation: Duplicate files accumulate rapidly in file storage environments. This is unavoidable and results from factors ranging from copying folders across drives to taking multiple backups.

- Identification of duplicate copies: Duplicate copies of files are incredibly easy to create, but finding them across multiple storage environments is tedious.

- Managing duplicate files: Redundant data takes up valuable space and must be deleted, or at least moved to secondary storage. Considering the volume of duplicate files, this is easier said than done.

How DataSecurity Plus can help find and delete duplicate files

ManageEngine DataSecurity Plus helps analyze file metadata and report on duplicate files across Windows file servers, SMB file servers, and workgroup environments. It also allows you to delete duplicate copies directly from the dashboard for quick and easy storage optimization. Here’s what you can do:

- Find duplicate files.

- Delete duplicate files.

- Track duplicate file deletions.

Finding duplicate files using DataSecurity Plus

To configure DataSecurity Plus to find duplicate files:

- Download and install DataSecurity Plus.

- Open the DataSecurity Plus console.

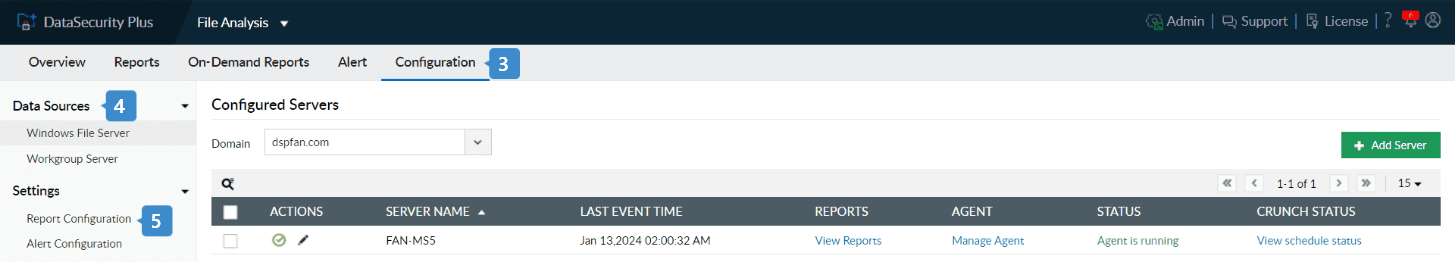

- Navigate to File Analysis > Configuration.

- Under Data Sources, select the type of storage (Windows File Server or Workgroup Server) that you want to add.

- To add Windows file servers:

  - Select Windows File Server and click + Add Server in the top-right corner.

  - Select the Domain.

  - Click the + symbol next to Select Server. Choose the servers you want to add, and click Select.

  - Choose whether you want to analyze all drives or only specific files on the selected server(s).

  - Click Install Agent and Finish.

- To add Workgroup servers:

  - Select Workgroup Server, and click + Add Workgroup in the top-right corner.

  - Click the + next to the Select Server field, and select the server you want to audit.

  - Select the objects to be monitored:

    - To audit shares: Choose one or more shares to be audited.

    - To audit subfolders, local folders, or local files: Enter their respective paths.

  - Click Install Agent and Finish.


- Go to File Analysis > Configuration > Settings > Report Configuration, and click the edit icon next to Duplicate Files Report.

- Choose the parameters by which you want to pinpoint duplicate files. You can choose from Same size, Same name, and Same last modification time.

Tip: For the most accurate findings, we recommend selecting all three parameters.

- Click Save.

DataSecurity Plus can now find and report on duplicate files across the configured servers. Under the Storage Overview tab, you can view a breakdown of the detected duplicate files by file type, along with how much storage space they take up.
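The matching logic behind the three report parameters (same size, same name, same last modification time) can be illustrated with a short sketch. This is not DataSecurity Plus's implementation; it is a hypothetical Python example that assumes file metadata has already been collected into `FileRecord` objects (a name made up for this example). Files are grouped by the selected parameters, and any group with more than one member is treated as a duplicate set. Names are compared case-insensitively because Windows file names are case-insensitive; that choice is an assumption of the sketch.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class FileRecord:  # hypothetical container for collected file metadata
    path: str
    name: str
    size: int     # bytes
    mtime: float  # last modification time, epoch seconds


def find_duplicates(records, same_size=True, same_name=True, same_mtime=True):
    """Group records that match on every selected parameter."""
    if not (same_size or same_name or same_mtime):
        raise ValueError("select at least one matching parameter")
    groups = defaultdict(list)
    for r in records:
        # Unselected parameters contribute None, so they never split a group.
        key = (
            r.size if same_size else None,
            r.name.lower() if same_name else None,  # assumption: case-insensitive names
            r.mtime if same_mtime else None,
        )
        groups[key].append(r)
    # Only keys shared by two or more files represent duplicate sets.
    return [g for g in groups.values() if len(g) > 1]


if __name__ == "__main__":
    files = [
        FileRecord("/share/report.docx", "report.docx", 52_000, 1_700_000_000.0),
        FileRecord("/backup/Report.docx", "Report.docx", 52_000, 1_700_000_000.0),
        FileRecord("/share/notes.txt", "notes.txt", 1_200, 1_700_000_500.0),
    ]
    for group in find_duplicates(files):
        print([r.path for r in group])  # prints ['/share/report.docx', '/backup/Report.docx']
```

Selecting all three parameters, as the tip recommends, makes the grouping key stricter, so two unrelated files that merely share a name or a size are less likely to be reported as duplicates.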

Deleting duplicate files in DataSecurity Plus

To delete detected duplicate files, follow the steps below:

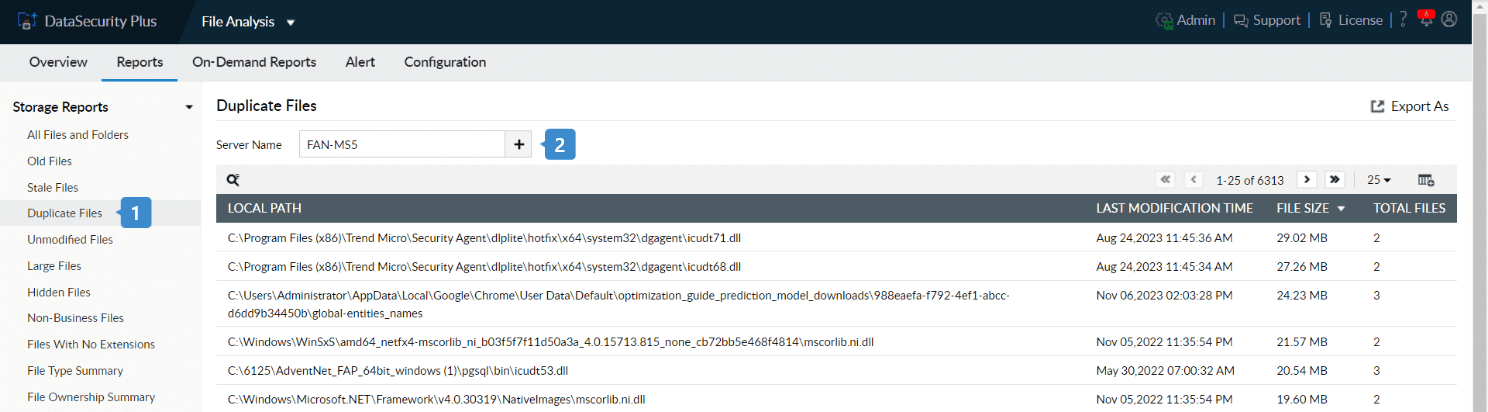

- Go to File Analysis > Reports > Storage Reports > Duplicate Files.

- Click the + next to the Server Name field.

- In the pop-up, choose the server in which you want to find duplicate files.

- Click Select.

- Once loaded, the report shows the files that have multiple copies in the selected server, along with the number of duplicate copies.

- Click the report entry for a file whose duplicate copies you want to delete.

- In the pop-up, check the copies you want to delete, and click the delete icon.

- Click Yes in the confirmation pop-up.

Tracking duplicate file deletions

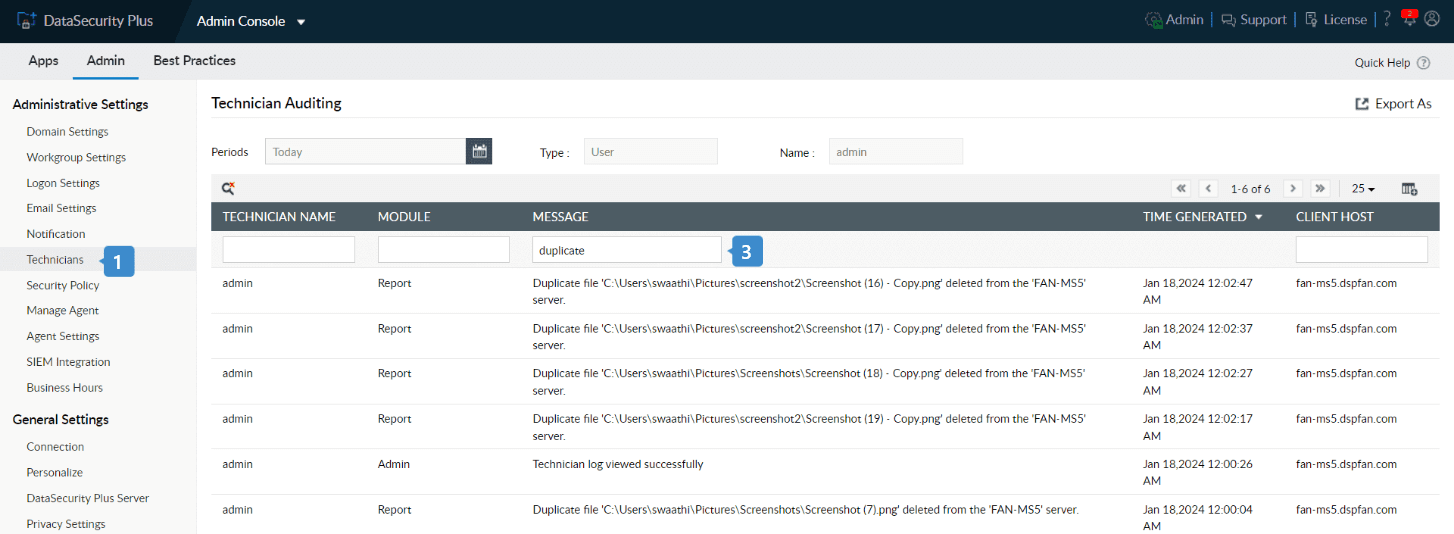

When a duplicate file is deleted with DataSecurity Plus, the event is recorded in the technician audit log.

To view this, follow the steps below:

- Navigate to Admin Console > Admin > Administrative Settings > Technicians.

- Under the Audit Log column, click View.

- The audit log lists all actions carried out by the technician. To list only duplicate file deletions:

- Click the search icon.

- Under Message, type duplicate and hit Enter.

This lets you view details on who deleted which duplicate file, when, and from where.

Frequently asked questions


1. How do I identify a duplicate file?

To identify duplicate files reliably, analyze the actual file content rather than just the file names. Compare file sizes, then generate content-based hash values to determine whether files are identical. If both the file size and the hash value match, the files are considered duplicates, even if they are stored in different folders or locations within the file system.
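A minimal Python sketch of the size-then-hash approach follows. It assumes direct or mounted file system access and uses SHA-256 from the standard library's `hashlib` as the content hash; the function names are hypothetical, and the choice of hash is an assumption, not necessarily what any particular product uses. Files are hashed only when their size collides with another file's, which avoids reading most of the data.

```python
import hashlib
import os
from collections import defaultdict


def file_digest(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of a file, reading it in 1 MiB chunks."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def find_content_duplicates(root):
    """Return lists of paths under root whose size and SHA-256 hash both match."""
    # Pass 1: bucket regular files by size (cheap metadata lookup).
    by_size = defaultdict(list)
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            if os.path.isfile(path) and not os.path.islink(path):
                by_size[os.path.getsize(path)].append(path)

    # Pass 2: hash only files that share a size with at least one other file.
    duplicates = []
    for paths in by_size.values():
        if len(paths) < 2:
            continue  # a file with a unique size cannot have a duplicate
        by_hash = defaultdict(list)
        for path in paths:
            by_hash[file_digest(path)].append(path)
        duplicates.extend(g for g in by_hash.values() if len(g) > 1)
    return duplicates
```

For example, calling `find_content_duplicates("/mnt/share")` on a hypothetical mount point returns one list per set of identical files, regardless of which folders the copies live in.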


2. What are the financial implications of storing duplicate files?

While cloud storage offers virtually unlimited capacity, storing redundant files can drive up costs unnecessarily. Identifying and managing duplicates helps optimize storage and reduce expenses. Use our ROT data calculator to get a personalized estimate of how much you could save through better storage practices.
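The underlying arithmetic is simple: keeping one copy of a file that exists in n identical copies frees (n − 1) × file size. The hypothetical sketch below sums that across duplicate groups and multiplies by a flat per-GiB monthly price. The $0.02 price in the example is made up for illustration, and real cloud pricing is often tiered, so treat the result as a rough estimate only.

```python
GIB = 1024 ** 3  # bytes per GiB


def reclaimable_bytes(groups):
    """Bytes freed by keeping one copy per group of identical files.

    groups: iterable of (file_size_bytes, copy_count) pairs.
    """
    return sum(size * (copies - 1) for size, copies in groups if copies > 1)


def monthly_savings(bytes_freed, price_per_gib_month):
    """Estimated monthly saving at a flat (hypothetical) per-GiB price."""
    return bytes_freed / GIB * price_per_gib_month


if __name__ == "__main__":
    groups = [(2 * GIB, 3), (512 * 1024 ** 2, 5)]  # hypothetical scan results
    freed = reclaimable_bytes(groups)
    print(freed / GIB)                             # 6.0
    print(round(monthly_savings(freed, 0.02), 2))  # 0.12
```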


3. Can duplicate files lead to security lapses?

Yes. Duplicate files can increase the risk of unauthorized access or data leakage, as multiple copies may exist in different locations with varying permissions. Identifying and managing duplicates helps maintain tighter control over sensitive data and strengthens overall file security.


4. What are some best practices for managing duplicate files?

To effectively manage duplicate data, organizations should run regular duplicate scans, review file ownership and access patterns before deletion, and prioritize cleanup in high-growth or high-usage areas. It's also important to retain or archive duplicates when required for business or compliance reasons. Performing cleanup in phases, maintaining recent backups, and auditing all deletion activities help ensure safe and controlled duplicate data management.


5. What should be reviewed before permanently deleting duplicate files?

Before deleting duplicate files, organizations should review file ownership, last accessed and modified timestamps, file location, and business relevance. Validating that a retained primary copy exists and confirming recent backups help reduce the risk of accidental data loss during cleanup.
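As a hypothetical illustration of the "retained primary copy" check, the sketch below picks one copy to keep from each duplicate group and refuses to proceed unless that copy still exists. Keeping the most recently modified copy is only an example policy assumed for this sketch, and the `Candidate` type is made up. The code deletes nothing; it only produces a plan, since ownership, access patterns, location, and business relevance would still need to be reviewed.

```python
import os
from dataclasses import dataclass


@dataclass
class Candidate:  # hypothetical record for one copy in a duplicate group
    path: str
    mtime: float  # last modified, epoch seconds


def plan_cleanup(group):
    """Split one duplicate group into (copy_to_keep, copies_to_review).

    Example policy (an assumption): keep the most recently modified copy.
    """
    if len(group) < 2:
        return (group[0] if group else None), []
    ordered = sorted(group, key=lambda c: c.mtime, reverse=True)
    return ordered[0], ordered[1:]


def safe_to_delete(keep, path_exists=os.path.exists):
    """Proceed only if the retained primary copy is still present."""
    return keep is not None and path_exists(keep.path)


if __name__ == "__main__":
    group = [Candidate("/share/plan.xlsx", 10.0), Candidate("/backup/plan.xlsx", 30.0)]
    keep, review = plan_cleanup(group)
    if safe_to_delete(keep):
        print("keep:", keep.path)
        for c in review:
            print("review before deleting:", c.path)
```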