Chapter 4: Sustainable infrastructure design

In the 1940s, the world’s first data center, ENIAC, consumed about 150 kilowatts of power. For comparison, that’s enough power to run about 100–150 modern desktop computers continuously, making ENIAC not only groundbreaking but also one of the earliest examples of high-energy computing infrastructure. We’ve come a long way since then, but infrastructure design continues to be one of the key determinants in how environmentally responsible technology can be.

Sustainable data center management

A green data center is one that minimizes its environmental impact by reducing energy consumption, lowering carbon emissions, and optimizing resource use. The goal is to ensure efficient operation while being environmentally responsible.

Data centers are an environmental concern due to their substantial energy consumption and associated greenhouse gas emissions. Recent estimates from the World Economic Forum indicate that data centers account for approximately 2% of global electricity use and nearly 1% of worldwide greenhouse gas emissions. To put that in perspective, these emissions are in the same range as those of the global aviation industry, which is commonly estimated to produce 2–3% of global CO2 emissions.

This rising consumption, exacerbated by AI advancements, poses a serious challenge to global sustainability efforts. As organizations work towards carbon neutrality, investing in green data centers becomes a critical piece of the puzzle. This means adopting practices such as using energy-efficient cooling systems, running on renewable energy, using closed-loop water systems, and building the data center with recycled or sustainable materials.

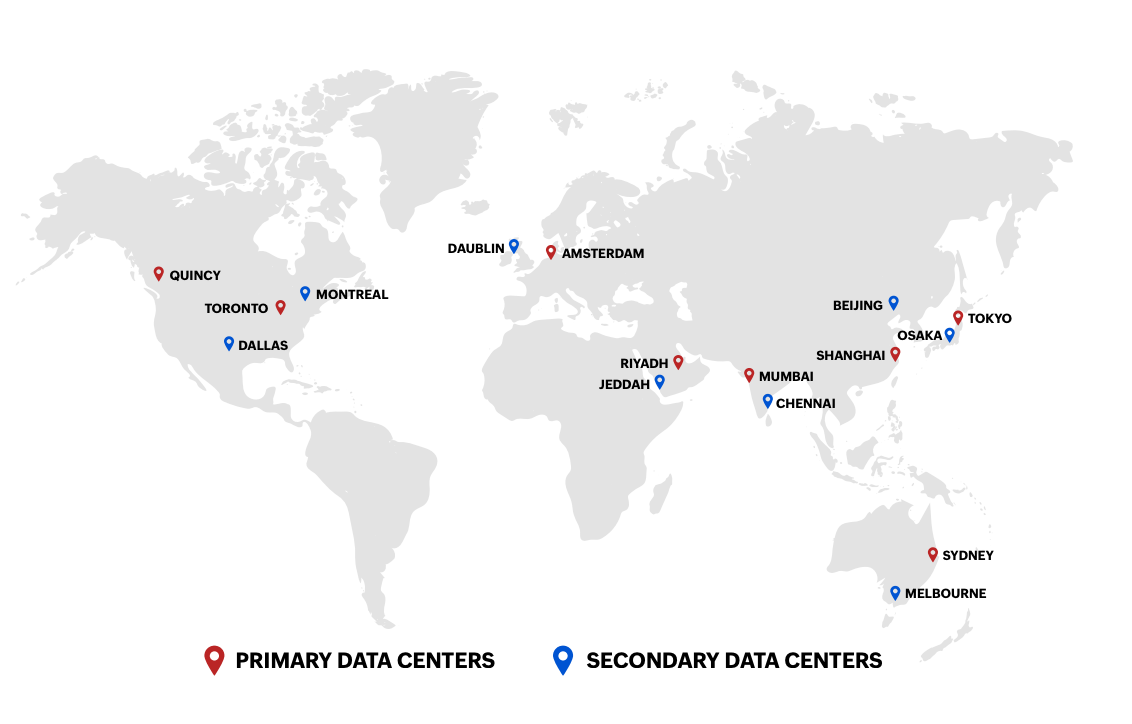

As of September 2024, Zoho Corp. operates over 18 data centers worldwide, with plans to establish data centers in almost every country by 2030. These data centers are certified with various international standards, including ISO 27001, HIPAA, and SOC Type II, ensuring high levels of security and compliance for our customers.

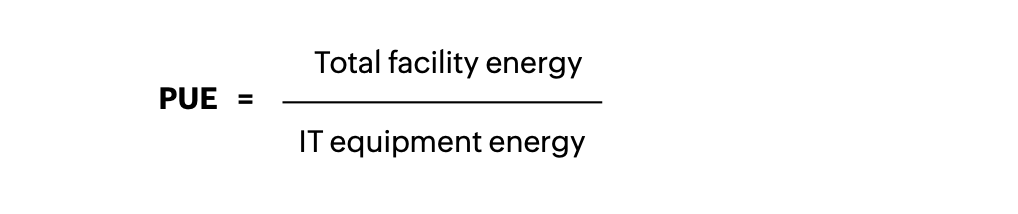

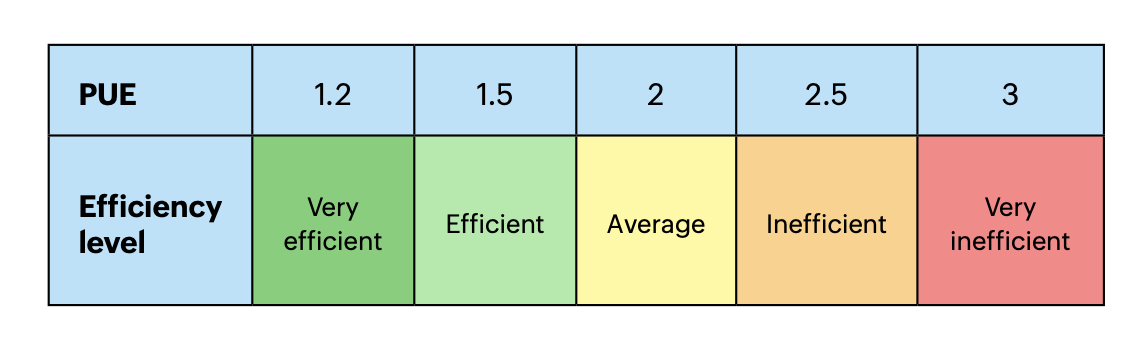

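Energy efficiency in data centers is primarily measured using power usage effectiveness (PUE), which is calculated as:

$$\text{PUE} = \frac{\text{Total facility energy consumption}}{\text{IT equipment energy consumption}}$$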

- Total facility energy consumption includes all energy used in the data center, including cooling, power distribution, and lighting.

- IT equipment energy consumption refers to the energy consumed by servers, storage devices, and networking equipment.

A perfectly efficient data center would have a PUE of 1.0, meaning all energy is used solely by the IT equipment. In reality, this number is nearly impossible to achieve. Highly efficient data centers typically achieve a PUE of about 1.1–1.4, while traditional data centers range from 1.8–2.5, meaning a significant portion of their energy is lost to cooling and infrastructure inefficiencies.
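The PUE ratio and the overhead it implies can be sketched in a few lines of Python. The function names and the sample energy figures are illustrative assumptions, not measurements from any real facility.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("Total facility energy cannot be less than IT energy")
    return total_facility_kwh / it_equipment_kwh


def overhead_share(pue_value: float) -> float:
    """Fraction of total energy spent on non-IT functions such as cooling."""
    return 1 - 1 / pue_value


# Hypothetical annual figures for a traditional facility.
value = pue(total_facility_kwh=1_800_000, it_equipment_kwh=1_000_000)
print(f"PUE: {value:.2f}, overhead share: {overhead_share(value):.0%}")
```

Read this way, a PUE of 2.0 means half of all energy entering the facility never reaches the IT equipment.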

Energy-efficient cooling techniques for data center management

Sustainably managed or green data centers implement energy-efficient cooling techniques to reduce power consumption and minimize environmental impact. Let’s take a quick look at some of these systems.

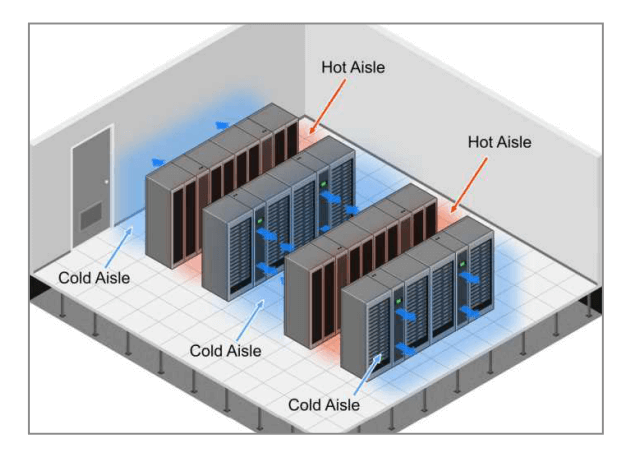

Hot/cold aisle containment

This system separates hot and cold airflows within the data center by aligning racks to direct cool air to the equipment intake and hot air to exhausts. It prevents hot air from recirculating, improving cooling efficiency.

Example: Zoho Corp.’s data center in Chennai uses hot/cold aisle containment.

Free cooling

This system uses cool outside air or cold water sources (such as lakes or underground aquifers) to cool the data center without running traditional cooling systems. It reduces reliance on energy-intensive air conditioning (i.e., HVAC) systems.

Example: Google’s data centers in Finland use seawater for cooling.

Evaporative cooling (Adiabatic cooling)

This system uses water evaporation to absorb heat and cool the air before it enters the data center. It requires significantly less energy than traditional air conditioning.

Example: Facebook’s data center in Sweden uses evaporative cooling in combination with free cooling.

Some other notable techniques, such as solar cooling, geothermal cooling, and AI-driven cooling optimization, are also used by companies to manage data centers sustainably.

Green data center practices

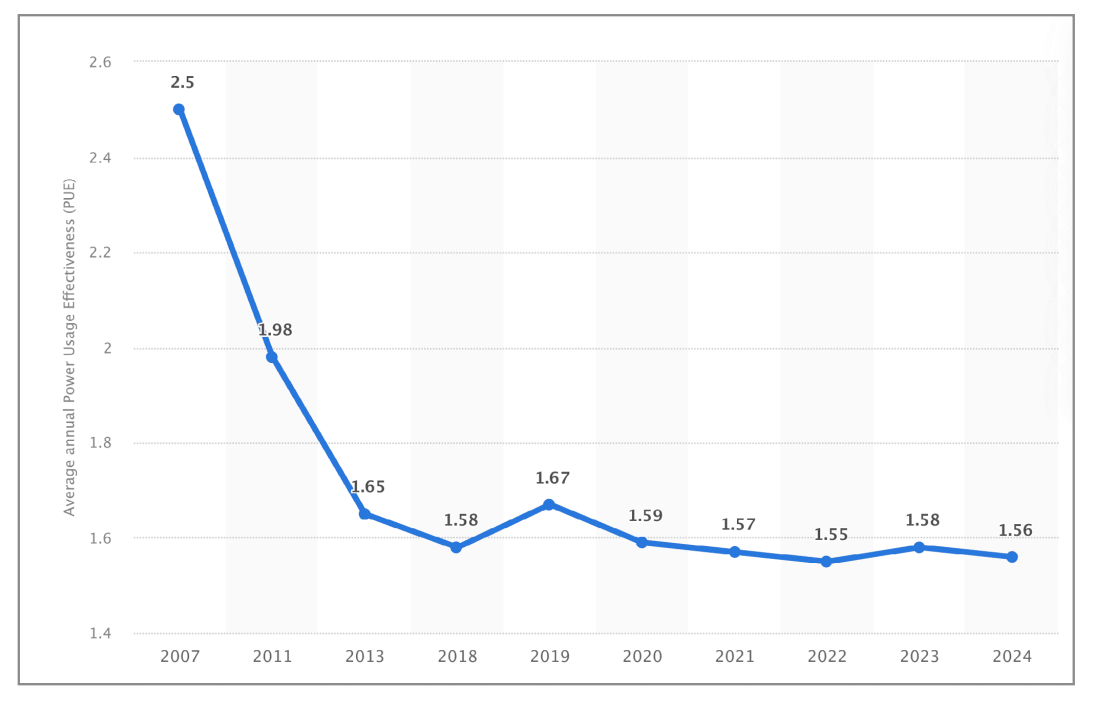

The good news? Businesses worldwide are actively working to boost their data center efficiency. A 2024 survey of data center owners and operators showed a significant improvement in the average annual PUE, which means a smaller share of total power is being used to run secondary functions like cooling.

Businesses that build their own data centers have the opportunity to prioritize sustainability for long-term gains. This means an increased focus on factors like:

- Maximized infrastructural potential: The first step is to select a location that is suitable in terms of temperature and access to energy sources. Proximity to users can further reduce the energy consumed in data transmission. Building with LEED-certified materials (which we’ll discuss in the next section) and optimizing floor space for better airflow, which reduces excess cooling needs, also make a big impact.

- Energy efficiency: A PUE between 1.2 and 1.5 is considered ideal. Businesses can optimize cooling systems, upgrade to energy-efficient hardware, and use AI-driven power management to reduce waste.

- Renewable energy: Businesses should consider a transition to solar, wind, or hydroelectric power instead of fossil fuels, especially if on-site energy generation is an option. Some businesses also choose to offset carbon emissions by investing in carbon credits or green power purchase agreements (PPAs).

- Operational modifications: Implementing suitable cooling techniques, using smart sensors to adjust cooling, and setting up closed-loop water usage systems can limit the consumption of unnecessary resources. Data centers can also use energy-efficient lighting, insulation, and building automation to lower energy use.

For businesses that opt for colocation services, i.e., renting space and power from third parties, it is important to set high sustainability standards and seek vendors who adhere to them. In India, the Ministry of Electronics and Information Technology (MeitY) is working on setting benchmarks for energy efficiency in data centers. For instance, the ministry has proposed a PUE benchmark of less than 1.35 for data centers.

At a global level, the Uptime Institute sets industry standards for data center design, reliability, and efficiency. It established the tier classification system that is now followed in many regions such as the US, Europe, and Asia.

| Tier level | Uptime (%) | Max downtime (annually) | Infrastructure | Redundancy | Suitability |
|---|---|---|---|---|---|
| Tier I | 99.671% | ~28.8 hours | Single path for power and cooling | No redundancy | Small businesses with minimal IT needs |
| Tier II | 99.741% | ~22 hours | Single path with some backup components | Partial redundancy | Mid-sized businesses needing improved reliability |
| Tier III | 99.982% | ~1.6 hours | Multiple power and cooling paths (only one active at a time) | Full redundancy for maintenance | Enterprises, SaaS providers, financial institutions |
| Tier IV | 99.995% | ~26.3 minutes | Multiple active power and cooling paths | Fully fault-tolerant; no single point of failure | Banks, stock exchanges, healthcare |

Each tier builds upon the previous one, enhancing redundancy and reducing potential downtime. Higher tiers mean greater uptime but also higher costs. Tier III is the sweet spot for most enterprises, balancing uptime and cost. Tier IV data centers offer the added advantage of an N+N model, a fully redundant infrastructure in which all critical systems are duplicated. Here, N represents the number of components (like power supplies or cooling units) required to support operations. With this model, service providers can ensure that if one system fails, an identical backup system takes over immediately without affecting operations.
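The maximum annual downtime for each tier follows directly from its uptime percentage. As a minimal sketch, assuming a 365-day year (8,760 hours), the conversion looks like this; the function name is illustrative.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, assuming a non-leap year


def max_annual_downtime_hours(uptime_percent: float) -> float:
    """Convert a guaranteed uptime percentage into allowed downtime per year."""
    if not 0 <= uptime_percent <= 100:
        raise ValueError("uptime_percent must be between 0 and 100")
    return (1 - uptime_percent / 100) * HOURS_PER_YEAR


# Uptime figures taken from the tier classification table.
tiers = {"Tier I": 99.671, "Tier II": 99.741, "Tier III": 99.982, "Tier IV": 99.995}
for tier, uptime in tiers.items():
    hours = max_annual_downtime_hours(uptime)
    print(f"{tier}: {hours:.2f} hours ({hours * 60:.1f} minutes)")
```

The same function can be used to sanity-check an uptime figure quoted in a colocation contract against the downtime a business can actually tolerate.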

Use case: Managing energy consumption in IT infrastructure

A London-based financial services company operates a large IT infrastructure, including network devices, servers, and a Tier III data center. With energy prices in the United Kingdom hitting record highs and stringent environmental regulations, the organization seeks a solution to monitor, manage, and optimize energy consumption while maintaining compliance and operational efficiency.

Challenges

With the UK experiencing volatile energy prices, the organization’s monthly electricity bill for the data center alone exceeds £50,000. The IT team suspects inefficiencies in server utilization and cooling systems but is unable to identify specific problem areas. The excessive energy costs reduce profitability and strain the organization’s IT budget, limiting its investment in other critical areas.

During non-peak hours (e.g., late nights and weekends), servers and network devices continue operating at full capacity. These systems run idle workloads, consuming power unnecessarily. This means the organization wastes significant energy, with the estimated idle power consumption accounting for 30% of the total energy bill.
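To get a feel for the scale of this waste, the two figures from the scenario can be combined. This is a rough sketch that assumes the 30% idle share applies to the £50,000 monthly data center bill, which the scenario gives only as a lower bound.

```python
MONTHLY_BILL_GBP = 50_000  # lower bound from the scenario ("exceeds £50,000")
IDLE_SHARE = 0.30          # estimated share of the bill spent on idle power


def idle_cost(monthly_bill_gbp: float, idle_share: float) -> float:
    """Portion of the monthly bill attributable to idle power consumption."""
    return monthly_bill_gbp * idle_share


monthly_idle = idle_cost(MONTHLY_BILL_GBP, IDLE_SHARE)
annual_idle = monthly_idle * 12
print(f"Idle cost per month: £{monthly_idle:,.0f}")
print(f"Idle cost per year:  £{annual_idle:,.0f}")
```

Under these assumptions, idle systems account for at least £15,000 a month, or £180,000 a year.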

The core issue is the lack of centralized visibility. The organization operates branch offices across Europe. Energy consumption data is siloed across multiple systems, making it difficult to gain a unified view of the IT infrastructure. Additionally, the UK government’s commitment to achieving net-zero emissions by 2050 imposes regulatory pressure on the organization to adopt green IT practices. Without proper means to assess its energy usage and emissions, the company could face penalties, reputational damage, and missed opportunities to attract eco-conscious clients.

Implementation

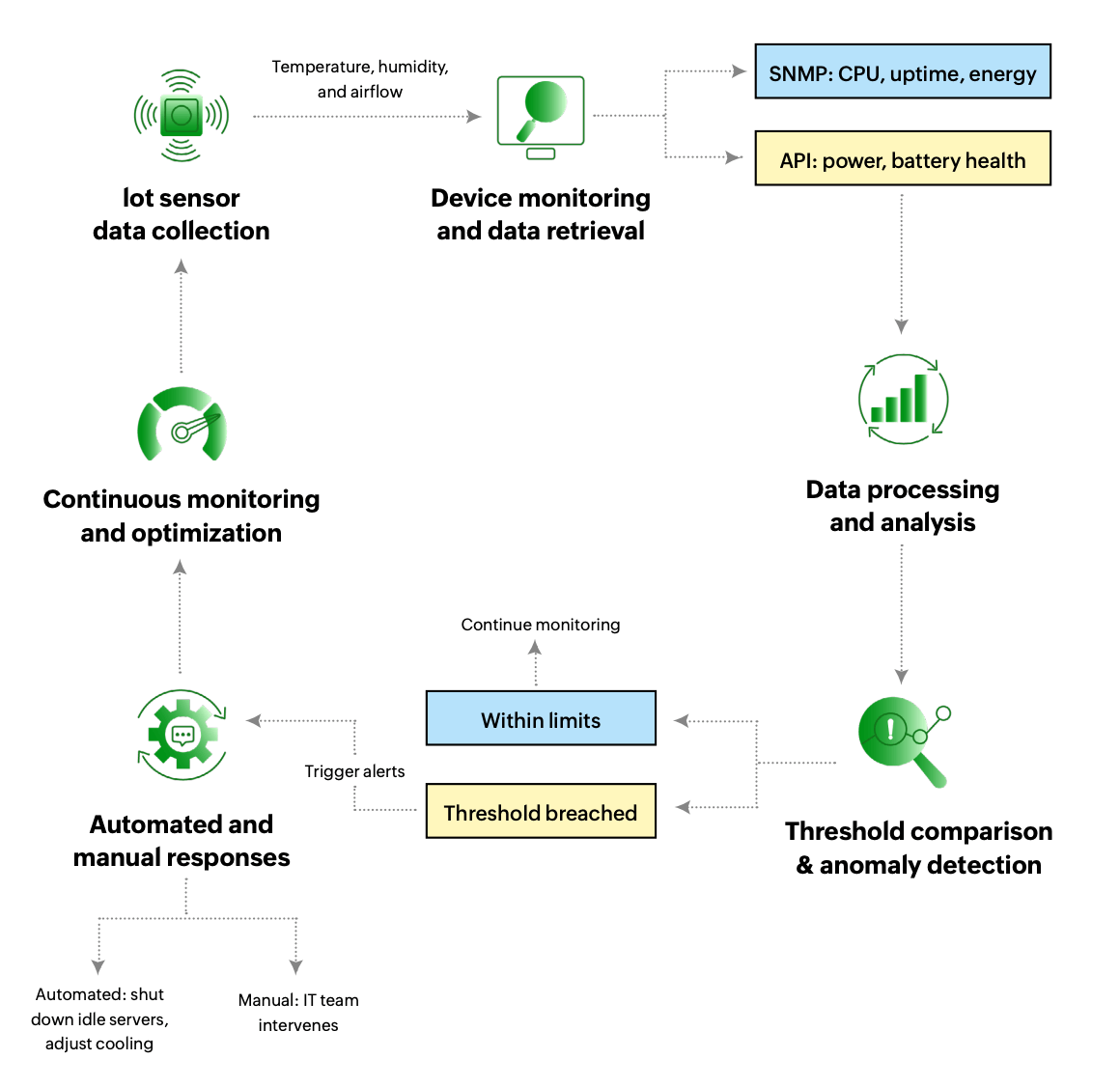

Action 1: The first issue the company must tackle is energy inefficiencies in the data centers through real-time monitoring. A network and server monitoring tool is crucial for this process. The tool is integrated with IoT sensors installed across racks to monitor temperature, humidity, and airflow. Devices are equipped with SNMP agents to provide data about their operational parameters, including energy usage, CPU load, and device uptime. The tool then queries these devices using SNMP to collect energy-related metrics. APIs allow the tool to pull real-time energy consumption data, such as power draw, temperature, and battery health. Ultimately, the tool identifies uneven cooling contributing to inefficiencies.
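One simple way to spot uneven cooling from rack sensor data is to compare each rack's inlet temperature with the median across all racks. The sketch below is an illustration only: the rack names, readings, and 3 °C tolerance are hypothetical, and it does not represent how any particular monitoring tool works internally.

```python
from statistics import median


def find_hot_racks(inlet_temps_c: dict[str, float], tolerance_c: float = 3.0) -> list[str]:
    """Return racks whose inlet temperature exceeds the median by more than tolerance_c."""
    baseline = median(inlet_temps_c.values())
    return sorted(
        rack for rack, temp in inlet_temps_c.items() if temp - baseline > tolerance_c
    )


# Hypothetical readings collected from rack-level IoT sensors.
readings = {"rack-a1": 22.0, "rack-a2": 22.5, "rack-b1": 27.5, "rack-b2": 23.0}
print(find_hot_racks(readings))
```

Using the median rather than the mean keeps a single overheated rack from skewing the baseline it is compared against.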

A proactive approach is also a must-have to stay ahead of inefficiencies. The IT team configures custom thresholds for acceptable temperature and energy usage levels and sets up alerts to notify the team of any breaches. Idle servers are automatically scheduled to shut down during low-demand hours. To optimize the workload further, underutilized servers are identified and consolidated, reducing the number of active machines. Server virtualization is implemented, reallocating workloads to fewer, more energy-efficient servers during non-peak hours.
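The threshold and scheduling logic described above can be sketched as follows. Every limit here (temperature, power draw, idle CPU level, and the low-demand window) is a hypothetical placeholder that an IT team would replace with its own values.

```python
from dataclasses import dataclass

# All limits below are hypothetical placeholders, not values from the scenario.
MAX_INLET_TEMP_C = 27.0
MAX_POWER_DRAW_W = 450.0
IDLE_CPU_PERCENT = 5.0
LOW_DEMAND_HOURS = range(0, 6)  # 00:00 to 05:59, an assumed low-demand window


@dataclass
class ServerReading:
    name: str
    cpu_load_percent: float
    power_draw_w: float
    inlet_temp_c: float


def threshold_alerts(reading: ServerReading) -> list[str]:
    """Return one alert message per breached threshold."""
    alerts = []
    if reading.inlet_temp_c > MAX_INLET_TEMP_C:
        alerts.append(f"{reading.name}: inlet temperature above {MAX_INLET_TEMP_C} C")
    if reading.power_draw_w > MAX_POWER_DRAW_W:
        alerts.append(f"{reading.name}: power draw above {MAX_POWER_DRAW_W} W")
    return alerts


def shutdown_candidates(readings: list[ServerReading], hour: int) -> list[str]:
    """Names of idle servers, reported only inside the low-demand window."""
    if hour not in LOW_DEMAND_HOURS:
        return []
    return [r.name for r in readings if r.cpu_load_percent < IDLE_CPU_PERCENT]


readings = [
    ServerReading("app-01", cpu_load_percent=62.0, power_draw_w=480.0, inlet_temp_c=24.0),
    ServerReading("app-02", cpu_load_percent=2.0, power_draw_w=210.0, inlet_temp_c=23.0),
]
for reading in readings:
    for alert in threshold_alerts(reading):
        print("ALERT:", alert)
print("Shutdown candidates at 02:00:", shutdown_candidates(readings, hour=2))
```

In practice, a candidate list like this would feed a review or consolidation step rather than trigger shutdowns blindly, since a server with low CPU load may still be serving a critical function.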

Action 2: The company relies on independent energy monitoring systems across branches, which prevents decision-makers from getting a high-level view of overall consumption. Implementing a single tool across all branches would give leadership unified visibility on a centralized dashboard and allow them to make decisions accordingly.

The greatest advantage to this approach is the cross-location comparison. Historical data from each location can be analyzed to identify variances in energy usage. Let’s say a branch office in central London is flagged for excessive energy use due to outdated cooling systems. The IT team can prioritize an upgrade, leveraging insights from energy-efficient branches to implement best practices.
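A cross-location comparison of this kind can be as simple as normalizing each branch's usage and flagging outliers against the group median. In this sketch, the branch names, the kWh-per-device figures, and the 1.25× cutoff are all hypothetical.

```python
from statistics import median


def flag_high_usage(kwh_per_device: dict[str, float], ratio: float = 1.25) -> dict[str, float]:
    """Return branches whose usage exceeds ratio x the median, with their multiple of it."""
    baseline = median(kwh_per_device.values())
    return {
        branch: round(usage / baseline, 2)
        for branch, usage in kwh_per_device.items()
        if usage > ratio * baseline
    }


# Hypothetical monthly energy use per device (kWh) at each branch.
usage = {"London": 62.0, "Paris": 41.0, "Berlin": 39.0, "Madrid": 43.0}
print(flag_high_usage(usage))
```

Normalizing per device (or per square meter, or per employee) matters here, because raw totals would simply flag the largest office rather than the least efficient one.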

Lastly, the tool can also assist with sustainability reporting and compliance. ManageEngine’s server management tool has a reporting module that allows the company to map energy consumption to carbon emissions. Monthly reports help the organization demonstrate compliance with UK environmental standards and track progress toward its net-zero goals. With this strategy, the firm addresses three crucial concepts that can save the business millions in expenses: insights, automation, and scalability.
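Mapping energy consumption to carbon emissions generally comes down to multiplying energy use by a grid emission factor. The sketch below illustrates that calculation only; it is not the reporting module's actual method, and the emission factor and monthly consumption figure are placeholders that should be replaced with the officially published factor for the relevant grid and year.

```python
# Placeholder value for illustration only; use the published factor for your grid.
EMISSION_FACTOR_KG_PER_KWH = 0.2


def emissions_tonnes(energy_kwh: float, factor_kg_per_kwh: float) -> float:
    """Estimate emissions in tonnes of CO2e from energy use and an emission factor."""
    if energy_kwh < 0 or factor_kg_per_kwh < 0:
        raise ValueError("energy and emission factor must be non-negative")
    return energy_kwh * factor_kg_per_kwh / 1000  # kg to tonnes


# Hypothetical monthly consumption for the data center.
monthly_kwh = 120_000
estimate = emissions_tonnes(monthly_kwh, EMISSION_FACTOR_KG_PER_KWH)
print(f"Estimated emissions: {estimate:.1f} tonnes CO2e")
```

Tracking this figure month over month gives the organization a simple, comparable metric for its progress toward net zero.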