The growing global focus on data centers highlights their dual role as both critical drivers of digital transformation and major consumers of energy. Today, they operate under mounting pressure to manage exploding data volumes, expanding edge deployments, and rising sustainability mandates.

If you believe your traditional monitoring strategy is good enough, it is worth asking whether it really is. Many industry incidents have underscored the consequences of inadequate visibility, including unplanned outages, service degradation, and energy inefficiencies that directly affect cost and reputation.

A single failed rack sensor, cooling fault, or rogue power draw can trigger a cascade of downtime and damage your brand before you even notice the domino effect. The following best practices help you avoid paying for that risk in cost, reputation, and strategic disadvantage.

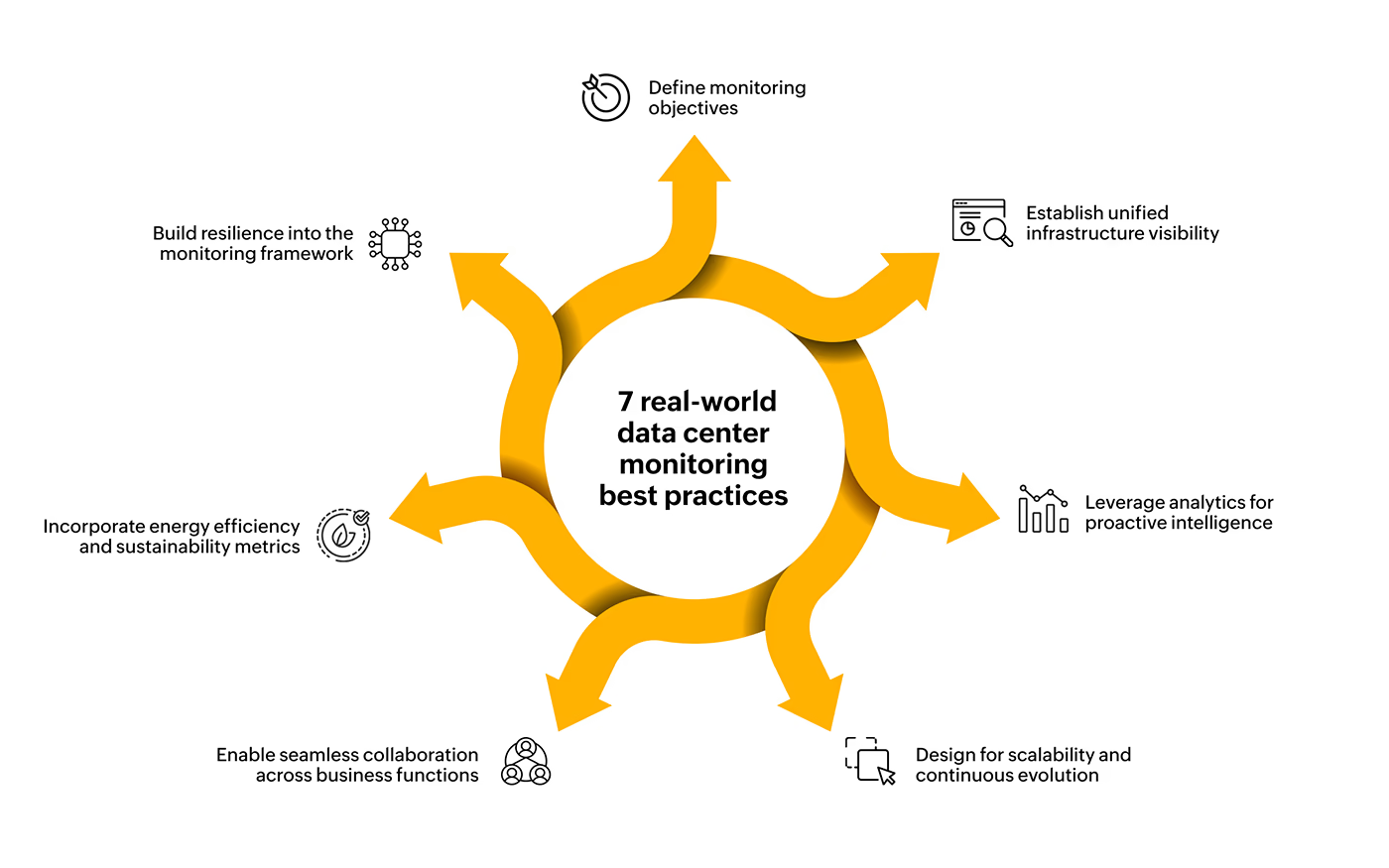

Monitoring a data center isn’t a one-size-fits-all exercise. Each organization must align monitoring goals with its core business priorities, whether that means ensuring high availability, maintaining compliance, or optimizing energy efficiency. The first step is to establish measurable objectives such as uptime thresholds, service-level expectations, and power utilization targets.

These objectives should then be tied to measurable operational metrics such as response time, Power Usage Effectiveness (PUE), latency, or packet loss rates to create a clear performance baseline. From there, organizations can define escalation paths and automated remediation workflows to ensure that any deviation from these benchmarks is addressed swiftly. This structured, metrics-driven approach not only keeps monitoring aligned with business goals but also helps teams prioritize efforts where they deliver the most value.
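To make the idea of a metrics-driven baseline concrete, here is a minimal Python sketch. It uses the standard definition of PUE (total facility energy divided by IT equipment energy) and checks current readings against objectives. The `Objective` class, the metric names, the targets, and the tolerances are hypothetical values chosen for illustration, not figures from any particular tool.

```python
from __future__ import annotations

from dataclasses import dataclass


def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


@dataclass
class Objective:
    # Hypothetical objective record: a target baseline plus an allowed deviation.
    metric: str
    target: float
    tolerance: float
    higher_is_worse: bool = True


def breaches(objectives: list[Objective], readings: dict[str, float]) -> list[str]:
    """Return the metrics whose current reading falls outside its objective."""
    out = []
    for obj in objectives:
        value = readings[obj.metric]
        if obj.higher_is_worse:
            breached = value > obj.target + obj.tolerance
        else:
            breached = value < obj.target - obj.tolerance
        if breached:
            out.append(obj.metric)  # candidate for escalation or remediation
    return out


objectives = [
    Objective("pue", target=1.5, tolerance=0.1),
    Objective("latency_ms", target=20.0, tolerance=5.0),
    Objective("uptime_pct", target=99.95, tolerance=0.0, higher_is_worse=False),
]
readings = {"pue": pue(1700.0, 1000.0), "latency_ms": 22.0, "uptime_pct": 99.90}
print(breaches(objectives, readings))  # ['pue', 'uptime_pct']
```

In a real deployment, the list returned by `breaches` would feed the escalation path or automated remediation workflow; here it is simply printed.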

Modern data centers function as tightly interwoven ecosystems where servers, storage, networks, applications, and facilities continuously interact. Restricting visibility to one layer can create blind spots and delay root-cause identification. A best practice is to establish a monitoring mix that considers both parent-child dependencies and optimal polling intervals.

Dependency mapping, for example, lets you see how application issues cascade down to network devices, while well-tuned polling intervals balance data freshness with resource efficiency. Unifying metrics from all layers, such as server and virtualization health, network latency, application performance, and environmental factors including temperature, humidity, and power distribution, within a single framework eliminates siloed troubleshooting, reduces MTTR, and provides an accurate, end-to-end view of data center health.
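Parent-child dependencies are what let a monitoring system report one root cause instead of dozens of symptoms. The sketch below assumes a simple, hypothetical dependency map in which each device points to the upstream device it depends on; when a parent is down, alerts from its descendants are treated as symptoms and suppressed.

```python
from __future__ import annotations


def root_causes(parents: dict[str, str | None], down: set[str]) -> set[str]:
    """Return the failed nodes that have no failed ancestor in the dependency map."""
    roots = set()
    for node in down:
        seen = {node}
        parent = parents.get(node)
        upstream_failed = False
        while parent is not None and parent not in seen:  # `seen` stops infinite loops on cycles
            if parent in down:
                upstream_failed = True
                break
            seen.add(parent)
            parent = parents.get(parent)
        if not upstream_failed:
            roots.add(node)
    return roots


# Hypothetical topology: application -> server -> top-of-rack switch -> core switch
parents = {
    "core-switch": None,
    "tor-switch-a": "core-switch",
    "server-1": "tor-switch-a",
    "server-2": "tor-switch-a",
    "app-1": "server-1",
}
down = {"tor-switch-a", "server-1", "server-2", "app-1"}
print(root_causes(parents, down))  # {'tor-switch-a'}
```

Four devices are failing, but only the top-of-rack switch is reported as a root cause; the server and application alerts are downstream symptoms.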

As workloads fluctuate and dependencies grow, static rules often trigger floods of irrelevant alerts or miss early warning signs altogether. Intelligent monitoring powered by behavior analysis and AI addresses this challenge by learning from historical trends and identifying anomalies before they escalate into incidents.

By correlating related events and adapting thresholds dynamically based on usage patterns or seasonal variations, predictive analytics helps teams anticipate issues rather than react to them. This shift from reactive monitoring to proactive insight minimizes false positives, reduces alert fatigue, and ensures that operations teams can focus on preventing disruptions instead of constantly firefighting them.
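Production anomaly detection is usually far more sophisticated, but the core idea of a threshold that adapts to recent behavior can be sketched with a rolling baseline. This is a simple statistical stand-in (mean plus or minus `k` standard deviations over a sliding window), not the AI or behavior-analysis engine of any specific product; the window size, `k`, and the temperature readings are assumed values.

```python
from collections import deque
from statistics import mean, pstdev


class DynamicThreshold:
    """Flag values that deviate more than k standard deviations from a rolling baseline."""

    def __init__(self, window: int = 20, k: float = 3.0, min_samples: int = 5):
        self.history = deque(maxlen=window)  # oldest samples drop off automatically
        self.k = k
        self.min_samples = min_samples

    def observe(self, value: float) -> bool:
        """Return True if `value` is anomalous, then add it to the baseline."""
        anomalous = False
        if len(self.history) >= self.min_samples:
            mu = mean(self.history)
            sigma = pstdev(self.history)
            anomalous = abs(value - mu) > self.k * sigma
        # Every value is learned, so the band follows gradual shifts in load.
        self.history.append(value)
        return anomalous


detector = DynamicThreshold(window=20, k=3.0)
rack_inlet_temps_c = [24.0, 24.5, 23.8, 24.2, 24.1, 23.9, 24.3, 31.0]  # hypothetical readings
flags = [detector.observe(t) for t in rack_inlet_temps_c]
print(flags)  # [False, False, False, False, False, False, False, True]
```

Learning every value keeps the band adaptive, at the cost of letting a sustained anomaly gradually become the new normal; excluding flagged values from the history is the opposite trade-off.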

As organizations adopt edge computing, containerized workloads, and multi-cloud environments, monitoring systems must scale and evolve accordingly. Infrastructures today are dynamic. New devices and services frequently appear, and capacity needs fluctuate. To stay ahead, IT teams should pair forecasting and capacity planning directly with business objectives, ensuring resources scale predictively rather than reactively.
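Predictive scaling can start with something as simple as a trend line. The sketch below fits an ordinary least-squares line to hypothetical daily storage usage and estimates how many days remain before usage crosses an assumed planning threshold (80% of capacity here). Real capacity planning would also account for seasonality and planned business growth, which this example ignores.

```python
from __future__ import annotations


def linear_fit(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def days_until_threshold(daily_usage: list[float], capacity: float, threshold: float = 0.8) -> float | None:
    """Days from the last sample until the trend reaches threshold * capacity.

    Returns None if usage is flat or shrinking.
    """
    xs = [float(i) for i in range(len(daily_usage))]
    slope, intercept = linear_fit(xs, daily_usage)
    if slope <= 0:
        return None
    crossing_day = (capacity * threshold - intercept) / slope
    return max(0.0, crossing_day - xs[-1])  # 0.0 means the threshold is already crossed


usage_tb = [100.0, 102.0, 104.0, 106.0, 108.0]  # hypothetical daily samples
print(days_until_threshold(usage_tb, capacity=200.0))  # 26.0
```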

Monitoring data holds value only when it’s actionable. And action depends on context. Role-based dashboards enable IT teams to focus on granular metrics, while business stakeholders monitor service health and impact. Beyond visibility, operational alignment requires deeper integration between monitoring and IT service management.

Synchronizing the two through auto-ticketing, CMDB relationship mapping, and correlation across IT tools allows incidents to be captured, categorized, and resolved faster. Defined escalation paths and transparent collaboration among IT, facilities, and business teams reduce miscommunication and strengthen organizational resilience.
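Auto-ticketing works best when related alerts are collapsed before a ticket is raised. The sketch below groups repeat alerts for the same device and alert type that arrive within an assumed five-minute window into a single ticket. The `Alert` and `Ticket` types and the device names are hypothetical stand-ins; a real integration would call the ITSM tool's own API, which is not shown here.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Alert:
    timestamp: float  # seconds since epoch
    device: str
    kind: str


@dataclass
class Ticket:
    device: str
    kind: str
    first_seen: float
    last_seen: float
    count: int = 1


def correlate(alerts: list[Alert], window_s: float = 300.0) -> list[Ticket]:
    """Collapse repeat alerts on the same device and kind into one ticket per burst."""
    latest = {}   # (device, kind) -> most recent ticket for that pair
    tickets = []
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        key = (alert.device, alert.kind)
        ticket = latest.get(key)
        if ticket is not None and alert.timestamp - ticket.last_seen <= window_s:
            ticket.last_seen = alert.timestamp  # same burst: update the existing ticket
            ticket.count += 1
        else:
            ticket = Ticket(alert.device, alert.kind, alert.timestamp, alert.timestamp)
            latest[key] = ticket
            tickets.append(ticket)  # new burst: raise a new ticket
    return tickets


alerts = [
    Alert(0.0, "pdu-3", "overcurrent"),
    Alert(60.0, "pdu-3", "overcurrent"),
    Alert(120.0, "crac-1", "high_temp"),
    Alert(1000.0, "pdu-3", "overcurrent"),
]
for t in correlate(alerts):
    print(t.device, t.kind, t.count)
```

Four raw alerts become three tickets: the two early `pdu-3` alerts share one, while the late `pdu-3` alert falls outside the window and opens a new one.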

Over time, analyzing power consumption patterns supports accurate capacity planning, sustainability reporting, and cost reduction through smarter power management. Energy-aware monitoring not only improves operational efficiency but also aligns IT performance with organizational sustainability goals.

Even the most advanced monitoring setup can become a single point of failure if it isn’t continuously validated. Building redundancy and failover mechanisms ensures that even if a collector or monitoring node fails, another one seamlessly takes over without losing visibility. This not only minimizes blind spots but also helps maintain consistent service-level awareness during outages or maintenance windows.
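One common way to implement collector failover is heartbeat-based reassignment. In the sketch below, each collector reports a heartbeat timestamp; any collector silent for longer than an assumed 30-second timeout is treated as failed, and its devices move to the least-loaded healthy collector. Collector and device names are hypothetical, and this does not describe how any particular product performs failover.

```python
from __future__ import annotations


def rebalance(
    assignments: dict[str, str],
    heartbeats: dict[str, float],
    now: float,
    timeout_s: float = 30.0,
) -> dict[str, str]:
    """Reassign devices owned by collectors whose heartbeat is older than timeout_s."""
    healthy = sorted(c for c, last in heartbeats.items() if now - last <= timeout_s)
    if not healthy:
        raise RuntimeError("no healthy collectors available")
    # Current load of each healthy collector, used to pick the least-loaded target.
    load = {c: 0 for c in healthy}
    for collector in assignments.values():
        if collector in load:
            load[collector] += 1
    result = {}
    for device in sorted(assignments):
        collector = assignments[device]
        if collector not in load:  # owner missed its heartbeat (or is unknown)
            collector = min(healthy, key=lambda c: load[c])
            load[collector] += 1
        result[device] = collector
    return result


assignments = {"sw-1": "col-a", "sw-2": "col-a", "ups-1": "col-b", "crac-1": "col-b", "pdu-1": "col-c"}
heartbeats = {"col-a": 100.0, "col-b": 40.0, "col-c": 95.0}
new = rebalance(assignments, heartbeats, now=105.0)
print(new["crac-1"], new["ups-1"])  # col-c col-a
```

Because `col-b` has been silent for 65 seconds, its two devices are spread across `col-a` and `col-c`, so monitoring of those devices continues without a gap.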

Additionally, maintaining audit-ready logs and compliance checkpoints is critical in regulated environments. These records provide traceability for performance data, configuration changes, and user actions, thereby helping IT teams prove compliance and investigate anomalies more effectively.
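Audit-ready logs are more useful when tampering can be detected. One well-known technique, sketched below, is a hash chain: each entry stores the SHA-256 hash of the previous entry, so editing any past record breaks verification. The field names, actors, and actions are assumptions for illustration; regulated environments typically also need secure storage and retention controls that this sketch does not cover.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same entry always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list, timestamp: float, actor: str, action: str, details: dict) -> dict:
    """Append a record linked to the previous one by its hash."""
    body = {
        "seq": len(log),
        "timestamp": timestamp,
        "actor": actor,
        "action": action,
        "details": details,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry = dict(body, hash=_digest(body))
    log.append(entry)
    return entry


def verify(log: list) -> bool:
    """Recompute every hash and link; False means the log was altered."""
    prev_hash = GENESIS
    for i, entry in enumerate(log):
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["seq"] != i or body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, 1700000000.0, "alice", "threshold_change", {"metric": "pue", "old": 1.6, "new": 1.5})
append_entry(audit_log, 1700000060.0, "bob", "ack_alert", {"alert_id": "A-1024"})
print(verify(audit_log))  # True
audit_log[0]["details"]["new"] = 1.8  # simulate tampering with a past record
print(verify(audit_log))  # False
```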

Today’s data centers are more heterogeneous than ever, blending legacy infrastructure with virtualized, containerized, and cloud-native environments. As operations scale and security stakes rise, organizations can no longer afford fragmented visibility or delayed responses. Modern IT teams need Data Center Infrastructure Management (DCIM) tools that unify these moving parts into a single, intelligent view.

OpManager, a robust data center monitoring tool, brings these best practices together by offering unified monitoring across IT, network, and environmental parameters. With its scalable architecture, distributed collectors, and intelligent alerting, OpManager helps organizations build the kind of resilient, end-to-end monitoring framework modern data centers demand.

The focus is shifting from merely collecting data to interpreting it intelligently, turning visibility into resilience, and resilience into sustained business reliability. Is your business keeping up with this evolution? If not, OpManager can help!