The agility of containerized applications must be matched by the precision of their oversight. As Docker Swarm clusters scale to meet the demands of hybrid and remote workforces, manual monitoring becomes a liability.

ManageEngine Applications Manager delivers an agentless, enterprise-grade monitoring solution designed to provide comprehensive visibility into Swarm architectures. By polling the Docker API rather than installing agents on each node, it removes the overhead of agent management while providing insight at every tier, from high-level executive summaries down to granular task metrics.

Deep-tier visibility and performance optimization

Visibility is only as valuable as the insights it produces. ManageEngine Applications Manager provides a multi-layered view of your container ecosystem so you can see how every resource is being used. By centralizing these critical data points, Applications Manager helps you achieve the following operational benefits:

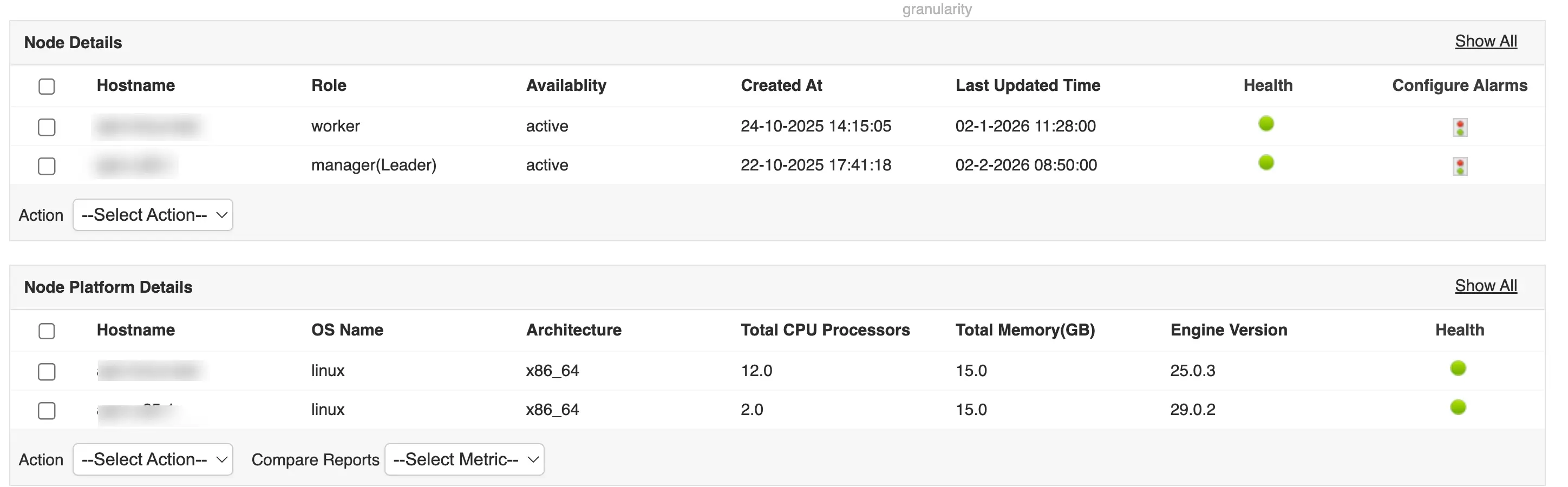

Ensure structural integrity by monitoring cluster and node metrics

The health of your swarm begins at the host level. Monitoring these core components ensures the foundation of your cluster remains stable. Applications Manager facilitates this by tracking:

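- Manager quorum status: Swarm managers rely on Raft consensus, which requires a majority of managers to be reachable. If quorum is lost, the swarm can no longer process deployment or scaling commands, even though tasks that are already running continue to run. Monitoring manager health helps you catch a failing manager before the cluster reaches that point.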

- Node availability: High availability is the primary objective of any swarm. Applications Manager helps you detect hardware or network failures quickly, so you can verify that Swarm has rescheduled the affected tasks onto healthy nodes.
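
To make the quorum arithmetic concrete, here is a minimal Python sketch that summarizes manager quorum and node readiness from a list of node records. It illustrates the idea only and is not how Applications Manager is implemented. The record shape (`Spec.Role`, `Status.State`, `ManagerStatus.Reachability`, `Description.Hostname`) is an assumption based on the node list returned by the Docker Engine API, and the function names and sample data are hypothetical.

```python
from typing import Any


def quorum_size(manager_count: int) -> int:
    """Raft needs a strict majority of managers: floor(N / 2) + 1."""
    return manager_count // 2 + 1


def summarize_swarm(nodes: list[dict[str, Any]]) -> dict[str, Any]:
    """Summarize manager quorum and node readiness from a node list."""
    managers = [n for n in nodes if n.get("Spec", {}).get("Role") == "manager"]
    reachable = sum(
        1 for n in managers
        if n.get("ManagerStatus", {}).get("Reachability") == "reachable"
    )
    needed = quorum_size(len(managers)) if managers else 0
    not_ready = [
        n.get("Description", {}).get("Hostname", n.get("ID", "unknown"))
        for n in nodes
        if n.get("Status", {}).get("State") != "ready"
    ]
    return {
        "managers": len(managers),
        "reachable_managers": reachable,
        "quorum": needed,
        "has_quorum": bool(managers) and reachable >= needed,
        # How many more managers can fail before quorum is lost.
        "spare_managers": max(reachable - needed, 0),
        "nodes_not_ready": not_ready,
    }


def _node(name: str, role: str, state: str, reachability: str = "") -> dict[str, Any]:
    """Build a hypothetical node record in the assumed API shape."""
    node: dict[str, Any] = {
        "ID": name,
        "Description": {"Hostname": name},
        "Spec": {"Role": role},
        "Status": {"State": state},
    }
    if role == "manager":
        node["ManagerStatus"] = {"Reachability": reachability}
    return node


# Hypothetical sample: three managers (one unreachable) and one worker.
sample_nodes = [
    _node("mgr-1", "manager", "ready", "reachable"),
    _node("mgr-2", "manager", "ready", "reachable"),
    _node("mgr-3", "manager", "down", "unreachable"),
    _node("wrk-1", "worker", "ready"),
]
print(summarize_swarm(sample_nodes))
```

In this sample the swarm still has quorum (two of three managers are reachable), but `spare_managers` is zero, so one more manager failure would block management operations.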

Guarantee service availability

Services represent your application's desired state. Monitoring these metrics ensures that your actual runtime environment consistently matches your configuration.

- Replica count: If you define five replicas but only three are functional, your application is at risk. Monitoring this metric catches "silent failures" where services are technically active but significantly under-provisioned.

- Task health and restart loops: Constant task restarts often indicate a misconfiguration or an image with a resource leak. Applications Manager tracks restart frequency to help isolate code-level bugs or environment incompatibilities before they cause a cascading failure.

- Service response latency: High latency across a service typically indicates an overloaded overlay network or a database bottleneck. Monitoring this is critical for maintaining your user experience (UX) SLAs.
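
The replica check described above can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than the product's own logic. It assumes service and task records shaped like those returned by the Docker Engine API (`Spec.Mode.Replicated.Replicas` on services, `ServiceID` and `Status.State` on tasks). The function name and sample data are hypothetical.

```python
from collections import Counter
from typing import Any


def replica_shortfalls(
    services: list[dict[str, Any]],
    tasks: list[dict[str, Any]],
) -> dict[str, tuple[int, int]]:
    """Return {service name: (running, desired)} for under-provisioned services."""
    running = Counter(
        t["ServiceID"]
        for t in tasks
        if t.get("Status", {}).get("State") == "running"
    )
    report: dict[str, tuple[int, int]] = {}
    for svc in services:
        replicated = svc.get("Spec", {}).get("Mode", {}).get("Replicated")
        if replicated is None:
            continue  # global services have no fixed replica count
        desired = replicated.get("Replicas", 0)
        actual = running[svc["ID"]]
        if actual < desired:
            report[svc["Spec"]["Name"]] = (actual, desired)
    return report


# Hypothetical sample: "web" wants five replicas but only three are running.
sample_services = [
    {"ID": "svc-web", "Spec": {"Name": "web", "Mode": {"Replicated": {"Replicas": 5}}}},
    {"ID": "svc-db", "Spec": {"Name": "db", "Mode": {"Replicated": {"Replicas": 1}}}},
    {"ID": "svc-log", "Spec": {"Name": "log-agent", "Mode": {"Global": {}}}},
]
sample_tasks = (
    [{"ServiceID": "svc-web", "Status": {"State": "running"}}] * 3
    + [{"ServiceID": "svc-web", "Status": {"State": "failed"}}] * 2
    + [{"ServiceID": "svc-db", "Status": {"State": "running"}}]
)
print(replica_shortfalls(sample_services, sample_tasks))
```

Counting only tasks in the `running` state is a deliberate simplification. A fuller check would also look at how often failed tasks appear over time to spot restart loops.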

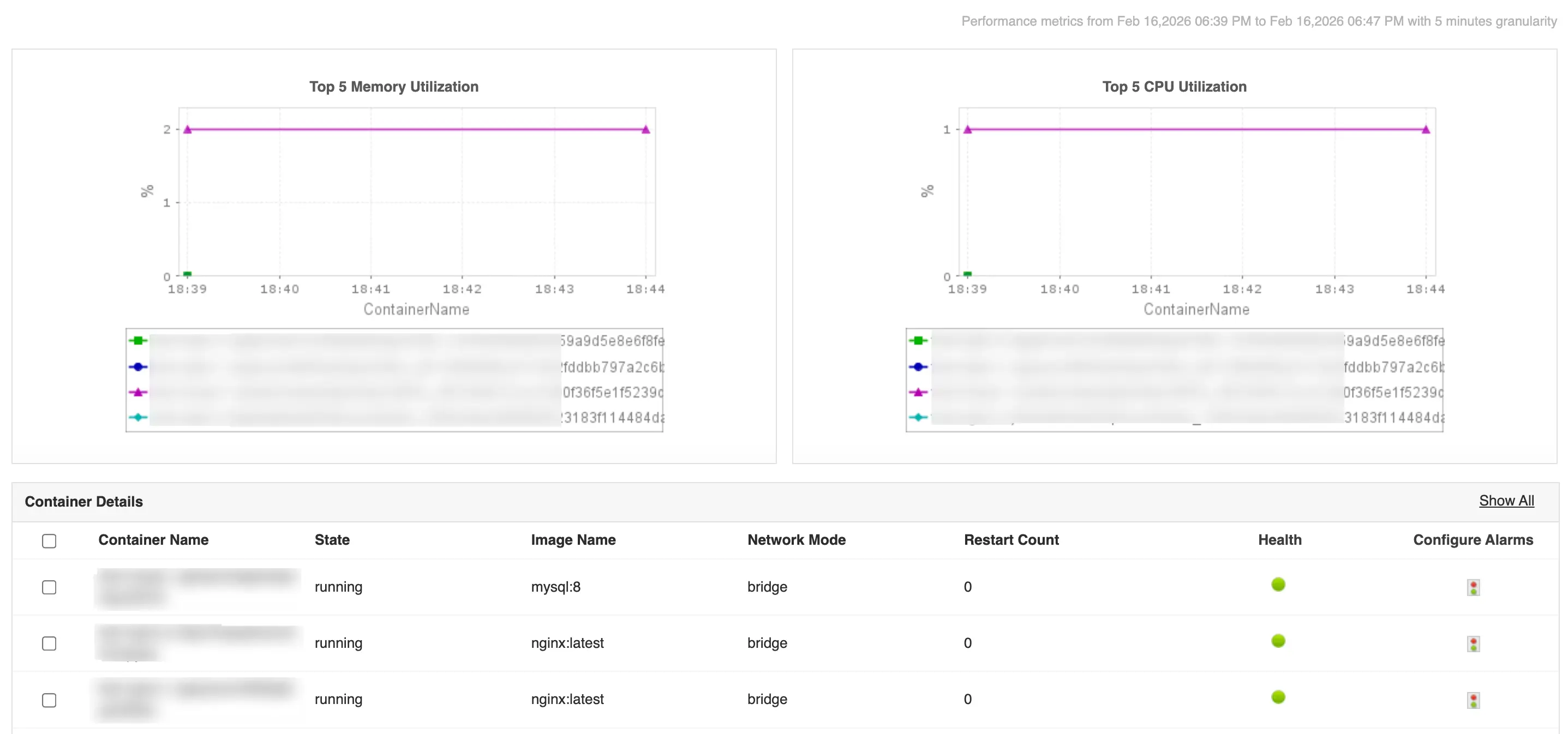

Granular performance tuning with container-level metrics

Container-level metrics sit at the intersection of FinOps and technical performance. Applications Manager's container monitoring capability provides the granularity needed to bridge the gap between resource spending and application speed.

- CPU throttling: A container's average CPU usage may sit below its defined limit while the Linux kernel still throttles it during short bursts, causing sluggish performance. Tracking these events helps you fine-tune CPU limits and reservations for mission-critical microservices.

- Memory usage and OOM kills: Out-of-memory (OOM) events are a common cause of sudden container termination. Monitoring memory trends allows you to set precise limits, preventing a single container from crashing an entire host.

- Network I/O and error rates: In microservices architectures, internal traffic is dense. Monitoring container-level network I/O helps identify congestion or communication failures between services residing on different nodes.
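
As a rough illustration of the first two metrics, the sketch below derives a throttling ratio and a memory utilization figure from container stats samples. It assumes samples shaped like the Docker Engine API stats output, where `cpu_stats.throttling_data` holds cumulative `periods` and `throttled_periods` counters and `memory_stats` holds `usage` and `limit` in bytes. The function names and numbers are hypothetical, and this is not a description of how Applications Manager computes its metrics.

```python
from typing import Any


def throttle_ratio(prev: dict[str, Any], curr: dict[str, Any]) -> float:
    """Fraction of scheduler periods that were throttled between two samples.

    The counters are cumulative, so the ratio is computed on the deltas.
    """
    before = prev["cpu_stats"]["throttling_data"]
    after = curr["cpu_stats"]["throttling_data"]
    periods = after["periods"] - before["periods"]
    throttled = after["throttled_periods"] - before["throttled_periods"]
    return throttled / periods if periods > 0 else 0.0


def memory_utilization(sample: dict[str, Any]) -> float:
    """Memory usage as a fraction of the container's limit (0.0 if no limit)."""
    mem = sample["memory_stats"]
    limit = mem.get("limit", 0)
    return mem["usage"] / limit if limit else 0.0


# Hypothetical samples taken one polling interval apart.
earlier = {
    "cpu_stats": {"throttling_data": {"periods": 1000, "throttled_periods": 50}},
    "memory_stats": {"usage": 300 * 1024**2, "limit": 512 * 1024**2},
}
later = {
    "cpu_stats": {"throttling_data": {"periods": 1200, "throttled_periods": 110}},
    "memory_stats": {"usage": 384 * 1024**2, "limit": 512 * 1024**2},
}

print(f"throttled periods: {throttle_ratio(earlier, later):.0%}")
print(f"memory utilization: {memory_utilization(later):.0%}")
```

A container that is throttled in a large share of periods while its average CPU usage looks modest is a candidate for a higher CPU limit. Memory utilization that keeps climbing toward 100% suggests an OOM kill is approaching.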

Image and storage metrics for governance and security

Applications Manager's Docker Swarm monitor extends its reach into the storage and security layers to ensure long-term cluster health.

- Image vulnerability and size: Monitoring swarm images helps you confirm that only authorized, secure versions are in production and spot images that have grown unexpectedly large.

- Disk I/O and volume usage: While containers are ephemeral, their data is not. Monitoring disk I/O prevents storage bottlenecks that could otherwise freeze database containers or critical logging services.

Advanced analytics with AI-based reports

One of the most significant advantages of using Applications Manager is the ability to look into the future of your infrastructure. Instead of reacting to a full disk or an overloaded node, you can utilize:

- Machine learning forecasts: Use historical data to estimate when your cluster will reach its capacity limits.

- Informed scaling: Detailed reports indicate which nodes are over-utilized and which are idle, allowing for precise rebalancing of your swarm.

- Budgetary foresight: Use growth trend data to justify infrastructure upgrades well before they become an emergency.
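
To show the general idea behind trend-based capacity forecasting, the sketch below fits a straight line to daily disk usage and extrapolates to the point where usage meets capacity. Ordinary least squares is a deliberately simple stand-in here; it is not the forecasting model Applications Manager uses, and the function name and figures are hypothetical.

```python
from typing import Optional


def days_until_full(usage_gb: list[float], capacity_gb: float) -> Optional[float]:
    """Estimate days from the last sample until usage reaches capacity.

    Fits a least-squares line to one sample per day. Returns None when
    there are too few samples or usage is not growing.
    """
    n = len(usage_gb)
    if n < 2:
        return None
    mean_x = (n - 1) / 2
    mean_y = sum(usage_gb) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(usage_gb))
    slope = sxy / sxx  # growth in GB per day
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    day_full = (capacity_gb - intercept) / slope
    return max(day_full - (n - 1), 0.0)


# Hypothetical history: usage grows by 10 GB per day on a 200 GB volume.
history = [100.0, 110.0, 120.0, 130.0, 140.0]
print(days_until_full(history, capacity_gb=200.0))
```

Real usage is rarely this linear, so an estimate like this is best read as a planning signal rather than a precise date.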

The agentless advantage

Traditional monitoring is often hindered by "agent sprawl": the resource-heavy requirement of installing software on every node. Applications Manager avoids this with a lightweight, API-first approach.

- Accelerated onboarding: Deploy in under 5 minutes via the Docker Remote API.

- Zero-overhead scaling: Effortlessly monitor massive clusters (1000+ nodes) without degrading host performance.

- Dynamic dependency mapping: Automatically discover and map service relationships, which reduces manual configuration by 90%.

Ecosystem integration & governance

Applications Manager acts as the central intelligence hub for your DevOps and IT service management (ITSM) stacks.

- Multi-channel alerting: Instant notifications via email, Slack, or direct ITSM integration, with automated escalation paths.

- Full-stack observability: Correlate infrastructure health with application-layer traces (Java, .NET, SQL) for true end-to-end visibility.

- Compliance & reporting: Generate executive-ready PDF reports on uptime SLAs and image vulnerability scans to satisfy rigorous audit requirements.

Investing in professional Docker monitoring is an investment in business continuity. By reducing downtime, optimizing cloud spend, and empowering IT teams with AI-driven insights, ManageEngine helps your infrastructure remain a competitive advantage rather than an operational burden.